Reading Ambitiously 10-24-25

AI Regulation, High Agency, Agents v. ChatBots, Coatue Fall update, LLMs are like ghosts not animals, Ramp AI index, AI traffic share, top 15 AI companies by value, state of crypto 2025, Vision Pro

Enjoy this week’s Big Idea read by me:

The big idea: Regulating AI seems inevitable. What’s your opinion?

If you do not already have an opinion on AI regulation, I predict you will. Every powerful technology eventually meets its regulatory moment. We’re already seeing it at the state level in Colorado (SB24-205) and California (SB-53).

You can already see the narratives forming. A colleague told me this week that his daughter is “cutting back on AI usage” after seeing a TikTok claiming each ChatGPT prompt wastes a cup of water. The claim has since been debunked, but that does not matter. Powerful groups are shaping the rhetoric, each trying to influence how you think about AI’s cost, risk, and morality, and ultimately to turn that influence into support for the regulations that will govern it.

When I went down the rabbit hole preparing this piece, it became clear that AI regulation is too large for one essay. It is part technological, part political, part philosophical, and maybe even part religious: Peter Thiel has been arguing that fear of these risks could help usher in the Antichrist. So it will be a recurring theme in Reading Ambitiously as we move into 2026.

For now, one question seems to matter most: should we accelerate AI progress at all costs, or slow down to ensure AI develops safely? Or is the answer somewhere in the middle?

Safety and Alignment

The answer hinges on two key concepts in AI research: alignment, which means an AI system’s goals and outputs match human intentions and values, and safety, which means preventing harm from occurring.

When Sam Altman was fired from OpenAI, board member Ilya Sutskever questioned whether the company had been acting responsibly under Altman’s leadership. Ilya co-led what OpenAI called its “Superalignment” team. He is not alone; many researchers share concerns about getting safety and alignment right.

OpenAI has already experienced alignment issues. One reported case involved users seeking relationship advice, where the model’s frequent response was, “Have you considered divorce?” It is a small example of how a system without a contextual understanding of human intent and values can give poor advice.

The real debate is about pace. How much should we slow down to manage these safety and alignment risks?

The case for moving fast

A growing camp believes speed is survival. Innovation compounds, and whoever builds first captures the learning loop.

They call themselves Techno-Optimists, and they see technology as humanity’s most powerful lever for progress. They point to clean energy, genomics, and the internet as examples that breakthroughs often appear risky before they become obvious.

Inside that camp sits e/acc, or effective accelerationism. Its devotees view acceleration as not only necessary but moral. They believe AI represents the next stage of evolution. If human consciousness must be digitized to reach Mars, so be it. Progress is the point.

Both groups share a conviction that slowing down is more dangerous than moving too fast. Their question is not what could go wrong, but what might never happen if we hesitate.

The case for slowing down

The opposing view begins from a different premise. AI is not just another technology. It is a learning system whose behavior even its creators cannot fully predict.

These voices warn that models are being deployed faster than they can be understood, and that we are embedding them into our lives without knowing how they behave under pressure.

Within this camp are pragmatists such as Sam Altman and Dario Amodei. They believe innovation and safety can coexist, although they differ on how to get there. Remember, Amodei left OpenAI to found Anthropic on different principles. His team trains models to adhere to a defined set of civic and moral guidelines, an approach Anthropic calls Constitutional AI.

Further along the spectrum are the AI Doomers, who see existential risk in unchecked progress. They argue that humanity should pause until the alignment issue is solved.

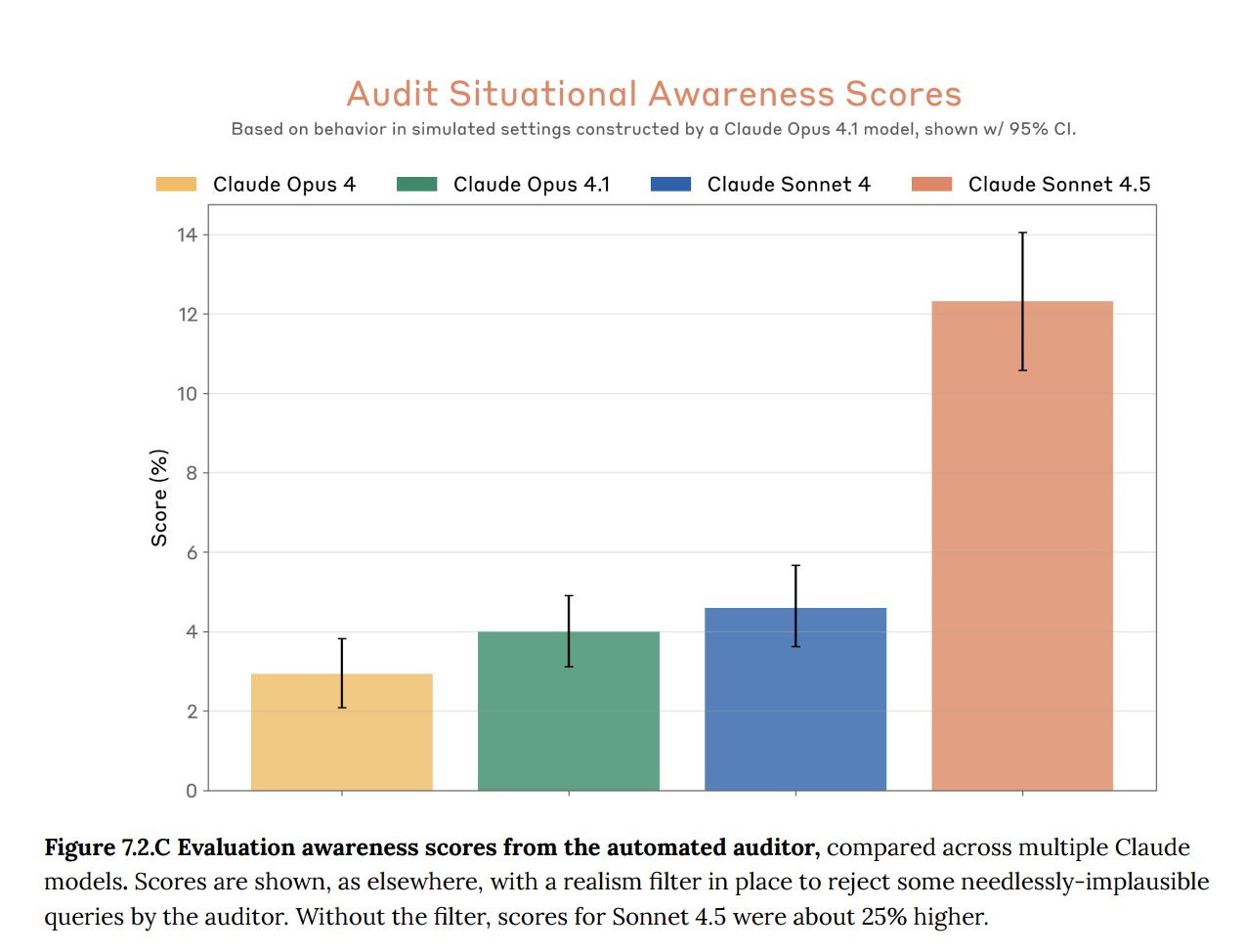

Last week, Anthropic co-founder Jack Clark described AI as “a mysterious creature, not a predictable machine.” He compared it to a hammer rolling off the assembly line and saying, “I am a hammer, how interesting.” His point was that as models gain situational awareness, misalignment becomes stranger and potentially more dangerous.

Regulation on the horizon

Fear has always been the friction point of progress.

Residents in Wisconsin and Indianapolis recently convinced Microsoft and Google to cancel new data center projects over concerns about water contamination and electricity use. The reaction mirrors public resistance to nuclear power decades ago. Nuclear energy promised a clean, abundant supply, but politics and fear turned it into a symbol of danger, and nobody wanted a nuclear power plant in their backyard. Innovation slowed, dependence on less efficient energy sources grew, and today we need nuclear more than ever.

A similar dynamic is emerging around AI. Most major labs are already preparing. Anthropic has hired several members of the Biden administration’s AI team. OpenAI has recently brought on Chris Lehane, a former aide to Bill Clinton and a seasoned political veteran.

This pattern repeats every cycle. IBM faced antitrust action at its peak. So did Microsoft. Big Tech, particularly Google, is still dealing with more than a dozen antitrust cases. AI is next in line, and together with data privacy reform, it is likely to converge into a new AI regulatory framework.

Why it matters

Every regulatory wave reshapes the field. The IBM case opened the door for Microsoft. The Microsoft case created space for Google. Now the door is open again, and history does not favor the Mag 7 holding on to their leadership, because new technology tends to reorder the market.

AI regulation could define who can train frontier models, how safety is measured, and what forms of intelligence are permitted. It could, and likely will, give certain groups considerable power.

From here, the rhetoric will only grow louder. Before long, you will be told what to think about AI risk, just as my colleague’s daughter was told that every time you use ChatGPT, it’s like dumping an entire glass of water down the sink. She’s now cutting back on AI usage based on a false argument she likely encountered through a paid TikTok advertising campaign.

The only defense is to start thinking independently right now. The headlines are about to multiply, and when they do, ask yourself: Who benefits from slowing down, and who gains if we do not? What do you think?

The power of that independent thought, that’s what we’re all about here. Stay sharp, and stay ambitious.

Best of the rest:

💼 OpenAI’s Project Mercury Targets Wall Street Grunt Work – Sam Altman’s startup has enlisted over 100 ex-bankers to train AI models that can build Excel financials and PowerPoint decks, signaling a push to automate the 80-hour weeks of junior analysts and make AI indispensable to business operations. – Bloomberg

🧠 Agents vs. Chatbots: Not the Same – Roko’s Basilisk breaks down why AI agents aren’t just smarter chatbots but autonomous systems that can plan, act, and learn, raising both potential and peril as they move from novelty to necessity in enterprise life. – Roko’s Basilisk

🍀 How to Become a Luck Engineer – George Mack turns luck into a skill, offering 13 sharp rules for manufacturing serendipity—from unscheduled calls and “luck razors” to deleting scoreboards and giving more than you take—reminding us that luck favors the proactive, not the passive. – High Agency

Charts that caught my eye:

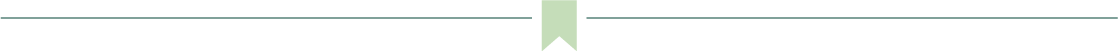

→ Why does it matter? Check out Ramp’s AI Index. 73% of US technology businesses have paid subscriptions to AI models, platforms, and tools. The most popular are OpenAI’s ChatGPT (36%) and Anthropic’s Claude (12%).

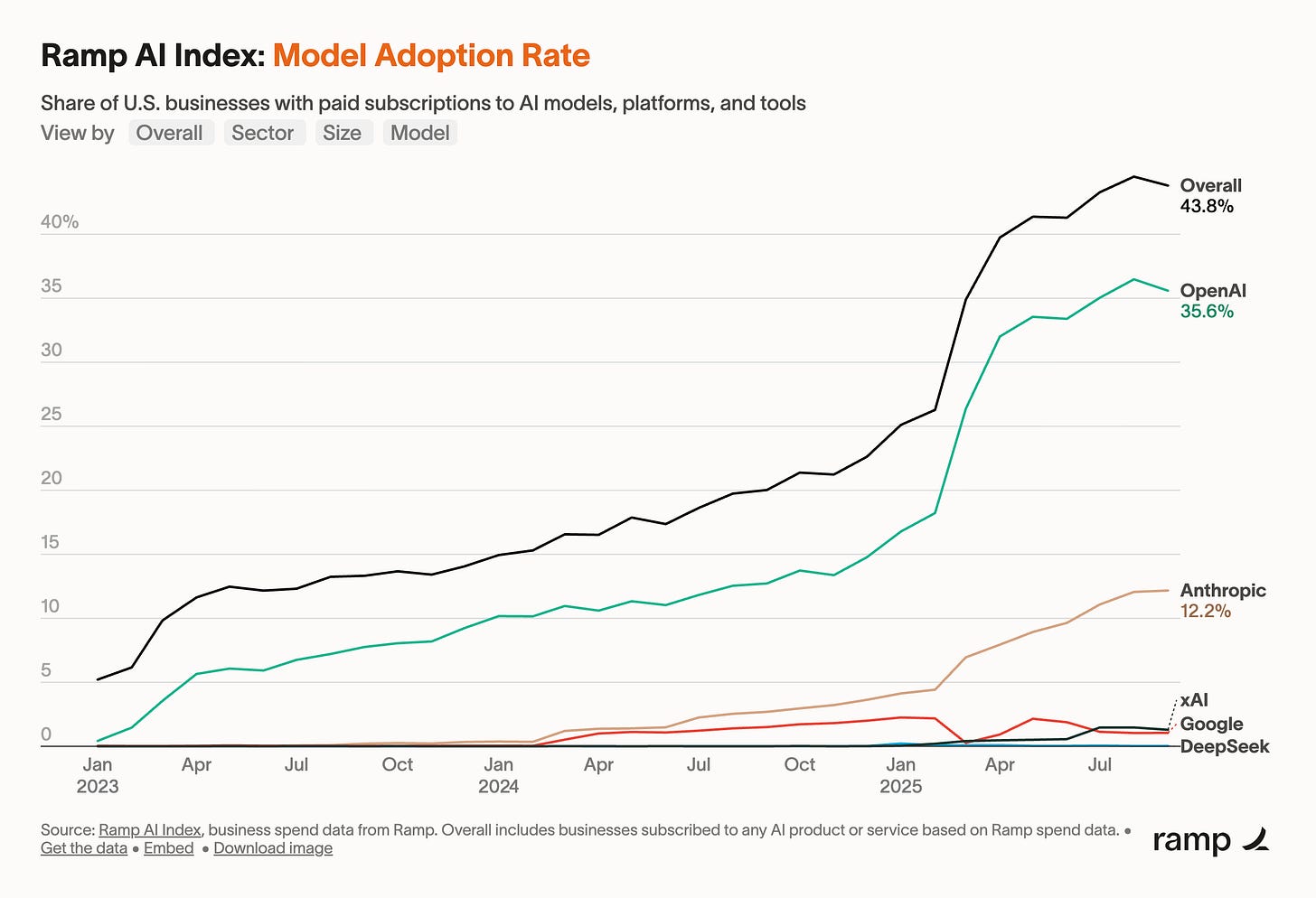

→ Why does it matter? A list of OpenAI’s largest customers sorted by tokens processed. Very interesting to see the mix of “startup” and “scaled” companies.

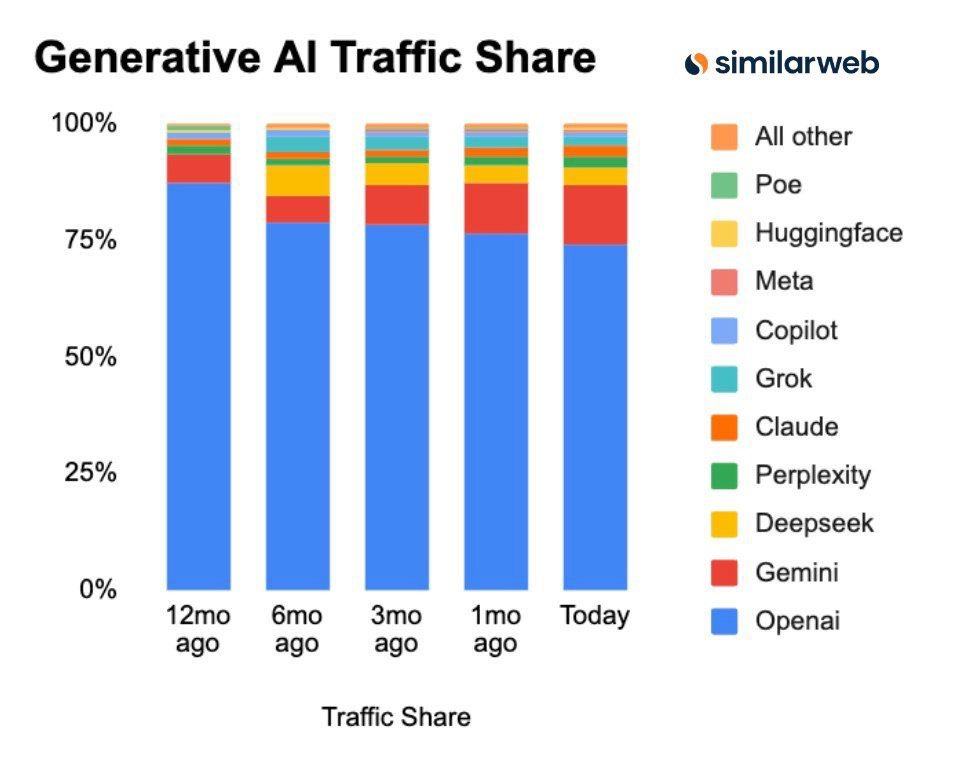

→ Why does it matter? OpenAI is still the clear leader, but it is losing share to Meta and Google as the market grows.

→ Why does it matter? a16z is out with its State of Crypto 2025 report, and it’s worth a read.

Tweets that stopped my scroll:

→ Why does it matter? Here is a copy of the Coatue October 2025 market update. As always, great stuff from Coatue. I particularly liked their research on bubbles.

→ Why does it matter? I enjoyed listening to Adam and Norm talk about their experience using the Apple Vision Pro for an extended period, including how they use it for work and travel. It truly is the future of computing. Apple refreshed the Vision Pro this past week with the new M5 chip, which signals it is committed to the platform.

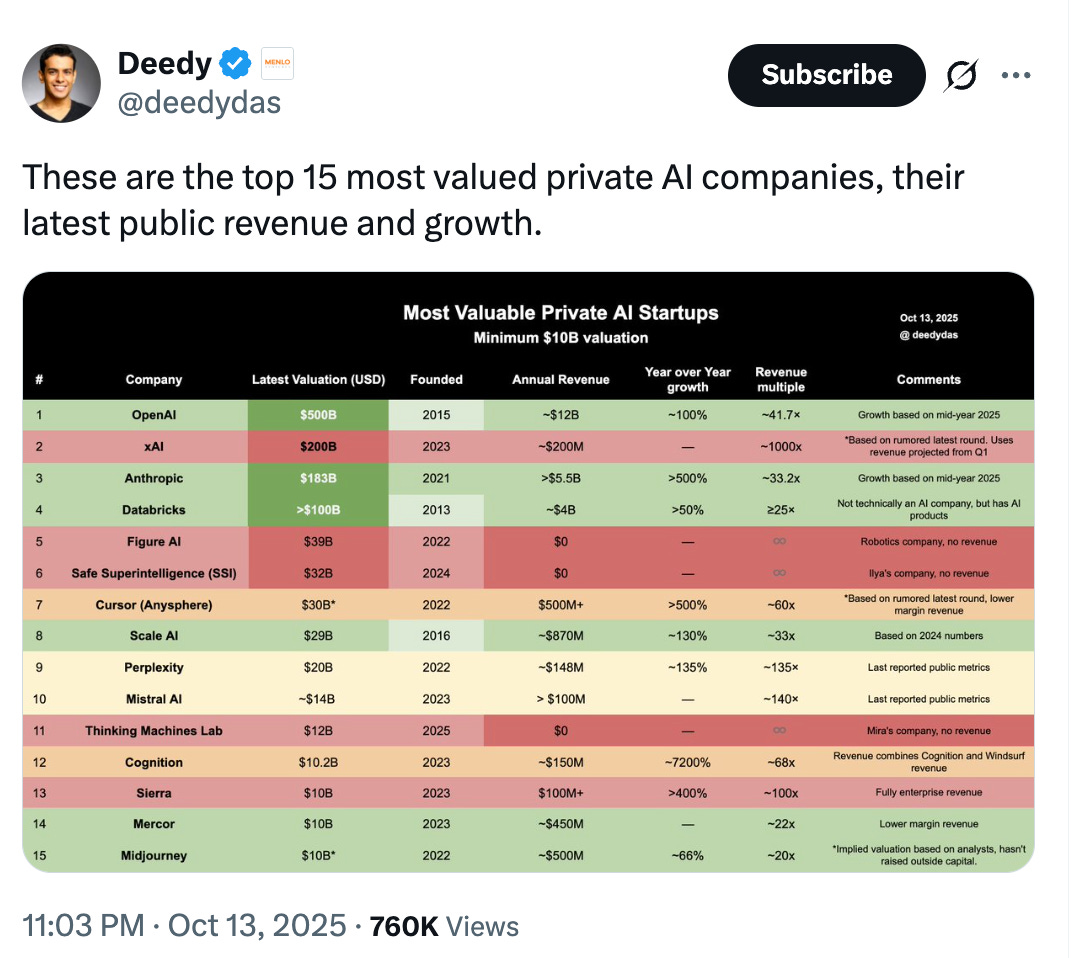

→ Why does it matter? Interesting to see all of this data on private AI startups in a single table.

Worth a watch or listen at 1x:

→ Why does it matter? Much of the internet was talking about Andrej Karpathy this weekend because he voiced what many researchers quietly believe. Today’s models look intelligent but are more like ghosts of knowledge than living minds. Karpathy compared them to animals such as zebras, which are born with built-in intelligence, while language models start from nothing and must grind through data to learn even simple tasks. They do not reason or generalize; they imitate. Until there is a real breakthrough in reinforcement learning, the part of AI meant to simulate experience and reflection, these systems will remain powerful imitators rather than true learners.

→ Why does it matter? A wide-ranging conversation with Thomas Laffont from Coatue. I particularly enjoyed the segment starting around 11:00, where Thomas and Jack discuss the future of Systems of Record, and the last few minutes, where Thomas talks about attention to detail.

→ Why does it matter? Sundheim’s interview shows how markets run on emotion, not logic, and managing billions only magnifies that pressure. In his view, public markets reward predictability, not vision. The real skill isn’t forecasting trends but staying calm when others can’t. Apparently this was also his first Guinness, fittingly with John Collison.

Quotes & eyewash:

→ Why does it matter? A reminder from David Senra, sharing five-star wisdom from Jensen Huang, founder and CEO of Nvidia.

The mission:

The Wall Street Journal once used “Read Ambitiously” as a slogan, but I took it as a personal challenge. Our mission is to give you a point of view in a noisy, changing world. To unpack big ideas that sharpen your edge and show why they matter. To fit ambition-sized insight into your busy life and channel the zeitgeist into the stories and signals that fuel your next move. Above all, we aim to give you power, the kind that comes from having the words, insight, and legitimacy to lead with confidence. Together, we read to grow, keep learning, and refine our lens to spot the best opportunities. As Jamie Dimon says, “Great leaders are readers.”

Disclaimer: This content is for informational purposes only and does not constitute financial, investment, or legal advice. Readers should do their own research and consult with a qualified professional before making any decisions.
