Reading Ambitiously 11-15-24

Is AI hitting a plateau? This week, we dive into AI scaling laws, Salesforce’s AI hiring spree, and the latest on cloud markets poised for a $4 trillion Service as Software shakeup.

The Wall Street Journal once used ‘Read Ambitiously’ as a slogan, but it became a challenge I took to heart. If that old slogan still speaks to you, this weekly curated newsletter is for you. Every week, I will summarize the most important and impactful headlines across technology, finance, AI and enterprise SaaS. Together, we can read with an intent to grow, always be learning, and refine our lens to spot the best opportunities. As Jamie Dimon says, “Great leaders are readers.”

Thanks to generative AI and our friends at Google NotebookLM, you can enjoy this week’s Reading Ambitiously as a podcast entirely generated by AI. If you haven’t experienced this technology yet, definitely give this a try!

In the news:

OpenAI Shifts Strategy as Rate of ‘GPT’ AI Improvements Slows (The Information)

"Some researchers at the company believe Orion isn’t reliably better than its predecessor in handling certain tasks, according to the employees. Orion performs better at language tasks but may not outperform previous models at tasks such as coding, according to an OpenAI employee. That could be a problem, as Orion may be more expensive for OpenAI to run in its data centers compared to other models it has recently released, one of those people said."

→ Why does it matter? There is growing concern that the rate of improvement in large language models (LLMs) is starting to plateau. This week, The Information reported that OpenAI has shifted its strategy in response.

Leopold Aschenbrenner’s seminal AI-focused paper, Situational Awareness: The Decade Ahead, released earlier this year, makes clear that AI researchers have long grappled with scaling LLMs. According to Aschenbrenner, a former OpenAI researcher, they typically rely on three strategies: increasing compute, enhancing algorithms, and ‘unhobbling’ models with better tools. Will these approaches work again? Could they deliver another order-of-magnitude improvement like we saw from GPT-3 to GPT-4? That’s the $64,000 question.

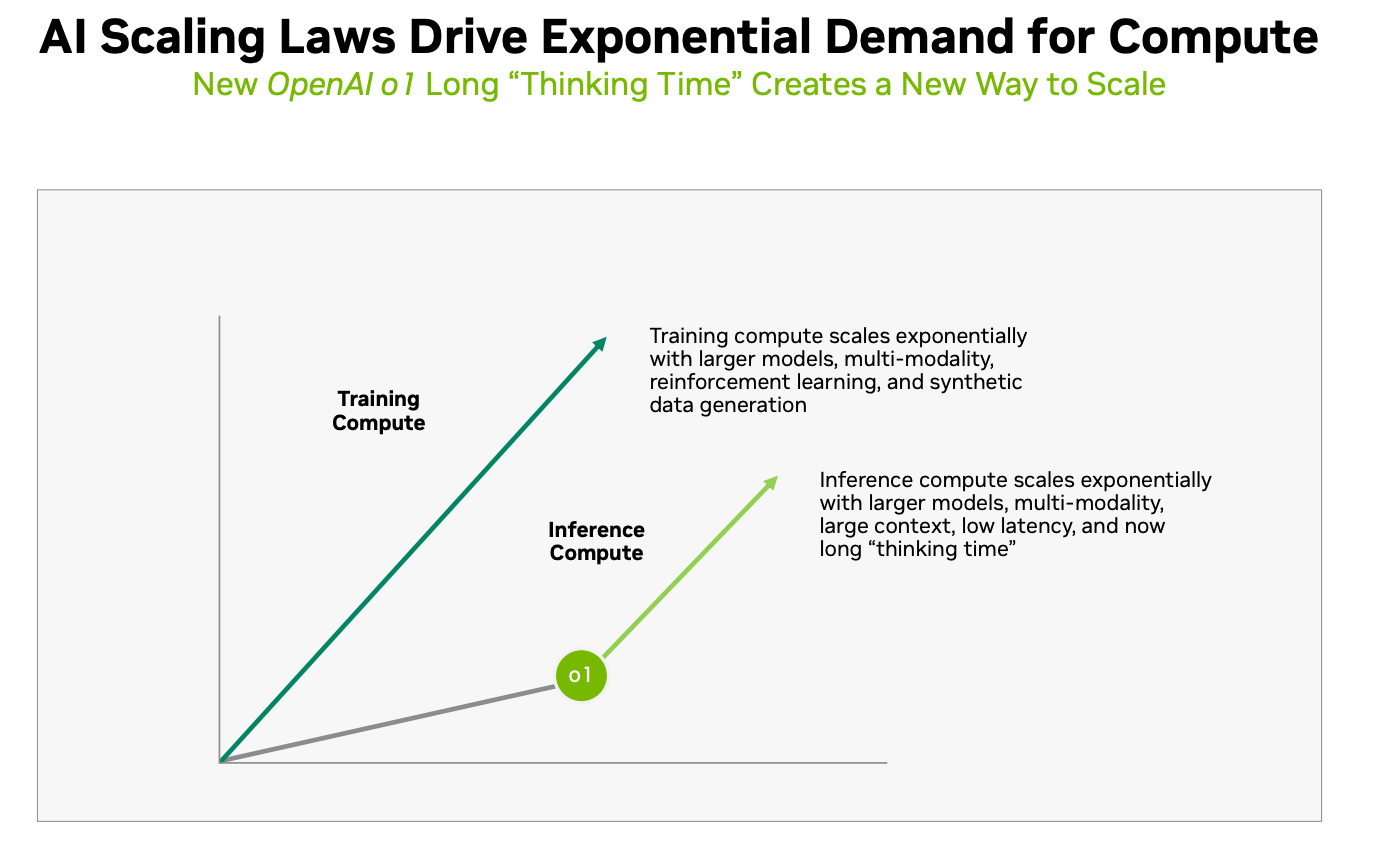

It’s worth listening to the two podcasts mentioned below, in which Sam Altman (CEO, OpenAI) and Dario Amodei (CEO, Anthropic) discuss these scaling laws. While Dario offers an optimistic view based on overcoming similar challenges in the past, Altman quickly points out that more recent models like o1 are much better at specific kinds of tasks like reasoning. Reasoning requires more inference, an important concept in GenAI that you’ve likely heard about. Inference is when a trained model uses learned patterns to generate responses or make predictions from new input data. Altman may be betting that more inference, through better reasoning models like o1, is the best way to deal with the plateau. It comes as no surprise that this slide showed up in Nvidia’s recent investor day presentations:

Interest in AI progress has never been higher. Are scaling concerns simply the result of more people following the story, with researchers set to overcome challenges as they have before? Or are we truly at an inflection point where AI scaling laws hamper progress? Stay tuned, ambitious readers!

Best of the rest:

💼 Salesforce to Hire 1,000 Salespeople for AI Agent Push - Bloomberg

🧠 Reimagination of Everything: How Intelligence-First Design and the Next Stack Will Unlock Human+AI Collaborative Reasoning - Insight Partners

💡 13 Harsh Truths About Success Nobody Told You - Sahil Bloom’s Newsletter

📱 Google’s Gemini AI Now Has Its Own iPhone App - The Verge

Charts that caught my eye:

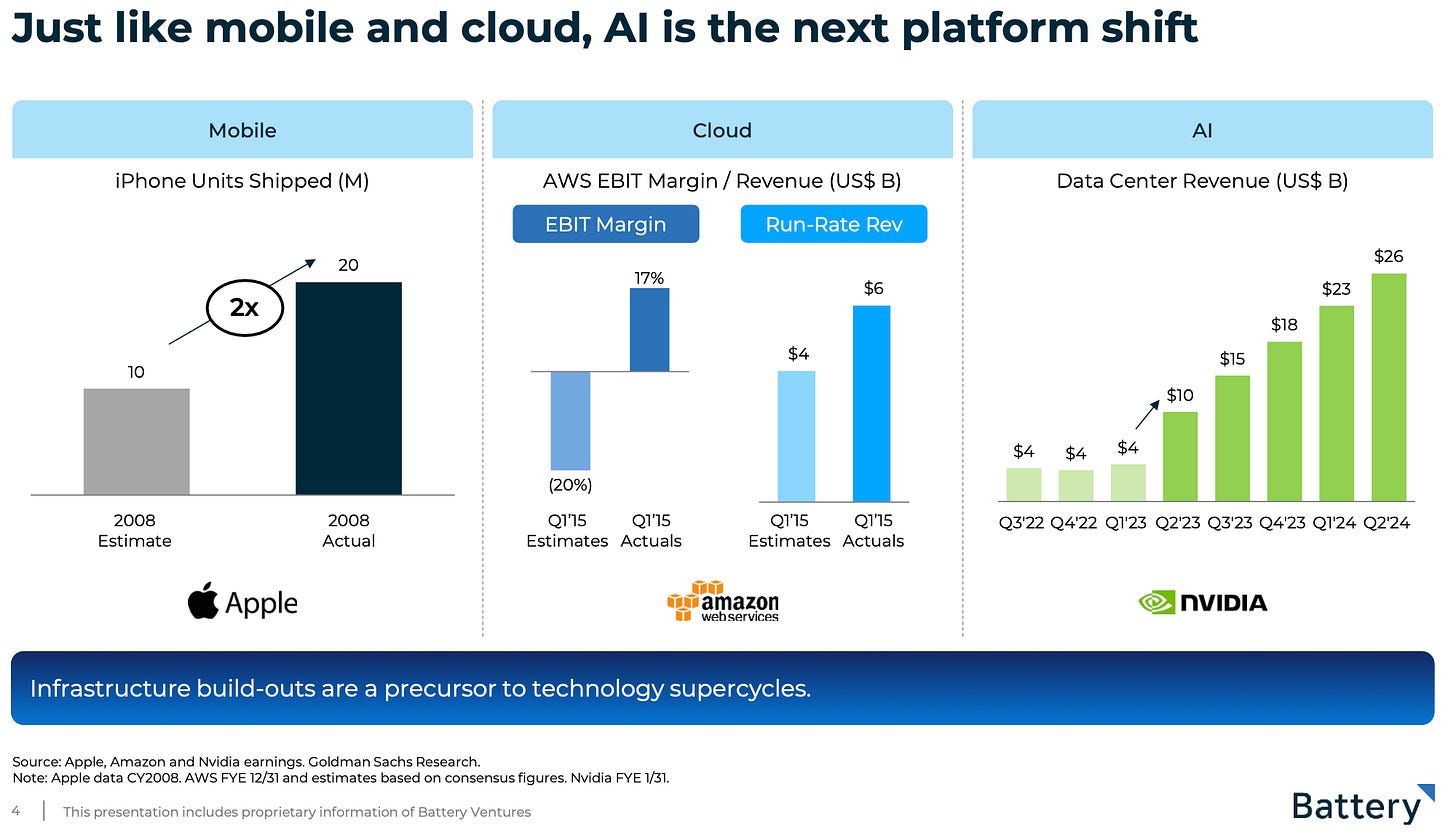

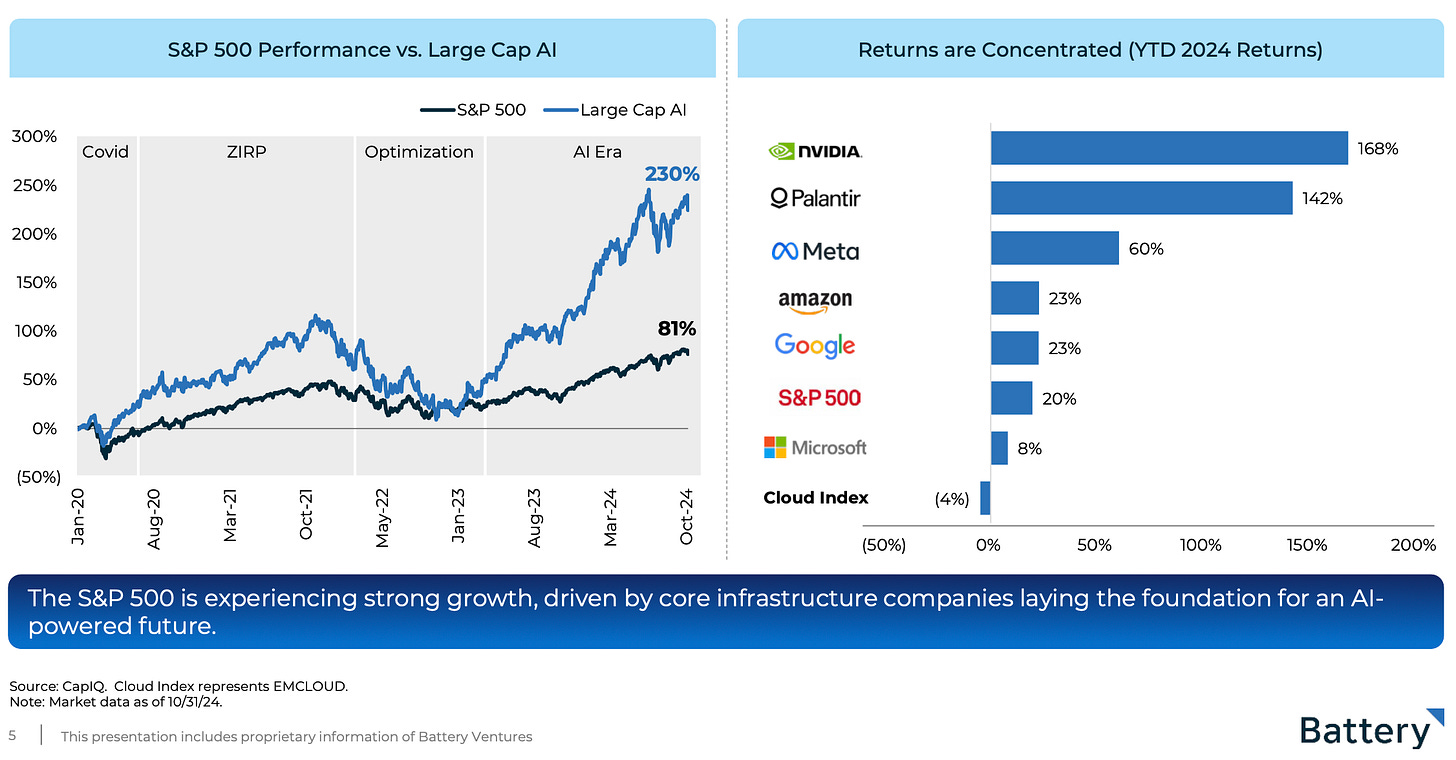

Inside the Coming AI Market “Supercycle” and How Cloud Startups Can Benefit: The Battery Ventures 2024 State of OpenCloud Report (Battery Ventures)

→ Why does it matter? I always look forward to Battery Ventures’ annual State of OpenCloud report. My key takeaway: There remains a lot of opportunity in a maturing cloud market. Simultaneously, a new AI supercycle is emerging.

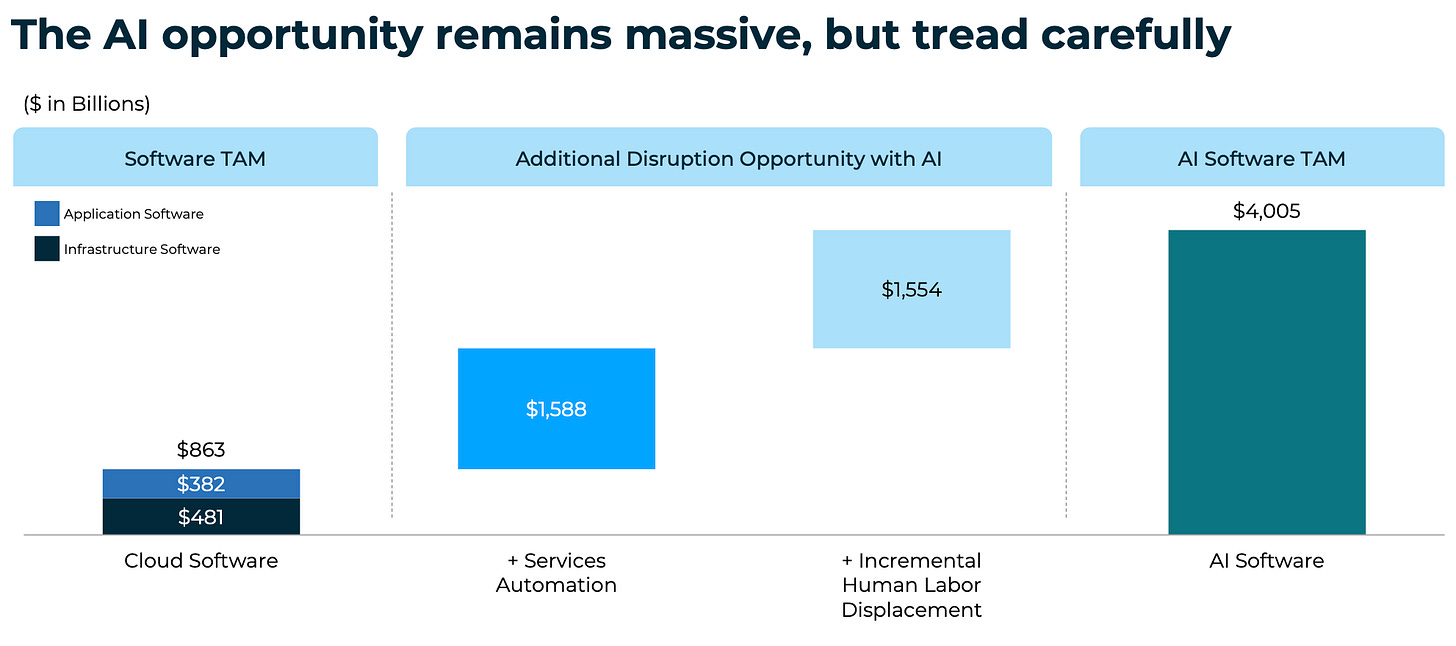

"We see growing capacity to handle a coming AI “supercycle”, a technology platform shift just as big as previous mobile and cloud-computing revolutions led by Apple and Amazon Web Services, respectively. Our estimate is that $4 trillion or more in market value is up for grabs as AI moves toward disrupting software, services and certain labor markets in the coming years."

Palantir Shares Surge 20% On Revenue Outlook (CNBC)

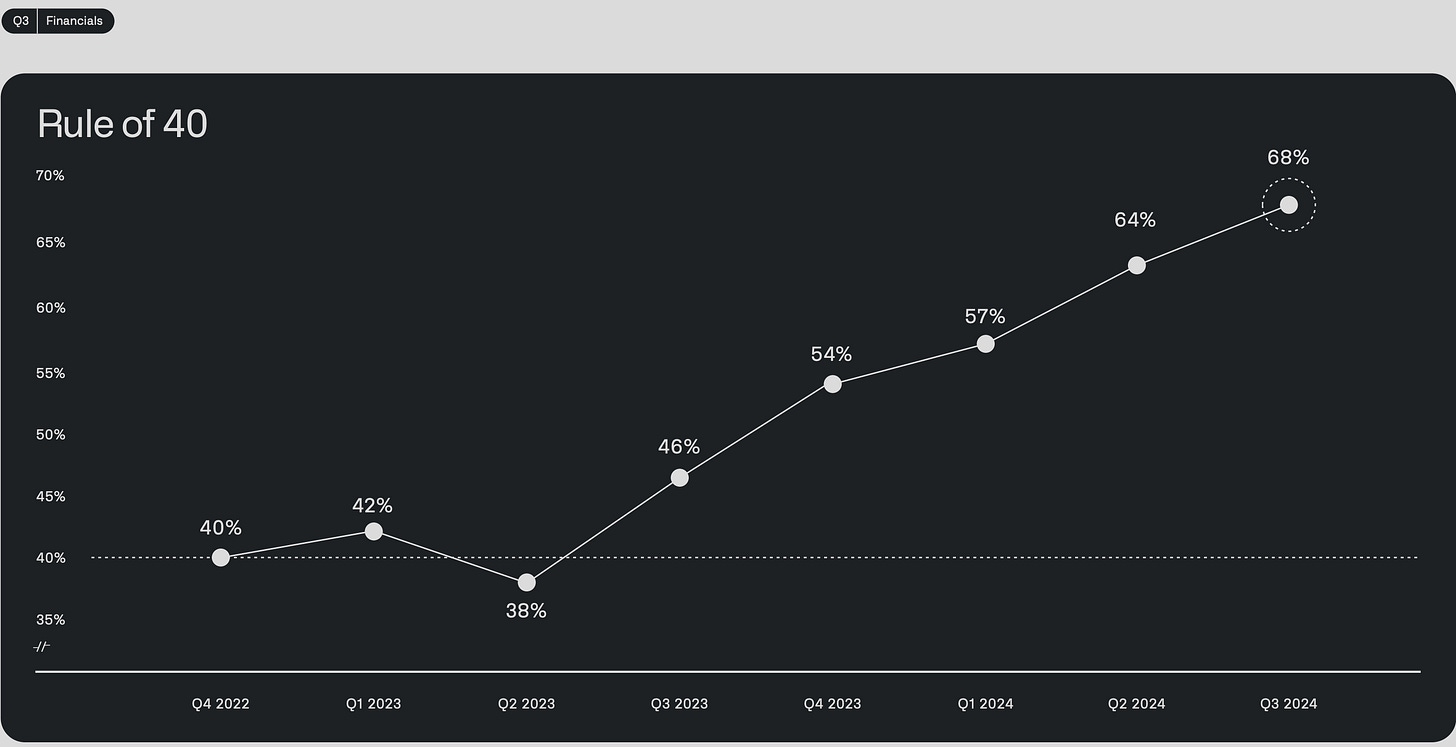

→ Why does it matter? Palantir ($PLTR) is on an AI-led growth tear. The Rule of 40 [Revenue Growth Rate (%) + Profit Margin (%) should be 40% or higher] is a financial metric used in the Software-as-a-Service (SaaS) industry to assess a company’s health by balancing its growth and profitability. Check out Palantir’s Rule of 40. 🤯
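For readers who like to see the arithmetic, here is a minimal Python sketch of the Rule of 40 check described above. The function names and the two example companies are hypothetical and made up for illustration; they are not Palantir's reported figures. Analysts also differ on which profit margin to plug in (free cash flow margin and operating margin are both common), so treat that input as an assumption.

```python
def rule_of_40_score(revenue_growth_pct: float, profit_margin_pct: float) -> float:
    """Return the Rule of 40 score: revenue growth (%) plus profit margin (%)."""
    return revenue_growth_pct + profit_margin_pct


def passes_rule_of_40(revenue_growth_pct: float,
                      profit_margin_pct: float,
                      threshold: float = 40.0) -> bool:
    """Return True when the combined score meets or exceeds the threshold."""
    return rule_of_40_score(revenue_growth_pct, profit_margin_pct) >= threshold


# Hypothetical companies (illustrative numbers only, not real data):
# (revenue growth %, profit margin %)
examples = {
    "FastGrower": (60.0, -15.0),   # high growth, still unprofitable
    "SteadyEarner": (12.0, 20.0),  # slow growth, solid margins
}

for name, (growth, margin) in examples.items():
    score = rule_of_40_score(growth, margin)
    verdict = "passes" if passes_rule_of_40(growth, margin) else "falls short"
    print(f"{name}: {growth:.0f}% + {margin:.0f}% = {score:.0f} ({verdict})")
```

The point of the metric is the trade-off: a company can burn cash if it grows fast enough, or grow slowly if it is profitable enough, and still clear the bar.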

Here is Alex Karp, CEO, on their last earnings call:

"We absolutely eviscerated this quarter, driven by unrelenting AI demand that won’t slow down. This is a U.S.-driven AI revolution that has taken full hold. The world will be divided between AI haves and have-nots. At Palantir, we plan to power the winners."

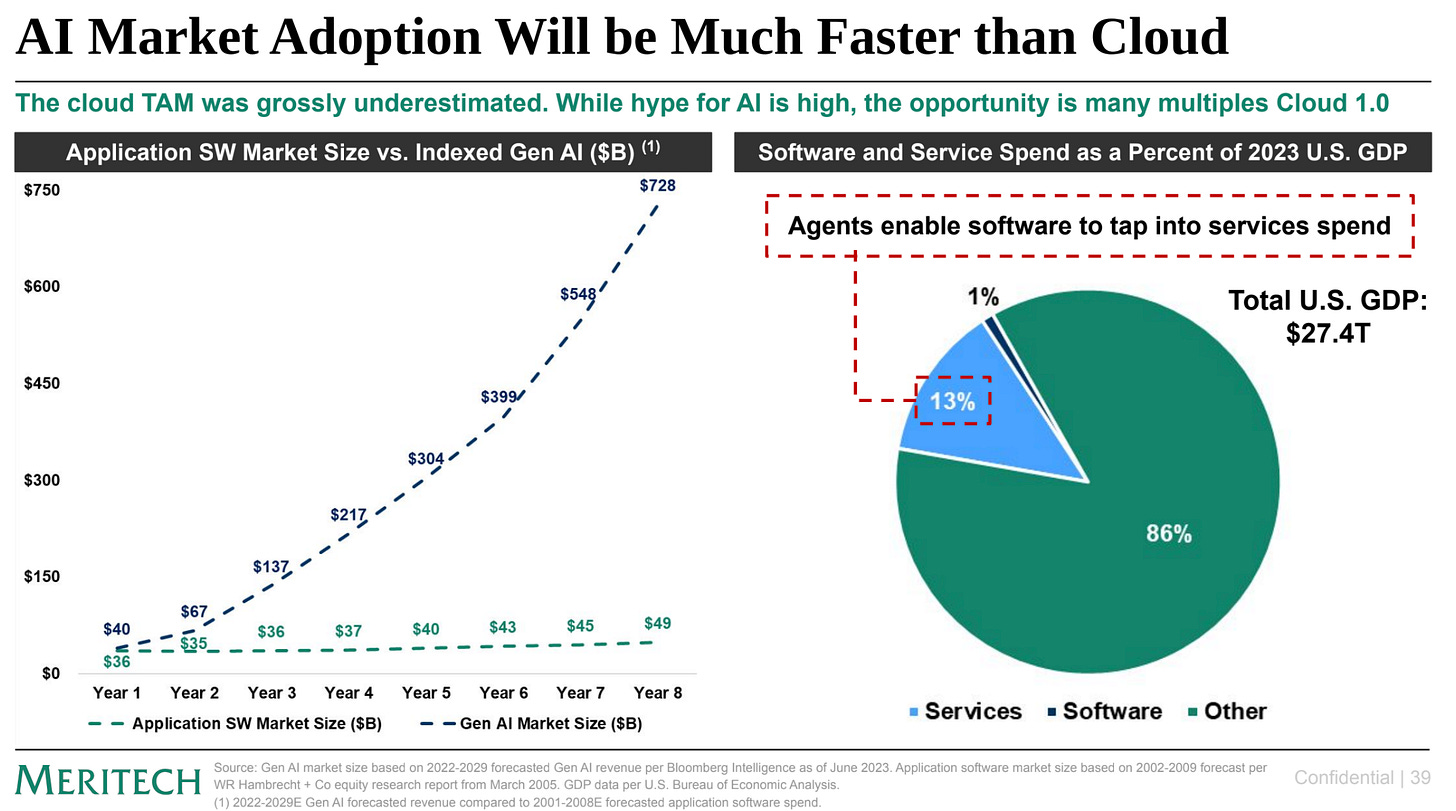

2024 Meritech Annual Meeting Market Insights (Meritech Capital)

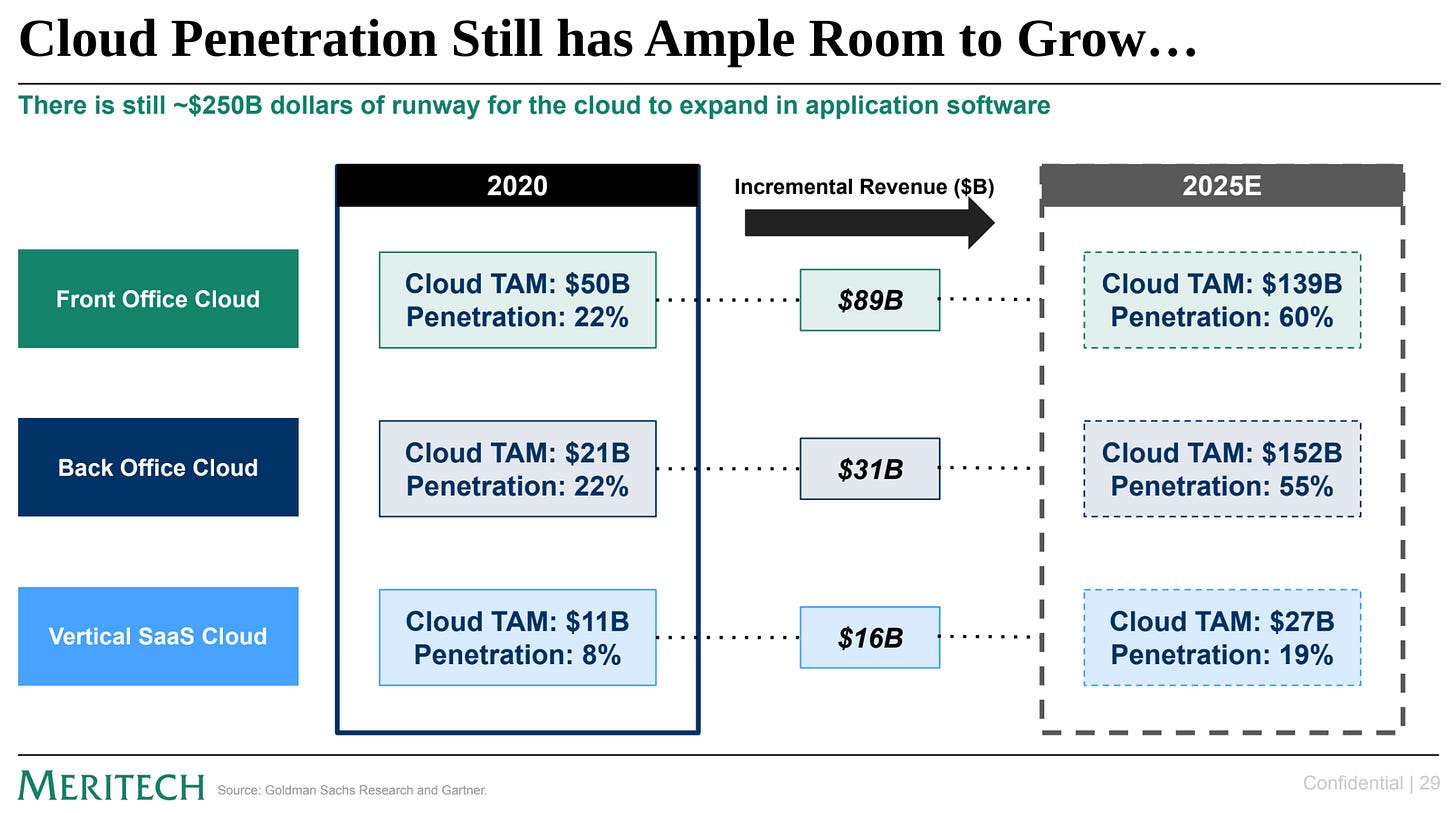

→ Why does it matter? I like to say there’s still plenty left to ‘cloudify’—a term we use to describe moving workloads to the cloud. The Meritech team refers to this as ‘Cloud 1.0’ and estimates that vertical SaaS opportunities, like those pursued by Ridgeline, have only tapped into 19% of their total market potential:

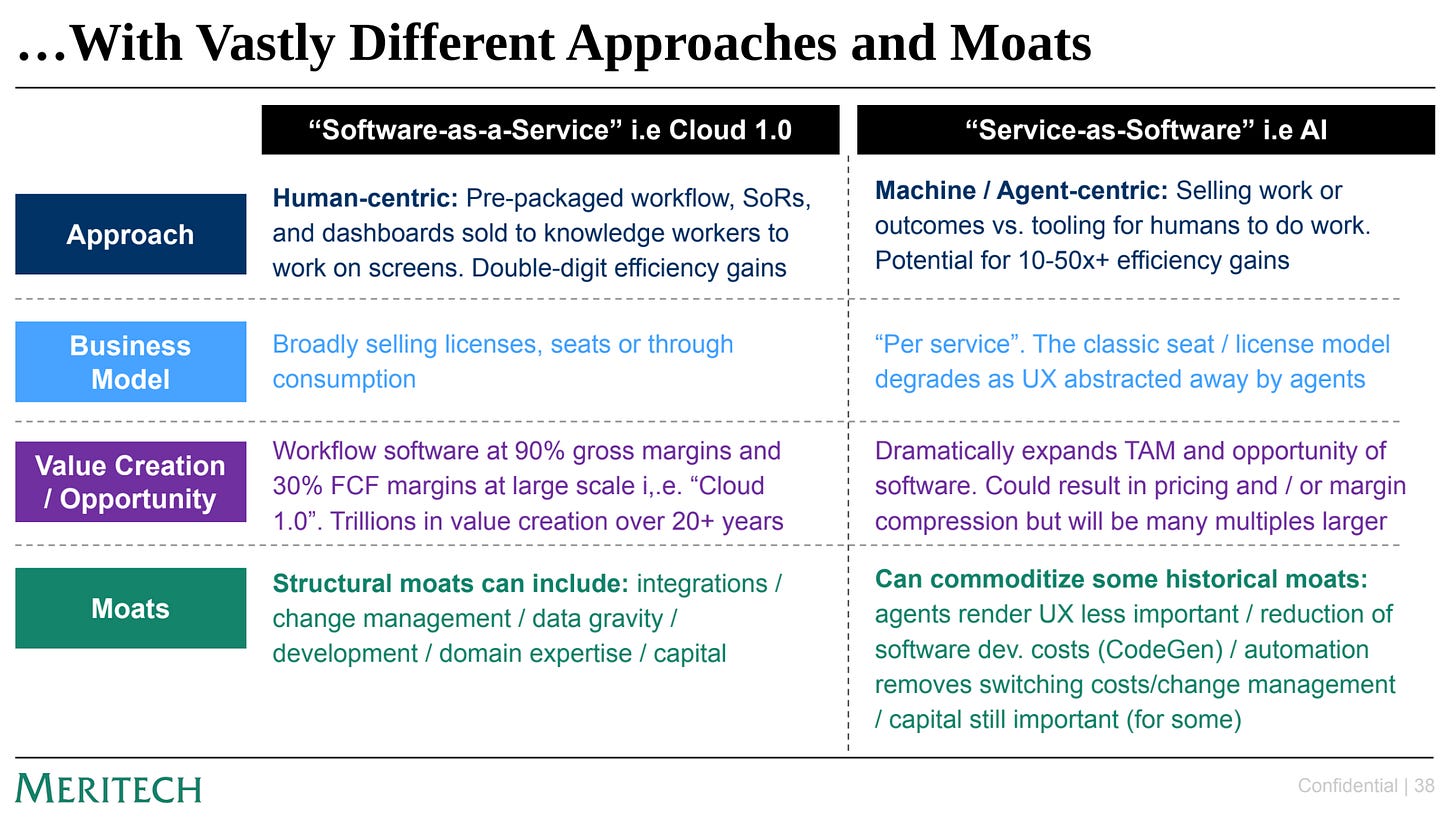

At the same time, we’re moving toward a new ‘Service as Software’ model. The most valuable enterprise technology companies will excel by driving both cloud adoption and seizing the AI-led opportunities in this new model.

Tweets that stopped my scroll:

→ Why does it matter? New research on using a graph neural network (GNN), not an LLM, in scientific discovery. The results? The GNN enables scientists to discover 44% more new materials, file 39% more patents, and introduce 17% more product prototypes, all in the space of 7 to 24 months.

Worth a watch or listen at 1x:

→ Why does it matter? YC President and CEO Garry Tan sits down with Sam Altman to discuss the OpenAI origin story, scaling laws, and navigating the AI paradigm shift.

→ Why does it matter? This is one you may need to break up into multiple parts as Dario goes multiple hours with Lex Fridman. It’s no surprise that Dario immediately starts talking about scaling laws!

Quotes & eyewash:

"There’s absolutely nothing you can control other than showing up and doing your job." - Tituss Burgess

For Sale! This Lake Tahoe “Ranch” 1949 Glenbrook Inn Road - Glenbrook, NV. Asking price? $188,000,000. 👀