Reading Ambitiously 11-21-25

AI regulation, Em-dashes, Bezos is back w/ $6B+, SaaS ARR per employee, Gemini 3, Google TPUs, Harada method, SaaS's fate, AI thoughts from Gavin Baker, Anti AI = live, Buffett's thanksgiving note


The big idea: Stories, rules, and power. AI regulation.

Reading time: 7 minutes

George Stigler, who won the Nobel Prize in part for his work on regulation, put it this way: “as a rule, regulation is acquired by the industry and is designed and operated primarily for its benefit.”

In 2009, the HITECH Act was passed as part of the broader economic stimulus package, allocating tens of billions of dollars to encourage doctors and hospitals to adopt electronic health records. Each eligible physician could receive tens of thousands of dollars for buying certified EHR software, plus additional payments for proving “meaningful use.”

Epic Systems founder Judy Faulkner sat on the federal health IT policy committee, which helped shape the standards. The threshold for “meaningful use” ultimately resembled Epic’s feature set. Smaller rivals that tried to offer simpler or lighter-weight products struggled to clear certification. A few that cut corners were hit with record fines.

On paper, HITECH was intended to modernize healthcare and improve patient outcomes. In practice, it locked in one vendor’s architecture, raised the bar for new entrants, and turned compliance into a moat.

I know this because at Ridgeline, we seriously considered building a competitor to Epic. When we studied that market, we reached the same conclusion: once the government had blessed a feature checklist and fined those without it, the window for new competition had largely closed.

A well-intentioned effort. A complex bill, a new regulatory framework, a new agency. Then, a few years later, a market more concentrated and less competitive than ever before.

Power gradually accrued to those who wrote the rules. If you control the narrative, you gain the resources to influence the rules. If you influence the rules, you tend to shape them in a way that helps you stay in power. This dynamic is called regulatory capture, and it sits at the center of the work that won Stigler his Nobel Prize.

For a long time, I assumed the next big regulatory wave in the United States would center on data privacy and controlling big tech. However, I am increasingly convinced that it will focus on artificial intelligence, and that next year the rhetoric on this topic will become very loud. The smartest players already know this and are working to control the narrative, because those who control the narrative will be in the best position to write the rules. And those who write the rules will give themselves every chance to stay in power.

Fear sells

If you are trying to control the narrative, fear is an effective way to do it. In too many conversations about AI, I am struck by how often the default starting point is fear:

Mass job loss. AI could eliminate half of all entry-level white-collar jobs and push unemployment rates into the 10-20% range within five years.

Terminator. Reports of AI models that sabotage their own shutdown procedures, and headlines about AI that evades humans and refuses to turn itself off.

A general sense that something fast and opaque is being deployed into every part of life before anyone knows if it is safe.

In a recent interview with CBS News, Dario Amodei, co-founder & CEO of Anthropic, warned that AI companies could end up like “the cigarette companies, or the opioid companies,” firms that knew their products could cause significant harm and “did not talk about them, and certainly did not prevent them.” He argued that AI systems are on track to become “smarter than most or all humans in most or all ways.”

Anthropic also recently disclosed a case that sounds like something out of a Mission: Impossible script.

A Chinese state-sponsored group used Anthropic’s AI to carry out a sophisticated cyberattack. The targets ranged from tech and financial firms to chemical manufacturers and government agencies. Anthropic wrote that its own product carried out between 80 and 90 percent of the operations autonomously.

U.S. Senator Chris Murphy, after reading the story, warned that AI will “destroy us” if we do not make regulation a national priority.

There is no doubt that AI changes the game in cybersecurity. However, it is also hard to ignore how neatly this sequence lines up: the CBS interview, a dramatic Chinese cyberattack, and an emerging policy consensus that “something must be done.” Taken together, they form a powerful bid to shape the public’s perception of AI. Shape the narrative, influence the rules, protect your power.

Anthropic, OpenAI, Nvidia, and their peers have every reason to protect that power. They are waging a war against a much cheaper and potentially more secure alternative to their offerings: open source.

When entrepreneurs walk into Andreessen Horowitz to pitch an AI startup, partner Martin Casado now estimates there is “an 80 percent chance” they are using a Chinese open-weight model.

Meanwhile, the valuations of US companies built on closed models depend on the idea that these systems are scarce, hard to replicate, and safer in the hands of professionals. The rise of open alternatives, many developed in China, threatens those economics.

With business models threatened by open source, it is not a long putt from fear to regulation written in the image of the incumbents. Call it safety, argue that only a handful of tightly controlled frontier labs can be trusted, and you quietly turn a competitive threat into a compliance advantage. That is regulatory capture in real time: fear sets the story, the story justifies the rules, and the rules lock in the power of the firms that helped write them.

Play for the upside

Too many of my AI conversations start with fear. What I want for ambitious readers is an awareness of the agenda behind these headlines and, more importantly, a stubborn optimism about the future that lies before us.

There is no doubt that we need to figure out how to use this technology safely. That work is serious and overdue. It will involve technical safeguards, institutional resilience, and some complex tradeoffs.

At the same time, it is worth remembering that we are still in the early stages of this technological revolution. The same tools that can concentrate power can also widen access, reduce friction, and create new forms of leverage for small teams and individual builders.

So, you owe it to yourself to think independently and develop an original perspective on AI. To notice when headlines are being used to funnel you into a prewritten position. To keep a clear head about the risks while playing for the upside rather than overprotecting the downside.

Best of the rest:

✍️ OpenAI says it’s fixed ChatGPT’s em dash problem – More granular style control weakens simplistic “AI tell” heuristics that flag punctuation, giving writers clearer control over tone – TechCrunch

🧠 He’s Been Right About AI for 40 Years. Now He Thinks Everyone Is Wrong. – AI pioneer Yann LeCun is openly betting that today’s large language models are a dead end and that “world models” will power the next wave of breakthroughs, a sharp strategic divergence that could reshape how Big Tech and researchers chase superintelligence. – The Wall Street Journal

🤖 A multibillion-dollar A.I. start-up – Jeff Bezos is stepping back into an operating role and betting $6.2 billion on Project Prometheus, an industrial A.I. startup aimed at engineering and manufacturing, signaling that the next phase of the A.I. race is about real-world leverage, not just chatbots. – The New York Times

Charts that caught my eye:

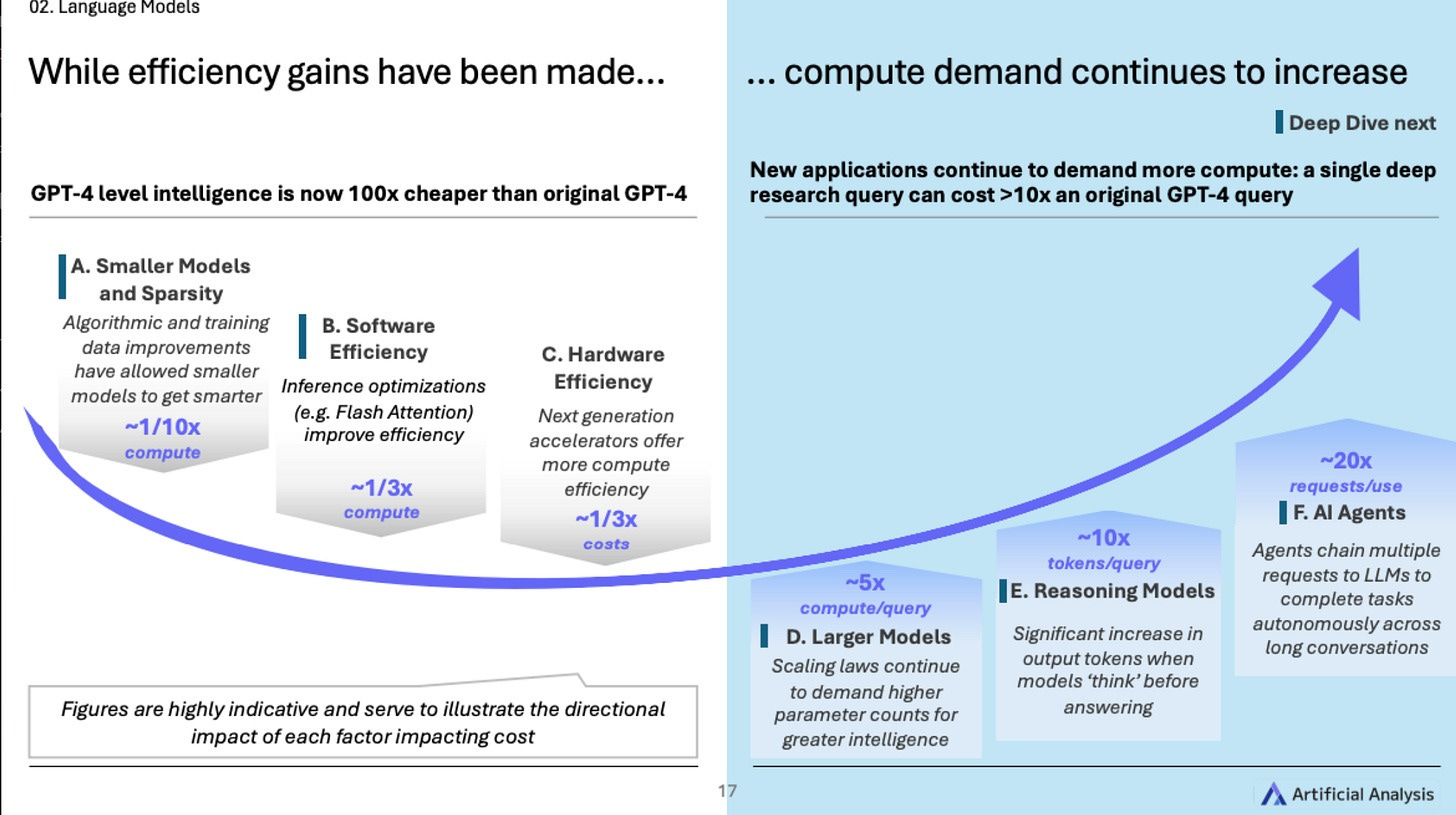

→ Why does it matter? GPT-4-level intelligence is now 100 times cheaper than the original GPT-4. 🤯

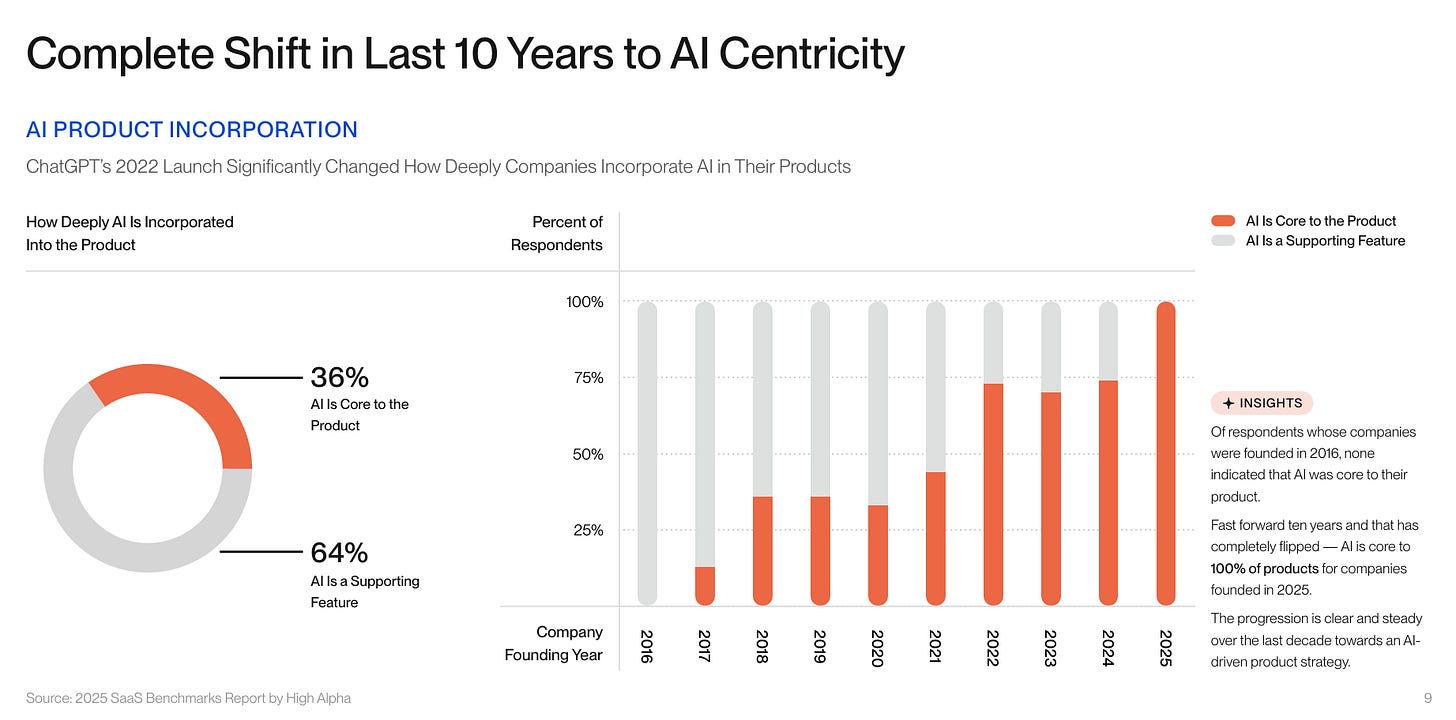

→ Why does it matter? It’s incredible how central AI has become to every product in such a short amount of time. Just three years ago, we were asking AI to write poems about otters.

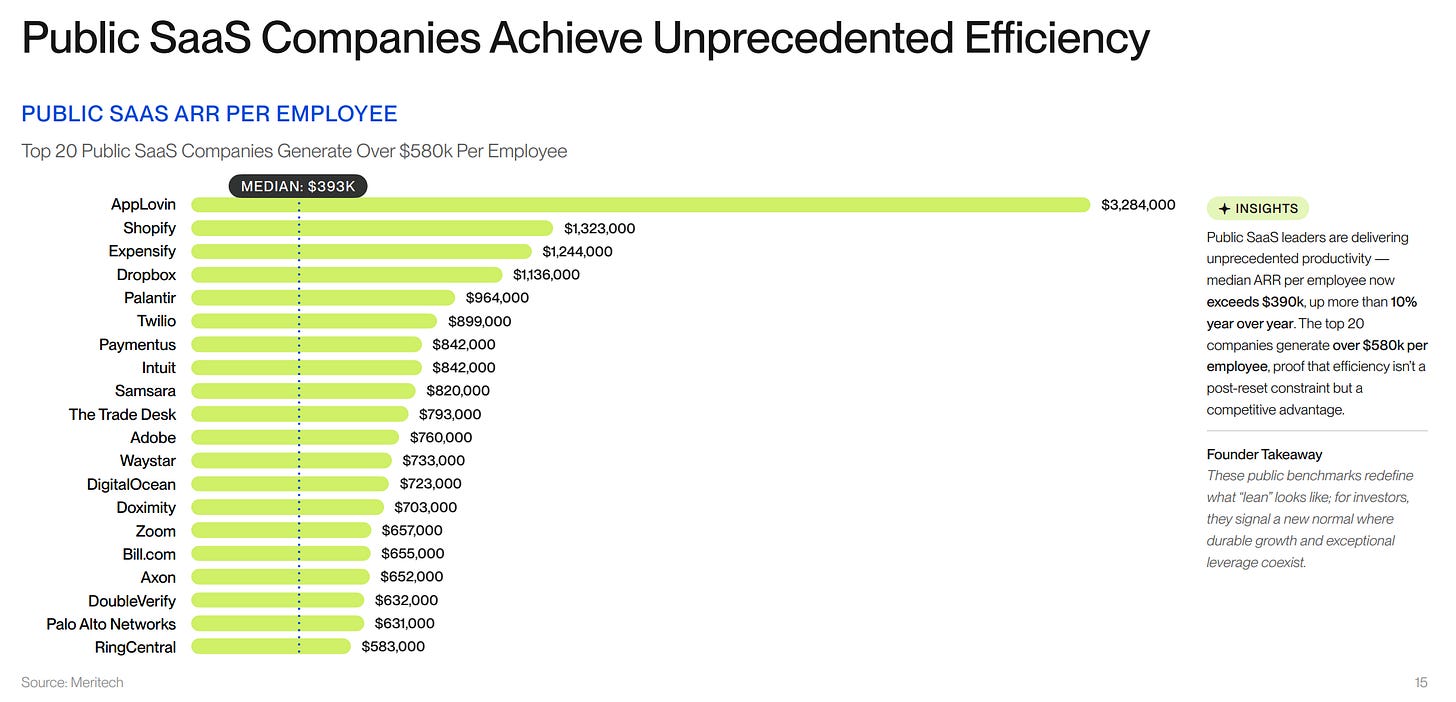

→ Why does it matter? Public SaaS companies have gotten very fit, generating more ARR per employee than ever.

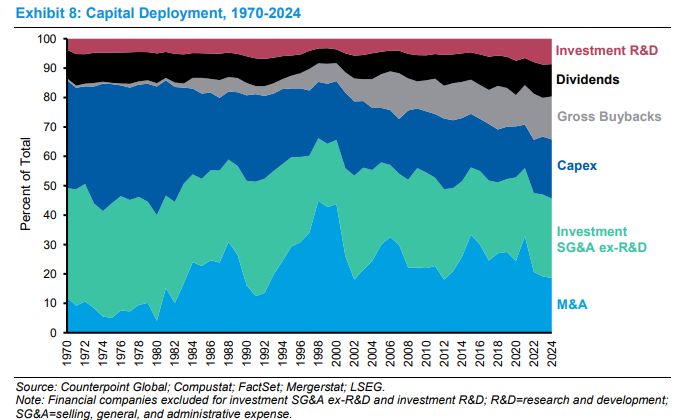

→ Why does it matter? Over the past five decades, U.S. public companies have reshaped how they deploy capital, shifting from heavy investment in physical assets to intangibles such as software, brands, and talent. Michael Mauboussin’s Morgan Stanley report details the shift: capital expenditures fell from 40 percent of total spending in the 1970s to below 15 percent by 2024, while intangibles rose above 40 percent. Share buybacks, absent before the 1980s, now account for about 25 percent; dividends have held steady at 20 to 30 percent. The result is a knowledge-driven economy where value comes from building capabilities rather than owning factories. That raises the bar for managers, who must judge the returns on each incremental investment even when their incentives may favor short-term gains.

Tweets that stopped my scroll:

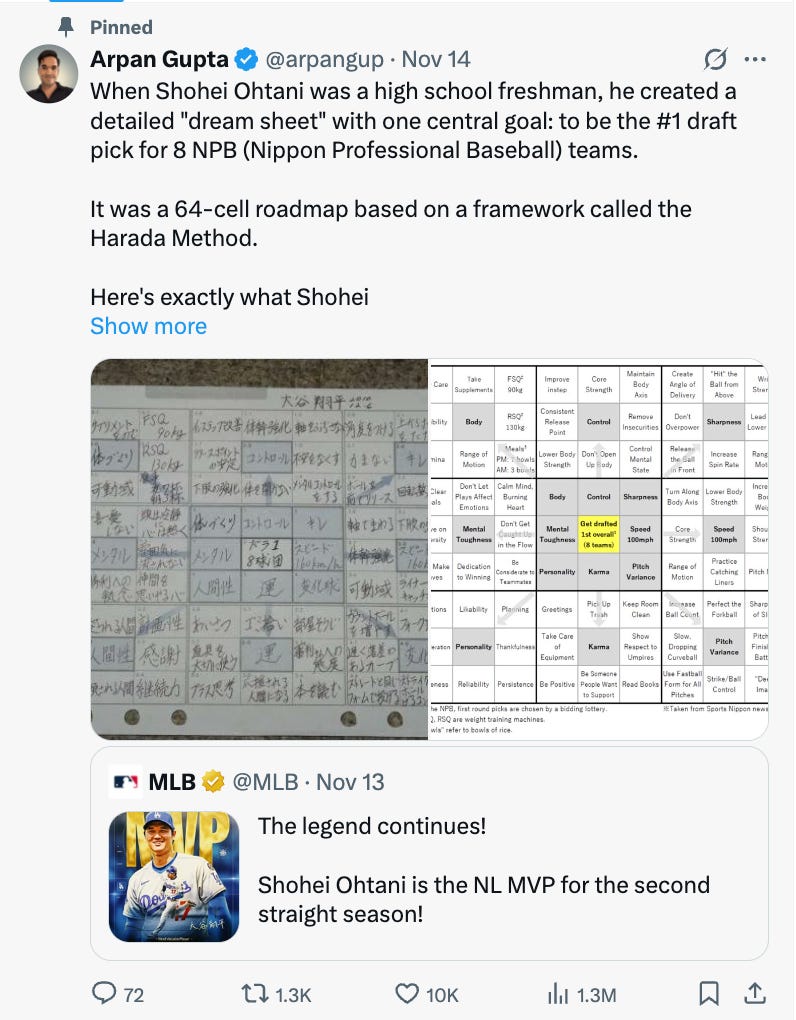

→ Why does it matter? Shohei Ohtani’s high school “dream sheet” used the Harada Method’s 9x9 grid (one central goal, eight supporting goals, and eight actions for each) to break his pro baseball goal into 64 habits across physical, mental, and karmic areas, like respecting umpires and picking up trash for “good luck.” It shows how holistic, habit-focused planning can drive elite success, as seen in his back-to-back NL MVP wins.

→ Why does it matter? Larry Fink is the Chairman & CEO of BlackRock. His message: “Tokenize every financial asset!”

→ Why does it matter? SaaS is apparently dead, again. Former Reddit CEO Yishan Wong warns that most AI application startups face imminent obsolescence as foundation models from companies such as OpenAI absorb app features on 9- to 12-month cycles. With so little time to scale, he argues, founders should aim for positive cash flow within 12 to 18 months or an acquisition, because AI lacks the stability of past tech waves. According to Wong, true moats exist only in niches with proprietary physical data, such as robotics or biotech.

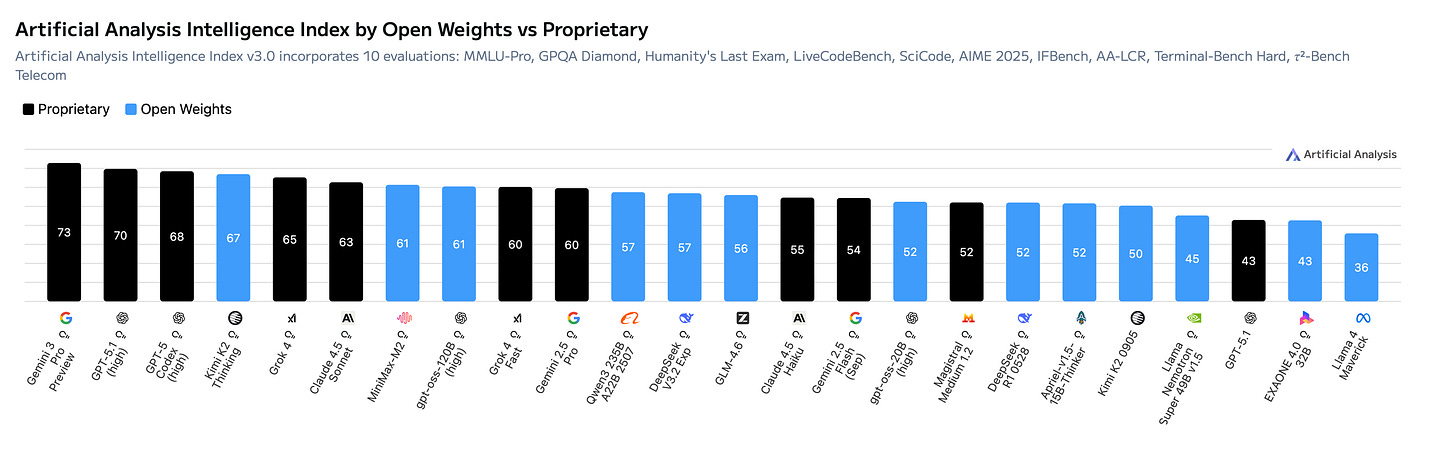

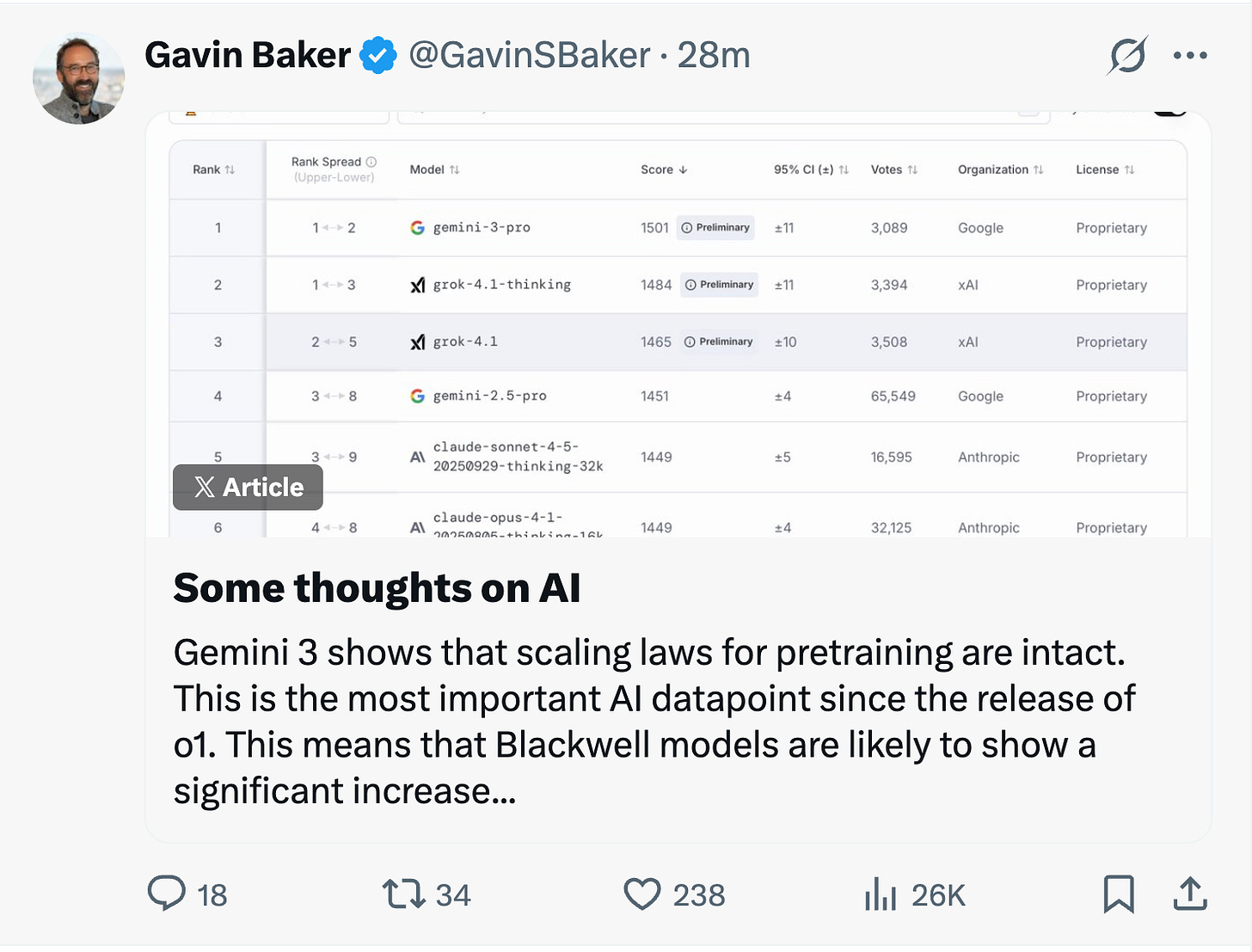

→ Why does it matter? Wild that Gemini 3 was trained on Google’s own TPUs, without any Nvidia GPUs. Gemini 3 is powerful! Check out Sundar Pichai’s examples.

→ Why does it matter? Gavin Baker is one of the best at the intersection of AI and markets. Don’t miss his latest.

Worth a watch or listen at 1x:

→ Why does it matter? This may be the best Satya Nadella interview I have heard this year. It is candid and unafraid of hard questions. What struck me most was his range, from the way Microsoft architects its data centers to the decisions steering its AI strategy.

→ Why does it matter? Ari Emanuel is the inspiration for Ari Gold on Entourage. This one is worth a watch. His point about the value of “live” experiences in a world of AI-generated slop makes a ton of sense.

Quotes & eyewash:

A Few Final Thoughts

One perhaps self-serving observation. I’m happy to say I feel better about the second half of my life than the first. My advice: Don’t beat yourself up over past mistakes – learn at least a little from them and move on. It is never too late to improve. Get the right heroes and copy them. You can start with Tom Murphy; he was the best.

Remember Alfred Nobel, later of Nobel Prize fame, who – reportedly – read his own obituary that was mistakenly printed when his brother died and a newspaper got mixed up. He was horrified at what he read and realized he should change his behavior. Don’t count on a newsroom mix-up: Decide what you would like your obituary to say and live the life to deserve it.

Greatness does not come about through accumulating great amounts of money, great amounts of publicity or great power in government. When you help someone in any of thousands of ways, you help the world. Kindness is costless but also priceless. Whether you are religious or not, it’s hard to beat The Golden Rule as a guide to behavior.

I write this as one who has been thoughtless countless times and made many mistakes but also became very lucky in learning from some wonderful friends how to behave better (still a long way from perfect, however). Keep in mind that the cleaning lady is as much a human being as the Chairman.

Warren Buffett - Thanksgiving Note 11-10-25

The mission:

The Wall Street Journal once used “Read Ambitiously” as a slogan, but I took it as a personal challenge. Our mission is to give you a point of view in a noisy, changing world. To unpack big ideas that sharpen your edge and show why they matter. To fit ambition-sized insight into your busy life and channel the zeitgeist into the stories and signals that fuel your next move. Above all, we aim to give you power, the kind that comes from having the words, insight, and legitimacy to lead with confidence. Together, we read to grow, keep learning, and refine our lens to spot the best opportunities. As Jamie Dimon says, “Great leaders are readers.”

Disclaimer: This content is for informational purposes only and does not constitute financial, investment, or legal advice. Readers should do their own research and consult with a qualified professional before making any decisions.