Reading Ambitiously 1.23.26 - Context is king, protect it at all costs

The context window is the scarce resource inside every LLM. Recent innovations are just different ways of spending that budget wisely.


The big idea: Context is king, protect it at all costs

Reading time: 6 minutes

Every AI demo you have ever seen has the same hidden dependency: a box of text that the underlying LLM can hold in its “mind” at once.

That box is the context window.

Think of it as the model’s working memory at runtime. Models learn enormous amounts during training, but at runtime they can only condition on what fits within the current window: the system prompt, your message, tool outputs, documents, and custom instructions. All of it competes for the same space. If it’s not in the window, it’s not reliably in play.
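To make the "everything competes for the same space" point concrete, here is a minimal sketch of a budget report. It is an illustration, not any vendor's API: `ContextBudget`, `estimate_tokens`, and the 4-characters-per-token ratio are all assumptions (a rough rule of thumb for English text), and a real system would use the model's own tokenizer.

```python
from dataclasses import dataclass

# Assumption: roughly 4 characters per token for English text.
# Real tokenizers differ by model; this is only a rule of thumb.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Very rough token estimate; swap in a real tokenizer in practice."""
    if not text:
        return 0
    return max(1, len(text) // CHARS_PER_TOKEN)


@dataclass
class ContextBudget:
    limit: int  # hypothetical window size, in tokens

    def report(self, parts: dict[str, str]) -> dict[str, int]:
        """Show how much of the window each part consumes."""
        usage = {name: estimate_tokens(text) for name, text in parts.items()}
        total = sum(usage.values())
        usage["total"] = total
        usage["remaining"] = self.limit - total
        return usage


if __name__ == "__main__":
    budget = ContextBudget(limit=1000)
    print(budget.report({
        "system_prompt": "You are a careful assistant." * 10,
        "user_message": "Summarize the attached contract.",
        "tool_output": "row,value\n" * 200,
    }))
```

Even a crude report like this tends to show that tool outputs and pasted documents, not the user's message, consume most of the window.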

If you feel the pace of AI development is dizzying, you’re not alone. Agents, skills libraries, subagents, MCP servers, tools and plugins, Ralph Wiggum loops: it’s a lot to keep up with. Until you realize most of it converges on the same problem: protecting and compounding context.

That pressure is turning into a discipline. Software development is getting easier. The harder work, the new software engineering, is protecting and compounding context across time, tools, and workflows, without sacrificing reliability, security, or clarity.

The long-context arms race

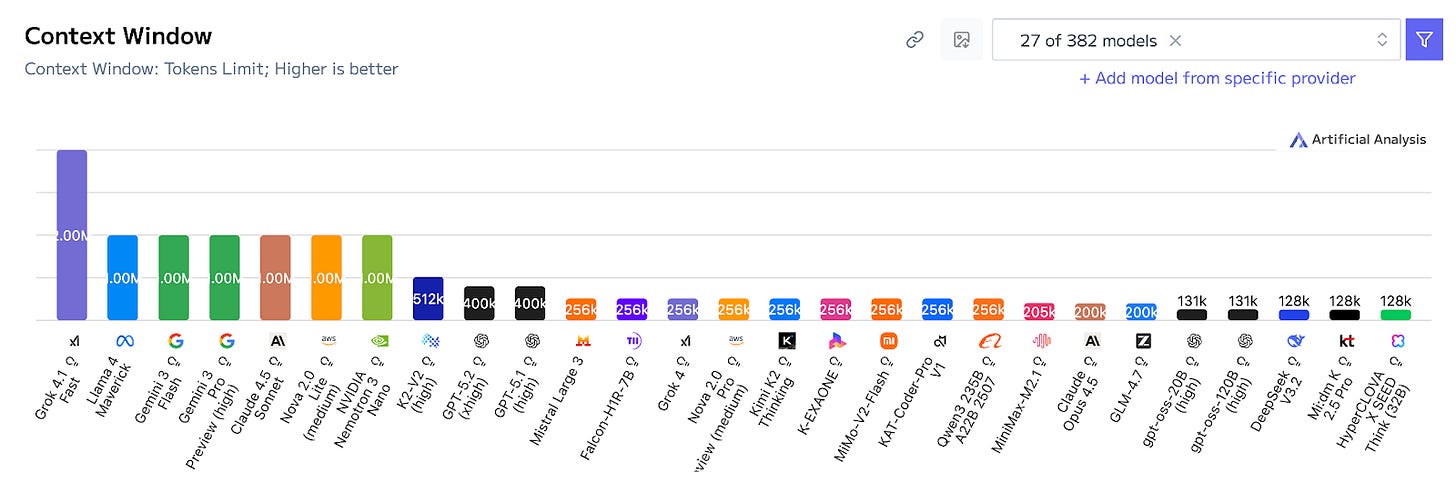

For the past two years, model providers have competed on context window size the way cloud companies once competed on storage limits.

The state of the art now supports 1M-plus token context windows. In plain English, you can hand a model a small library, not just a prompt. Long context expands what is possible.

But a bigger memory does not eliminate the hard part: deciding what matters.

Meet “context rot,” or why more can lead to worse performance

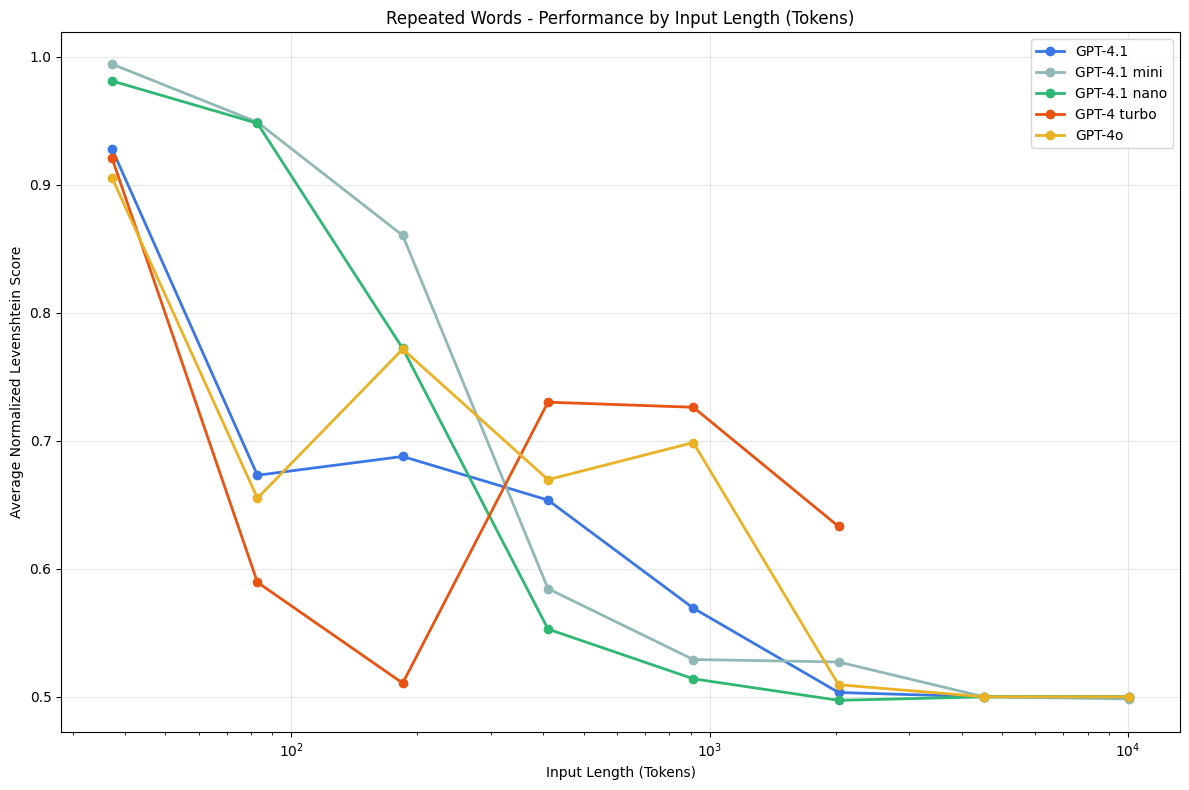

Researchers have documented a frustrating pattern in long-context models: performance can degrade just by moving the relevant information around inside a long context. Models often do best when the crucial detail sits near the beginning or the end, and they do worse when it is buried in the middle.

This is one face of what is often called context rot, a catch-all term for performance degradation as prompts grow longer, more crowded, and harder to search.

Long context is necessary for real work. But long context can still produce brittle behavior if the model cannot reliably use what you provided.

The question is no longer “How many tokens can I shove in?” It becomes “How do I curate the right tokens at the right time?”

Enter context engineering, the iterative practice of selecting what should go into the limited context window from an expanding universe of possible information.
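One small curation tactic follows from the position effect described above: keep the crucial details at the edges of the prompt and let the bulk material sit in the middle. The sketch below assumes a hypothetical `assemble_prompt` helper and a made-up section layout. Whether this ordering helps depends on the model and the task, so treat it as something to test rather than a rule.

```python
def assemble_prompt(question: str, key_facts: list[str], background: list[str]) -> str:
    """Place key facts first and the question last; background goes in the middle.

    The ordering is an assumption based on the observation that models tend to
    use information near the start or end of a long context more reliably.
    """
    sections = [
        "Key facts:\n" + "\n".join(f"- {fact}" for fact in key_facts),
        "Background:\n" + "\n\n".join(background),
        "Question: " + question,
    ]
    return "\n\n".join(sections)


if __name__ == "__main__":
    print(assemble_prompt(
        question="When does the renewal notice period end?",
        key_facts=["Renewal requires 60 days written notice."],
        background=["(long contract text)", "(long email thread)"],
    ))
```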

The 2026 story: protect the context window at all costs

Once you see the context window as a scarce resource, many recent “agent innovations” stop looking like separate ideas. Tools, MCP, skills libraries, orchestration loops, and retrieval are all variations on the same theme: preserve the window for judgment, and push everything else out.

Here is the simple mental model:

Tools and plugins: reach. The model does not try to be a browser or a database. It calls existing systems, then uses the results. This keeps the conversation lighter and the work more reliable.

MCP: plumbing. A standard way to connect agents to external tools and data without turning every integration into custom prompt glue.

Skills libraries: know-how. Reusable playbooks packaged for an agent. Instead of pasting your entire SOP into the prompt, the agent loads the right instructions only when needed.

Orchestration loops (the Ralph Wiggum idea): reset and re-anchor. Long-running work stays grounded in artifacts (files, diffs, tests) rather than an endlessly growing chat transcript, because the system keeps refreshing the context window and re-centering on the current source of truth.

Retrieval and summarization: select, then compress. Pull the few documents that matter, summarize them into something the model can use, and move on.
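"Select, then compress" can be sketched in a few lines. Everything here is a toy stand-in: `score` uses simple word overlap where a real system would likely use embeddings or a search index, and `compress` truncates where a real system would summarize. The function names are hypothetical.

```python
def score(query: str, doc: str) -> int:
    """Toy relevance score: number of distinct query words found in the doc."""
    query_words = set(query.lower().split())
    doc_words = set(doc.lower().split())
    return len(query_words & doc_words)


def select(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Keep only the k most relevant documents."""
    return sorted(docs, key=lambda d: score(query, d), reverse=True)[:k]


def compress(doc: str, max_words: int = 20) -> str:
    """Stand-in for summarization: keep the first max_words words."""
    return " ".join(doc.split()[:max_words])


def build_context(query: str, docs: list[str], k: int = 2, max_words: int = 20) -> list[str]:
    """Select first, then compress what was selected."""
    return [compress(d, max_words) for d in select(query, docs, k)]


if __name__ == "__main__":
    corpus = [
        "refund policy for enterprise customers lasts thirty days",
        "office lunch menu for friday",
        "enterprise refund requests need a ticket number",
    ]
    print(build_context("enterprise refund policy", corpus, k=2, max_words=5))
```

The order matters: compressing everything first and selecting afterward spends effort on documents that never reach the window.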

Different names, same objective: treat context like a budget, and spend it on the decisions that matter.
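The "reset and re-anchor" item is perhaps the least intuitive of the five, so here is a minimal sketch of the shape of such a loop. It assumes a hypothetical `step` callable standing in for a model call, and `render_context`, `run_loop`, and `is_done` are illustrative names. The point is that each iteration rebuilds the context from the current artifacts instead of appending to a transcript.

```python
from typing import Callable


def render_context(task: str, artifacts: dict[str, str]) -> str:
    """Build a fresh context from the task and the current artifacts only."""
    body = "\n\n".join(f"{name}:\n{content}" for name, content in sorted(artifacts.items()))
    return f"Task: {task}\n\n{body}"


def run_loop(
    task: str,
    artifacts: dict[str, str],
    step: Callable[[str], dict[str, str]],      # hypothetical stand-in for a model call
    is_done: Callable[[dict[str, str]], bool],  # e.g. "do the tests pass?"
    max_iters: int = 10,
) -> tuple[dict[str, str], int]:
    """Repeat: re-render context from artifacts, take one step, save the results."""
    artifacts = dict(artifacts)  # work on a copy
    for iteration in range(1, max_iters + 1):
        context = render_context(task, artifacts)  # no transcript carried over
        artifacts.update(step(context))
        if is_done(artifacts):
            return artifacts, iteration
    return artifacts, max_iters
```

Because nothing but the artifacts carries over, the context stays roughly the same size on iteration fifty as on iteration one.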

Why it matters

When context is high-quality and well-structured, the AI produces excellent output. When context is noisy or inconsistent, less so. Garbage in, garbage out.

Software development may be getting easier. Software engineering is being redefined. The bottleneck is no longer writing code. It is assembling systems that preserve intent across time, tools, agents, and workflows: systems that are reliable, secure, composable, and understandable. It’s context engineering.

So the real craft of building AI agents in 2026 looks like:

Deciding what must be in the window.

Deciding what should live outside it in tools, files, and skills.

Building mechanisms that load the right context at the right time.

Having the discipline to keep the working set clean.
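As a rough illustration of "load the right context at the right time," the sketch below keeps a short index of skills in the window at all times and pulls in the full instructions for one skill only when it is needed. The `Skill` class and helper names are hypothetical and do not reflect any particular product's format.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Skill:
    name: str
    description: str   # short, always in the window
    instructions: str  # long, loaded only on demand


def skill_index(skills: list[Skill]) -> str:
    """One line per skill: cheap enough to keep in every prompt."""
    return "\n".join(f"- {s.name}: {s.description}" for s in skills)


def load_skill(skills: list[Skill], name: str) -> str:
    """Fetch the full instructions for a single skill."""
    for s in skills:
        if s.name == name:
            return s.instructions
    raise KeyError(name)


def build_window(system_prompt: str, skills: list[Skill], user_message: str,
                 active: Optional[str] = None) -> str:
    """Always include the index; include full instructions only for the active skill."""
    parts = [system_prompt, "Available skills:\n" + skill_index(skills)]
    if active is not None:
        parts.append(f"Instructions for {active}:\n" + load_skill(skills, active))
    parts.append(user_message)
    return "\n\n".join(parts)
```

The design choice is the split between a cheap description and an expensive body: the first decides what must be in the window, the second lives outside it until called for.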

In other words, the context window is becoming what RAM was to early computing. It is the scarce resource around which the whole system organizes, at least for now.

This feels like the future of software engineering. And I don’t think we’re going back.

Best of the rest:

🛰️ The Palantirization of Everything – Palantir’s real edge is not the model, it’s the hard-won ability to wire AI into real workflows, data, and decision loops, and that playbook is about to spread everywhere. – a16z News

🤖 My agents are working. Are yours? – Jack Clark captures the real shift in agentic AI: not better chat, but a managed fleet of synthetic coworkers that multiplies your output while you hike, sleep, and live. – Jack Clark on X

🏢 The future of enterprise software – Aaron Levie makes the case that agents will not replace SaaS, they will ride on top of systems of record as guardrails, forcing a shift from seats to consumption pricing and expanding enterprise software markets. – Aaron Levie on X

🛠️ Compound Engineering: How Every Codes With Agents – A practical four-step loop that turns agent-written code into a flywheel, where each change teaches the system and speeds up the next one. – Every

🤖 2026: This is AGI – Pat Grady and Sonya Huang argue that long horizon agents can iteratively figure things out in messy, ambiguous environments, which is the practical shift from AI that talks to AI that does, and it pulls a surprising amount of the future into 2026. – Pat Grady on X

Charts that caught my eye:

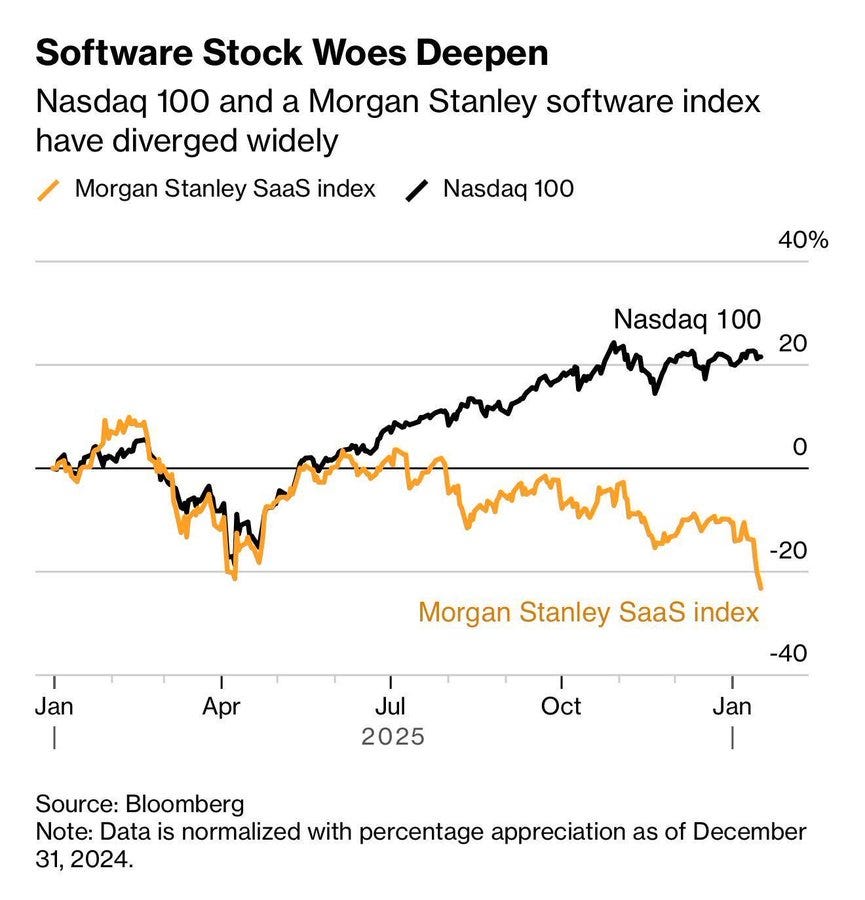

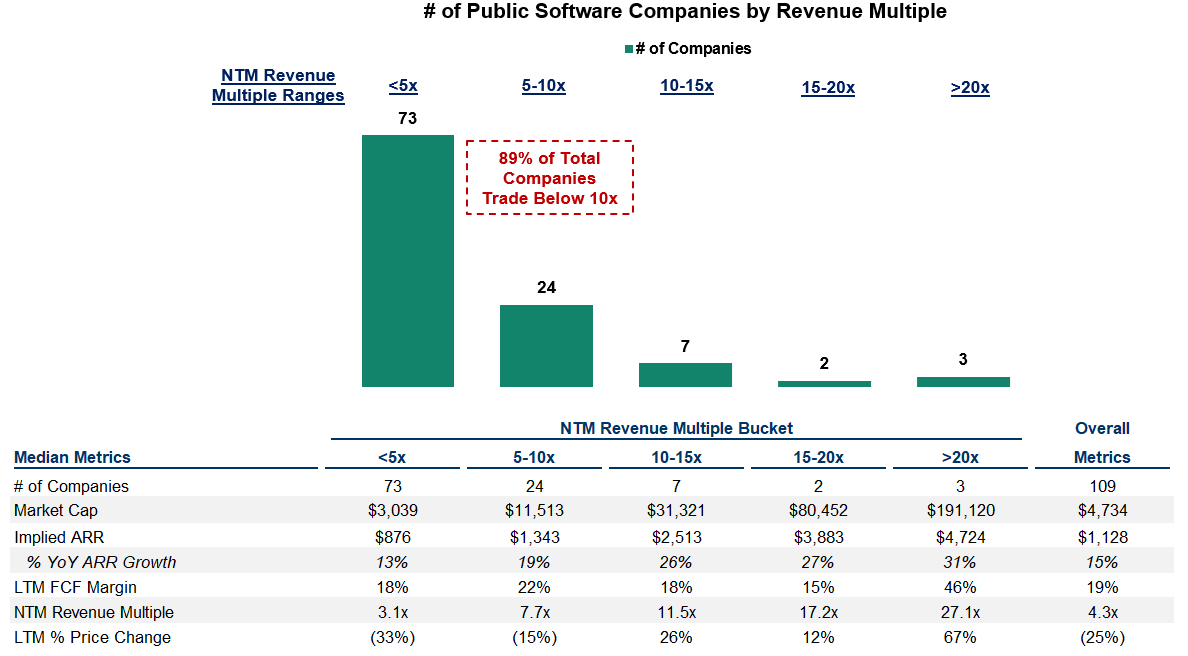

→ Why does it matter? It’s an interesting time for SaaS. One clean way to see the scale is the BVP Nasdaq Emerging Cloud Index, which still represents about $2.0T in market cap and trades at an average 7.3x revenue. Cloud software used to be the default public-market compounder, but it keeps trading down under the logic that AI will replicate big chunks of what these companies do. I’m not sure that story holds up in the places that matter. AI will pressure the thin layers, but it also raises the value of the hard parts: distribution, integrations, and the domain data sitting underneath systems of record. Like most mature markets, that feels like a barbell: the giants get even bigger, and the specialists get even more valuable. Perhaps you won’t want to be in the middle.

→ Why does it matter? Additional data from Meritech on the above.

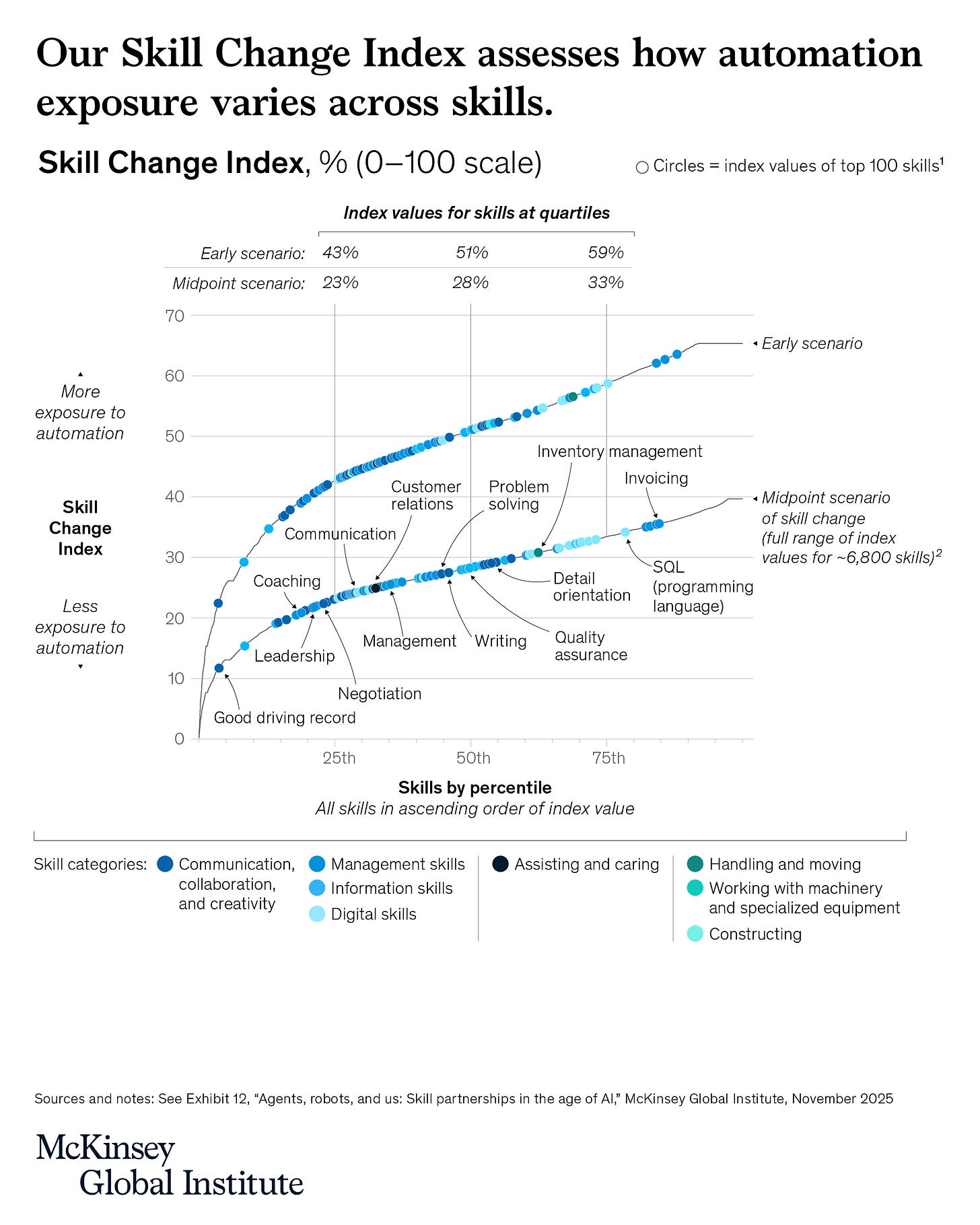

→ Why does it matter? AI won’t make most human skills obsolete, but it will change how they’re used. According to McKinsey, negotiation, problem-solving, and leadership will matter more than ever as people work alongside agents and robots.

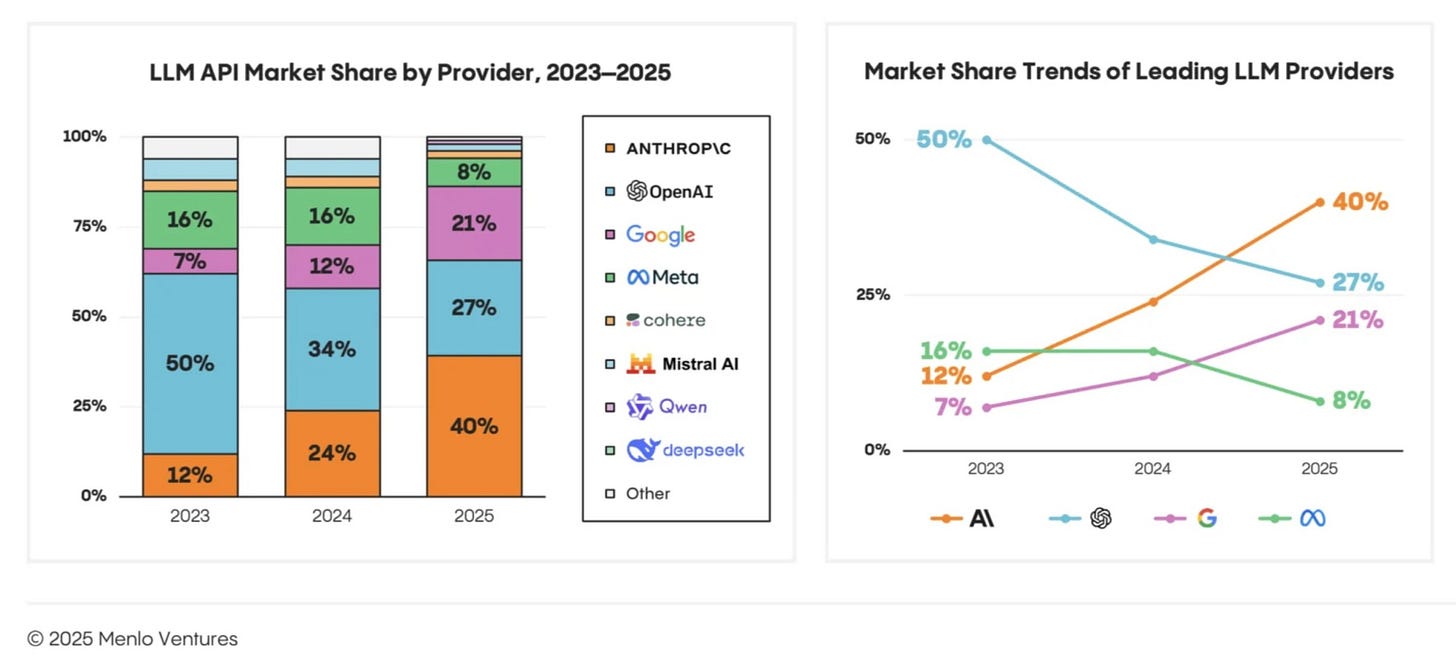

→ Why does it matter? Enterprise LLM market share. Interesting to see Anthropic up and to the right as OpenAI focuses heavily on consumer.

→ Why does it matter? Have always enjoyed Avenir’s state of SaaS; this year doesn’t disappoint. Check it out here.

Tweets that stopped my scroll:

→ Why does it matter? Watch the exact moment that led to winning a Nobel Prize. Full documentary is here (bookmark for your next plane ride).

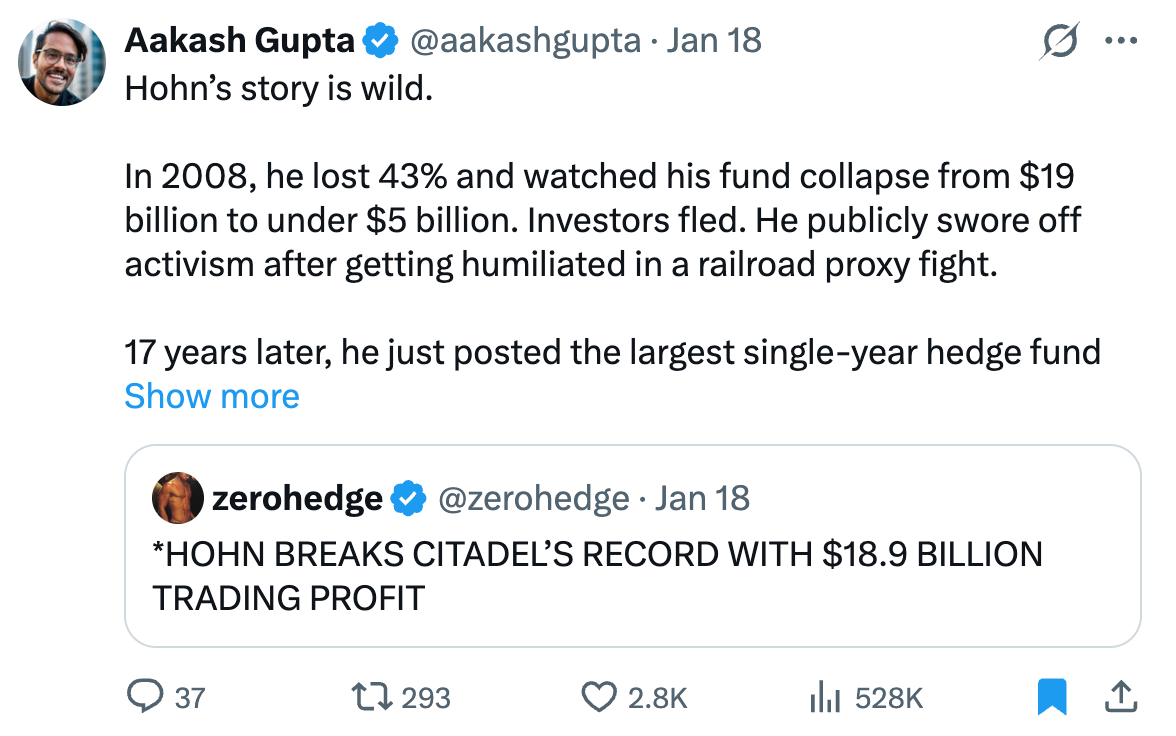

→ Why does it matter? Own monopolies. You can check out TCI’s holdings on Whale Wisdom here.

Worth a watch or listen at 1x:

→ Why does it matter? Had a blast earlier this week with Diane King Hall on Schwab’s Morning Movers discussing a wide range of topics in AI and investment management! Check it out here.

→ Why does it matter? There is a lot coming out of Davos this week, including this conversation with Anthropic’s founder Dario Amodei. Dario is a great thinker, and it’s worth gaining his perspective on all of the most pressing topics in AI.

→ Why does it matter? This McKinsey & Company presentation explores how AI impacts software development, moving beyond Agile methodologies. Worth a watch to see what could be beyond agile.

Quotes & eyewash:

→ Why does it matter? Wow! More than $520 million in contributions from Dave Duffield, including a new pledge of $371.5 million and a 2025 commitment of $100 million, combined with previous gifts, will establish the Cornell David A. Duffield College of Engineering. Go Big Red!

The mission:

The Wall Street Journal once used “Read Ambitiously” as a slogan, but I took it as a personal challenge. Our mission is to give you a point of view in a noisy, changing world. To unpack big ideas that sharpen your edge and show why they matter. To fit ambition-sized insight into your busy life and channel the zeitgeist into the stories and signals that fuel your next move. Above all, we aim to give you power, the kind that comes from having the words, insight, and legitimacy to lead with confidence. Together, we read to grow, keep learning, and refine our lens to spot the best opportunities. As Jamie Dimon says, “Great leaders are readers.”

Disclaimer: This content is for informational purposes only and does not constitute financial, investment, or legal advice. Readers should do their own research and consult with a qualified professional before making any decisions.
