Reading Ambitiously 2-14-25

AI Moats & Buffett, GPT 4.5 & 5, Workday Agents, OpenAI Chairman Bret Taylor, YC top 40 most valuable, Cursor $100M+ ARR fastest ever

Enjoy this week’s Reading Ambitiously as a podcast generated by AI.

In the news:

→ Why does it matter? I’ve been thinking about this Tweet a lot. Balaji nails the central question in AI today: Where does lasting value get built?

If raw model performance continues to converge across providers, the evolution of cloud computing may offer an important clue: The moat will move up the stack.

The AI arms race is now about building those castles.

In the early days of cloud computing, AWS dominated by offering the best raw infrastructure. But as cloud services matured, compute and storage became commodities.

The biggest winners weren’t only AWS, Azure, and GCP, but also the SaaS giants built on the new cloud infrastructure: Salesforce, Workday, Snowflake, Datadog.

The same pattern is playing out in AI. OpenAI, Anthropic, and Meta are competing at the infrastructure level, but differentiation is eroding.

If anyone can plug into an LLM via API or use open-source alternatives like Meta’s Llama and DeepSeek, where’s the moat?

Answer: It may not be in the model; it may be in what you build around it.

This is why OpenAI is racing to build plugin ecosystems, API integrations, and app layers—they know owning the end-user experience is the real prize.

They’re turning ChatGPT into a full-fledged platform, not just an AI interface. With Operator and Deep Research locked behind a $200/month Pro license, they’re pushing premium capabilities. And they’re serious about distribution—reportedly spending $15.5M to acquire Chat.com as a Google-like home for these products.

We’re seeing other players take the same approach. Amazon Bedrock, IBM watsonx, and Google’s Vertex AI are designed to turn AI into a sticky enterprise offering: not just a raw model, but a deeply integrated solution designed to lock customers in.

Cloud computing followed this exact pattern:

AWS initially sold raw compute but knew they needed more than just EC2.

They built out managed services such as Lambda (serverless computing), S3 (object storage), and RDS (managed databases), not just to provide new services but to create lock-in. We used to joke that the “L” in Lambda stood for “lock-in.”

Once companies built applications that relied on AWS’s managed services, it became significantly harder to switch providers.

The same thing is happening in AI. If companies build their entire AI workflows using OpenAI’s function calling, fine-tuning APIs, and enterprise AI tools, it won’t matter if another LLM is marginally better—switching will be too painful.

Where AI Moats Are Emerging:

Verticalized AI applications (Harvey AI for legal, Ridgeline for investment management)

Proprietary data integration & access (the pipes that feed data from systems of record such as CRMs and ERPs to AI applications)

Workflow lock-in (embedding AI deep into business processes)

Network effects (usage data + fine-tuning feedback loops)

For AI startups, the message is clear:

Thin wrappers over GPT are risky unless you have deep distribution or defensibility.

Full-stack AI applications with embedded workflows and proprietary data have the best shot at real moats.

Feedback loops and network effects will create self-reinforcing advantages over time. The best AI companies aren’t just selling one-off models; they’re creating self-improving systems (e.g., GitHub Copilot, Cursor, Tesla FSD).

The first wave of AI startups rode the momentum of OpenAI and Anthropic’s research. The next wave will need real moats.

The future isn’t just about who is building state-of-the-art LLMs—it equally hinges on who builds the best AI-powered businesses.

Best of the rest:

💡 Three Observations by Sam Altman - OpenAI CEO Sam Altman shares insights on the rapid advancements in AI, highlighting the exponential decrease in costs, the predictable gains from increased investment, and the profound societal impacts anticipated from these trends. - Sam Altman’s Blog

AI intelligence scales with log of resources (compute, data, inference)

Cost to use a given level of AI drops about 10x every 12 months (vs. Moore’s Law at 2x every 18 months)

Value of increasing AI intelligence is super-exponential
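To get a feel for how different those two rates are, here is a rough back-of-the-envelope sketch that compounds each one over the same window. The function name and the three-year horizon are my own illustrative assumptions, not something from Altman’s post; only the 10x/12-month and 2x/18-month figures come from the observations above.

```python
def cumulative_factor(factor: float, period_months: float, horizon_months: float) -> float:
    """Compound a 'factor every period_months' rate over horizon_months."""
    return factor ** (horizon_months / period_months)


# Illustrative horizon (an assumption, chosen only to make the gap visible).
HORIZON_MONTHS = 36

# Rates quoted in the observations above.
ai_cost_drop = cumulative_factor(10, 12, HORIZON_MONTHS)  # 10x every 12 months
moore_gain = cumulative_factor(2, 18, HORIZON_MONTHS)     # 2x every 18 months

print(f"AI cost reduction over {HORIZON_MONTHS} months: {ai_cost_drop:,.0f}x")
print(f"Moore's Law gain over {HORIZON_MONTHS} months: {moore_gain:,.0f}x")

# Same comparison on a per-year basis.
moore_per_year = cumulative_factor(2, 18, 12)
print(f"Moore's Law, annualized: about {moore_per_year:.2f}x per year vs. 10x for AI cost")
```

If those rates held for three years, the cost of a given level of AI would fall roughly 1,000x, while a Moore’s Law pace would deliver about 4x over the same stretch.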

🤖 Workday Makes a Play to Manage Your AI Agents - Workday introduces new tools to help businesses deploy and manage AI agents within their operations. - Josh Bersin

🚀 OpenAI Roadmap Update for GPT-4.5 and GPT-5 - OpenAI outlines plans for GPT-4.5 (“Orion”) and GPT-5, aiming to simplify product offerings, unify models, and enhance AI capabilities. - Sam Altman on X

Charts that caught my eye:

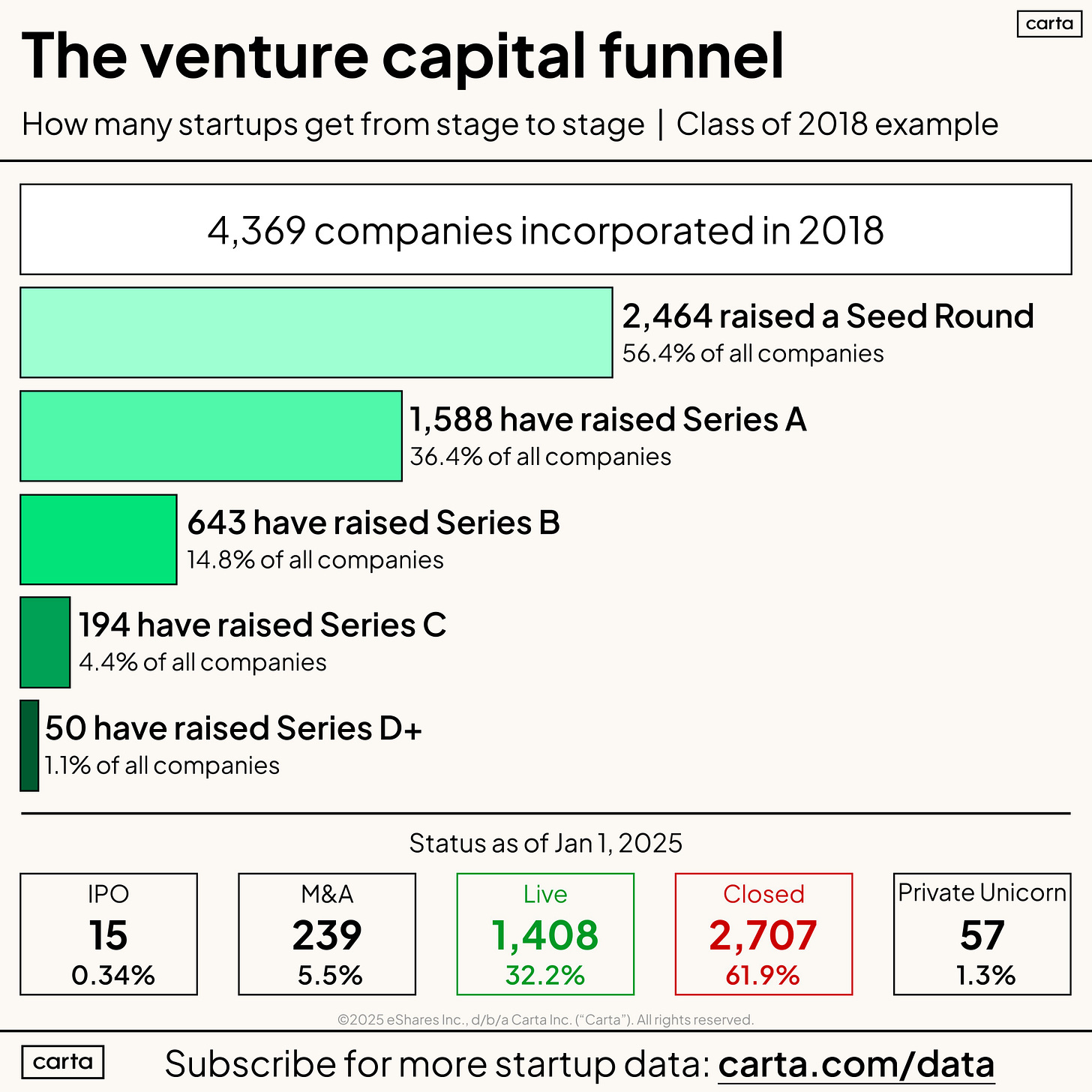

→ Why does it matter? Startupland is ruled by the power law.

🚀 0.34% of companies founded in 2018 have IPO’ed.

💰 1% have raised a Series D+.

💀 62% have shut down.

The odds are brutal—but the upside is massive for those who break through.

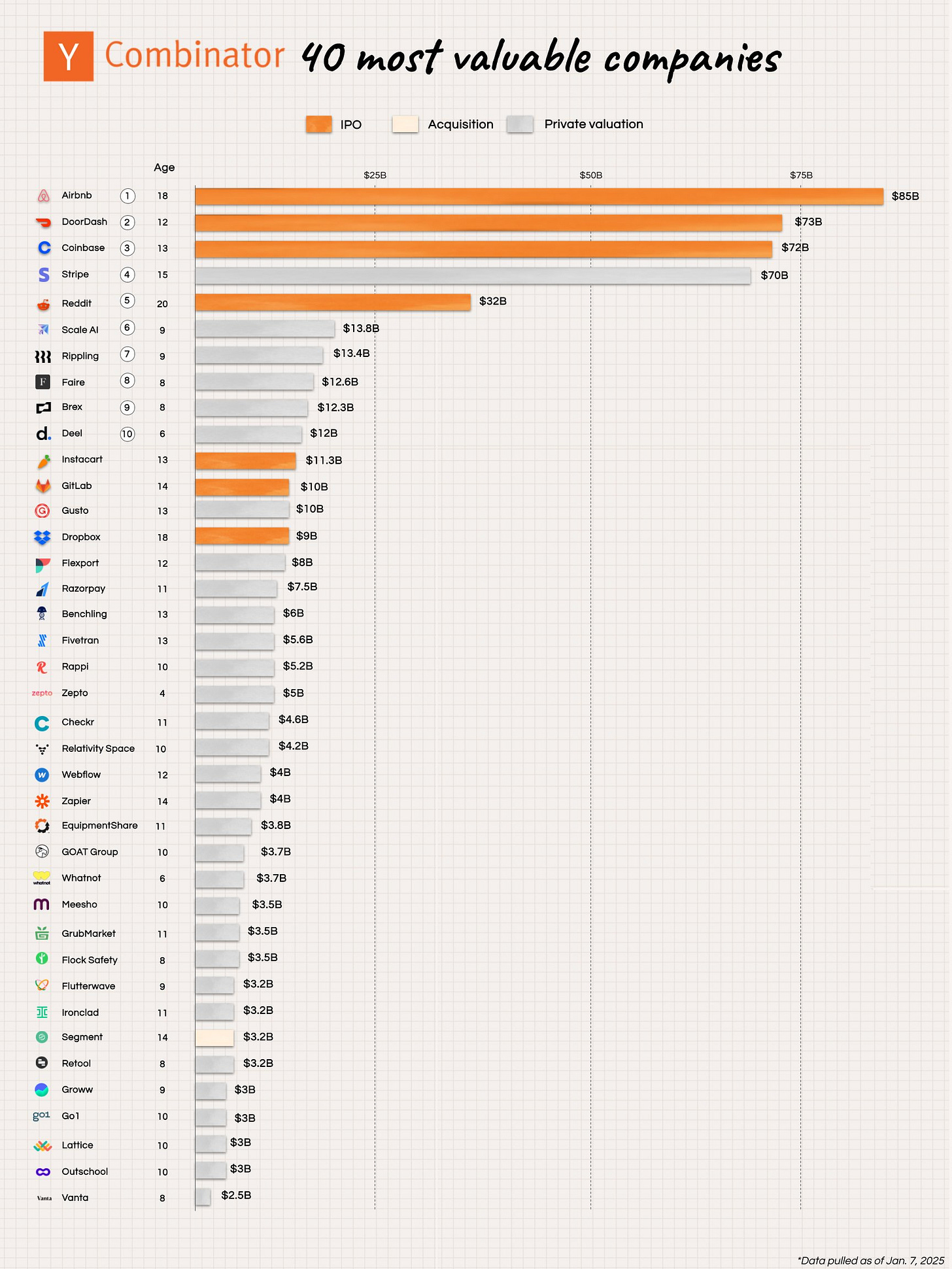

→ Why does it matter? Y Combinator plays the power law like no one else. All 40 of their top companies are valued at $2B+—an astounding hit rate in a venture world where most startups fail. From Airbnb ($85B) to Vanta ($2.5B), YC has a proven ability to attract and identify founders who build market-defining companies. They aren’t just betting on startups—they’re minting category leaders.

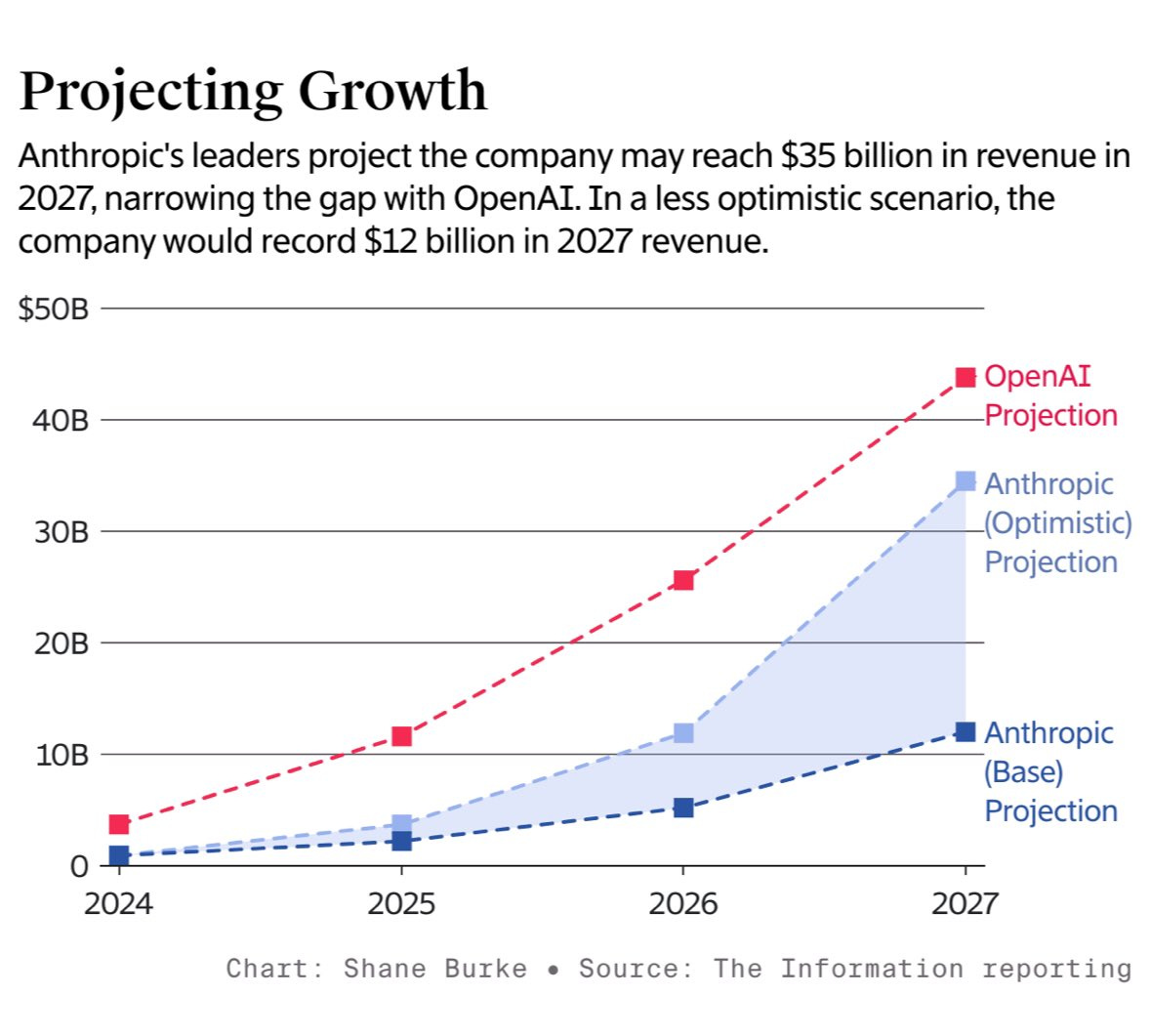

→ Why does it matter? OpenAI and Anthropic are growing remarkably fast. OpenAI is ~5x Anthropic by revenue and projects $44B in 2027.

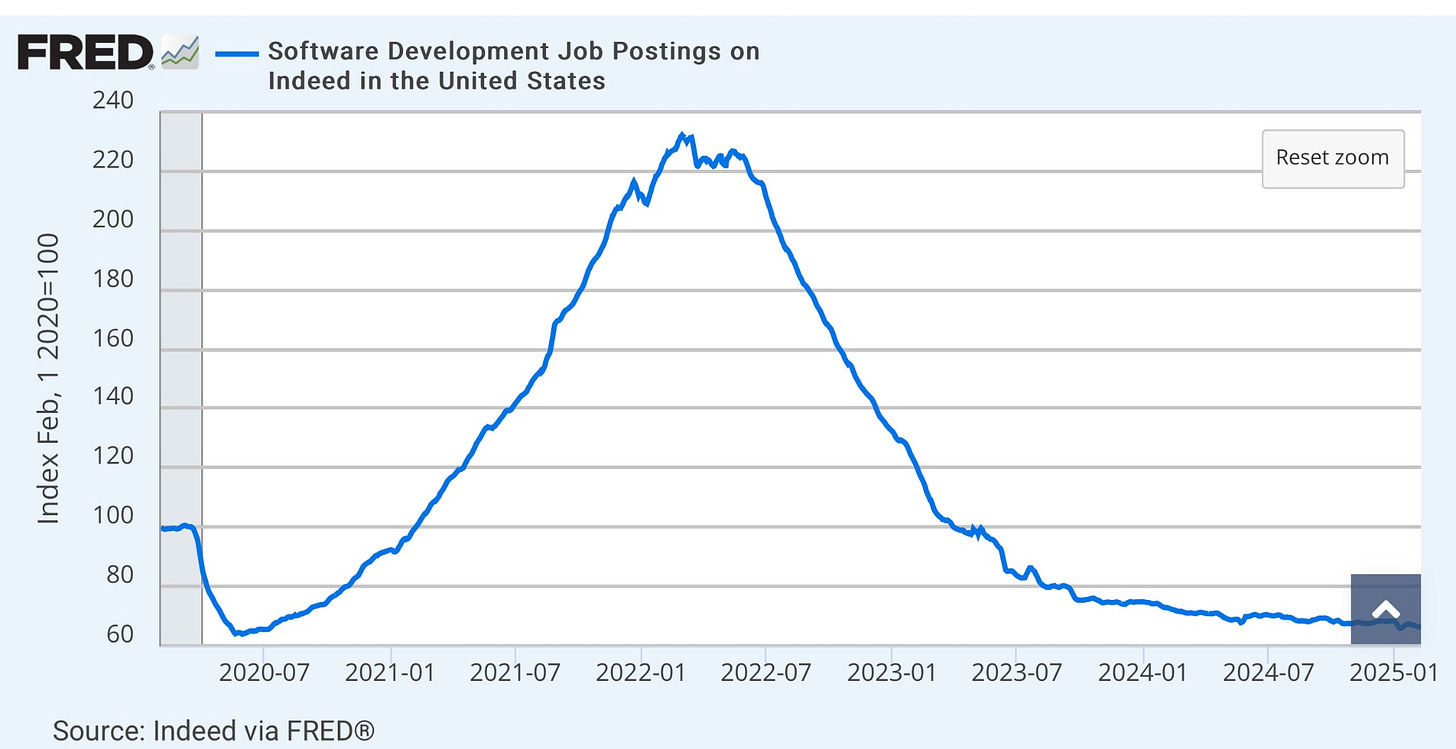

→ Why does it matter? This chart is crazy: software development job postings are back to 2020 levels, down nearly 4x from their ZIRP-era peak in 2022. With higher interest rates and AI reshaping work, the market has sharply corrected.

Tweets that stopped my scroll:

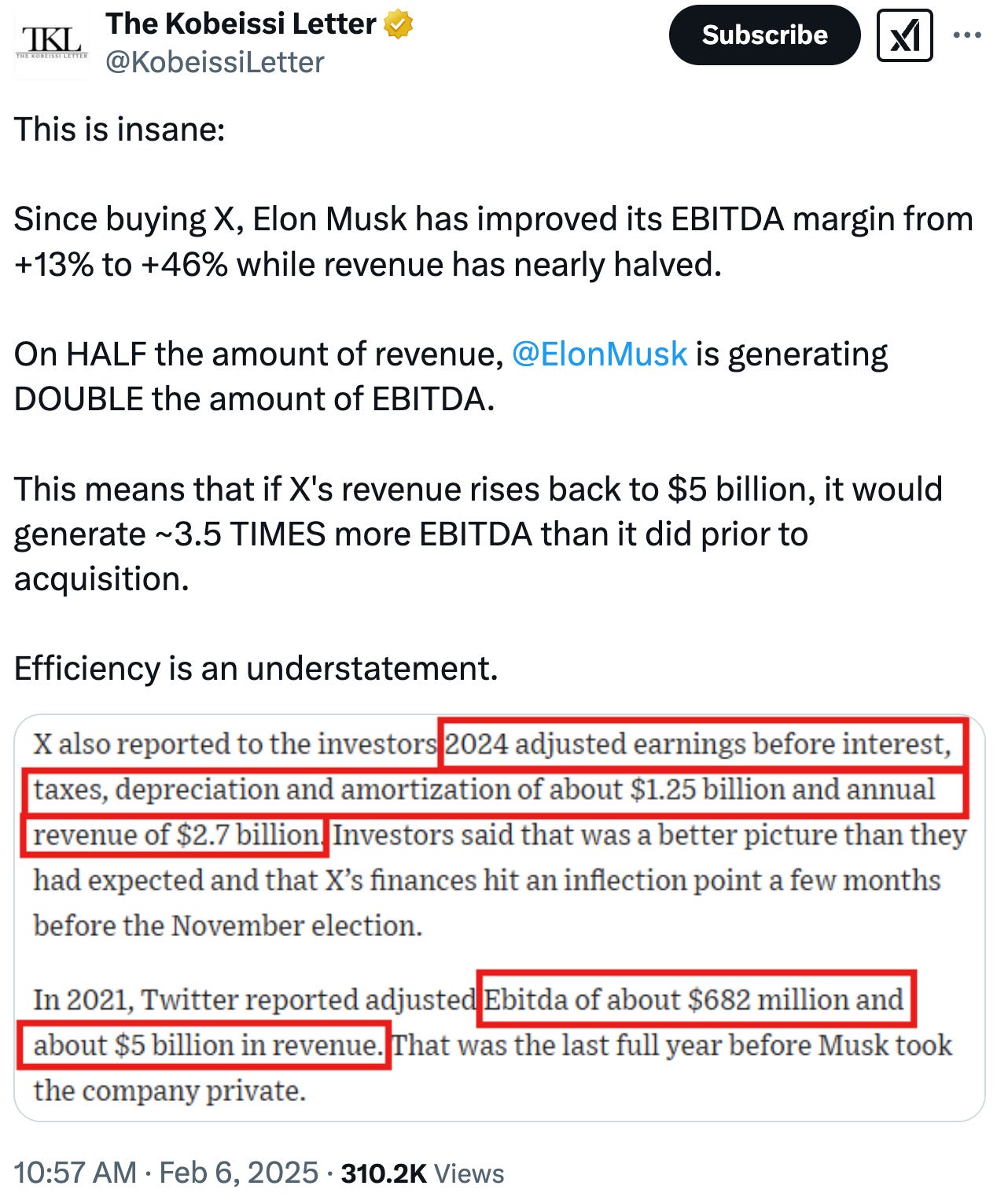

→ Why does it matter? X is operating with 50% of the revenue yet delivering 2x the EBITDA—all with 80% fewer people. Wow!
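A quick sketch of what those three ratios imply when you combine them. The variable names are mine, and the only inputs are the figures quoted above, treated as rough ratios against the earlier baseline; no absolute dollar amounts are assumed.

```python
# Ratios relative to the earlier baseline, taken from the commentary above.
revenue_ratio = 0.5    # "50% of the revenue"
ebitda_ratio = 2.0     # "2x the EBITDA"
headcount_ratio = 0.2  # "80% fewer people" means 20% of the prior headcount

# EBITDA margin is EBITDA / revenue, so the margin multiple is the ratio of ratios.
margin_multiple = ebitda_ratio / revenue_ratio

# Per-employee productivity multiples.
revenue_per_employee_multiple = revenue_ratio / headcount_ratio
ebitda_per_employee_multiple = ebitda_ratio / headcount_ratio

print(f"EBITDA margin multiple:        {margin_multiple:.1f}x")
print(f"Revenue per employee multiple: {revenue_per_employee_multiple:.1f}x")
print(f"EBITDA per employee multiple:  {ebitda_per_employee_multiple:.1f}x")
```

Taken at face value, that works out to a 4x EBITDA margin, 2.5x the revenue per employee, and 10x the EBITDA per employee.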

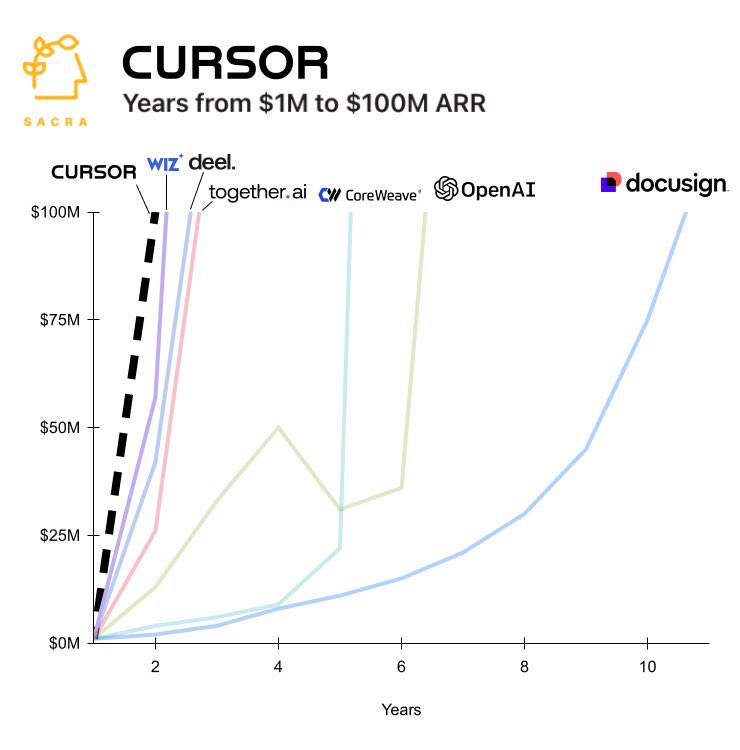

→ Why does it matter? Andrej Karpathy, a founding engineer at OpenAI, calls it “vibe coding,” but it’s really a glimpse into the future of knowledge work. With AI, the user experience is shifting: instead of typing, clicking, and navigating complex interfaces, you can now simply talk to AI tools. Karpathy’s tool of choice? Cursor, an AI-powered code editor whose Composer feature turns natural-language requests, spoken in his case, into code. This shift could redefine how we interact with software, making AI more intuitive and accessible than ever. Oh, and one more thing: Cursor just became the fastest-growing company to hit $100M in ARR. Ever.

Worth a watch or listen at 1x:

→ Why does it matter? Bret Taylor, Founder and CEO of Sierra AI, was once the Co-CEO and heir apparent to Marc Benioff at Salesforce, until their split left Benioff as sole CEO. He’s also the Chairman of OpenAI’s Board, giving him a unique vantage point on the AI opportunity. He is revered across the tech industry, and his perspective on AI agents, including how he defines them, is worth paying attention to.

Quotes & eyewash:

→ Why does it matter? Hard work starts with agitation. The key? Accept it. We often wait to feel good before diving in—but it’s the act of working, pushing through that initial friction, that actually creates momentum. Lean in, get going, and let the effort fuel your motivation.

“In God we trust; everyone else, we follow up on.”

"The most important thing to me was you have to pick your own strategy, not try to duplicate what others are doing, and you have to make your product great at a few important things. You don't have to compete for each feature, but if you really make it amazingly good at a few things, people buy a product not for all the functions it does, but is it great at something specific. And as long as you pick something that's important and do it amazingly differentiatedly, you can win." - Thomas Kurian, Google Cloud CEO

The Wall Street Journal once used ‘Read Ambitiously’ as a slogan, but it became a challenge I took to heart. If that old slogan still speaks to you, this weekly curated newsletter is for you. Every week, I will summarize the most important and impactful headlines across technology, finance, AI and enterprise SaaS. Together, we can read with an intent to grow, always be learning, and refine our lens to spot the best opportunities. As Jamie Dimon says, “Great leaders are readers.”
