Reading Ambitiously 2-21-25

Meet Grok 3 from xAI, ex-OpenAI CTO's new gig, Replit + Agents = we can all build software, Unbundling BPO & Service as Software from a16z, The Best Teams Win, Groq & Apple's $19 polishing cloth

Enjoy this week’s Reading Ambitiously as a podcast entirely generated by AI.

In the news:

Musk's xAI unveils Grok-3 AI chatbot to rival ChatGPT, China's DeepSeek (Reuters)

→ Why does it matter? At CES 2025, Jensen Huang (CEO, Nvidia) outlined what he sees as the three critical scaling laws that drive AI breakthroughs:

Pre-training scaling (build a massive model with massive data)

Post-training scaling (refine it through techniques like reinforcement learning)

Test-time scaling (give the AI time to actually think through problems instead of rushing to answers)

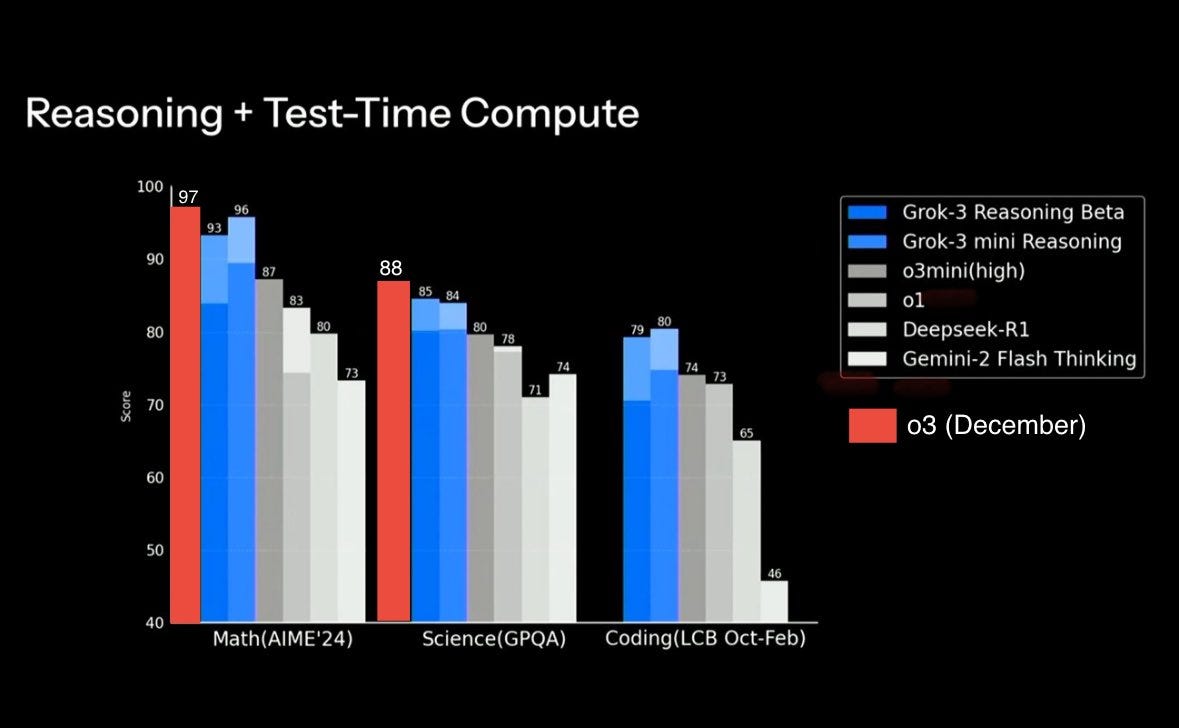

There's intense focus on test-time compute right now - you can see it in reasoning models such as OpenAI's o3 and Google's Gemini 2.0 Flash Thinking. This emphasis comes as the industry grapples with a tough question: are we hitting diminishing returns on massive pre-training capex?

Yet we're watching these scaling laws being tested in fascinating ways. Take DeepSeek, which just upended conventional wisdom. Their model matches leading LLMs at just 7% of the typical API cost and runs on standard workstations. It's a powerful demonstration that engineering ingenuity - born from capital constraints - can drive breakthroughs in model optimization and selective activation.

Enter Grok 3. Announced Monday, it represents the polar opposite approach. In an old Electrolux factory in Memphis, xAI is pushing pre-training compute to levels that seemed impossible just months ago. They've deployed 200,000 GPUs - about $4 billion worth of Nvidia's latest chips - assembled in just 214 days. For perspective, the previous record for an AI training cluster was 32,000 GPUs, so this one is over 6x larger. That's not a typo.

The results? While Grok 3 shows impressive performance in mathematical reasoning and coding benchmarks, the most telling detail might be what's missing - direct comparisons to OpenAI's latest o3 model were notably absent from xAI's materials. The internet (h/t @12exyz) decided to add them.

But here's what makes this truly significant: xAI doubled their compute capacity in just 92 days, from 100K to 200K GPUs. Consider the timeline: OpenAI was founded in 2015, Anthropic in 2021, and xAI in 2023. In just 18 months, a company that didn't exist during COVID has built an AI infrastructure rivaling organizations with nearly a decade of experience and billions in investment. This isn't just about raw computing power - it's about the unprecedented speed at which new players can now enter and compete in advanced AI development. The question isn't just whether bigger is better, but whether the barriers to entry in frontier AI are fundamentally changing.
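For anyone who wants to sanity-check the numbers above, here is a rough back-of-the-envelope sketch. It only uses the figures quoted in this post (none independently verified), and it assumes the 214-day total includes the 92-day doubling, which would leave about 122 days for the first 100K GPUs. The function and variable names are made up for illustration.

```python
# Back-of-the-envelope check of the cluster figures quoted in this post.
# All inputs are the post's own numbers, not independently verified.
GPUS_TOTAL = 200_000
GPUS_BEFORE_DOUBLING = 100_000
GPUS_PREVIOUS_RECORD = 32_000
CLUSTER_COST_USD = 4_000_000_000
BUILD_DAYS_TOTAL = 214
DOUBLING_DAYS = 92


def cluster_summary() -> dict:
    """Derive simple ratios from the quoted figures."""
    # Assumption: the 214 days cover both phases, so the first phase
    # is whatever remains after the 92-day doubling.
    first_phase_days = BUILD_DAYS_TOTAL - DOUBLING_DAYS
    gpus_added = GPUS_TOTAL - GPUS_BEFORE_DOUBLING
    return {
        "scale_vs_previous_record": GPUS_TOTAL / GPUS_PREVIOUS_RECORD,
        "implied_cost_per_gpu_usd": CLUSTER_COST_USD / GPUS_TOTAL,
        "first_phase_days": first_phase_days,
        "first_phase_gpus_per_day": GPUS_BEFORE_DOUBLING / first_phase_days,
        "second_phase_gpus_per_day": gpus_added / DOUBLING_DAYS,
    }


summary = cluster_summary()
for name, value in summary.items():
    print(f"{name}: {value:,.2f}")
```

On these inputs the cluster comes out at 6.25x the previous record and roughly $20,000 per GPU, and the second 100K GPUs went in at a faster daily pace than the first. Treat it as a rough gut check on the claims, not a cost model.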

We're witnessing a defining moment in AI's evolution, reminiscent of previous paradigm shifts in computing. Just as the industry split between open and closed approaches during the transition from mainframe to client/server (Sun vs Microsoft), AI is reaching its own crossroads. xAI's massive infrastructure investment betting on scale faces off against DeepSeek's efficient, open-source approach that's already seeing rapid community adoption. The stakes couldn't be higher - the winner of this architectural battle may determine how AI develops for the next decade.

Best of the rest:

💻 New Junior Developers Can’t Actually Code - A deep dive into how AI tools are changing the way new developers learn—and why it might be a problem. - NNM Blog

🧠 Thinking Machines Lab: Ex-OpenAI CTO Mira Murati’s New Startup - Mira Murati unveils her new venture, Thinking Machines Lab, focused on building AI systems that are more customizable, capable, and widely understood. - TechCrunch

🏗️ Zillow Builds Production Software Using Only AI - Replit and Anthropic's AI agents successfully developed and deployed enterprise software without human engineers, marking a significant milestone for AI-powered development. - VentureBeat

P.S. Sometimes headlines can be hard to fully grasp without visual context. This 45-second video shows AI agents and Replit working together beautifully. (h/t @LamarDealMaker)

Charts that caught my eye:

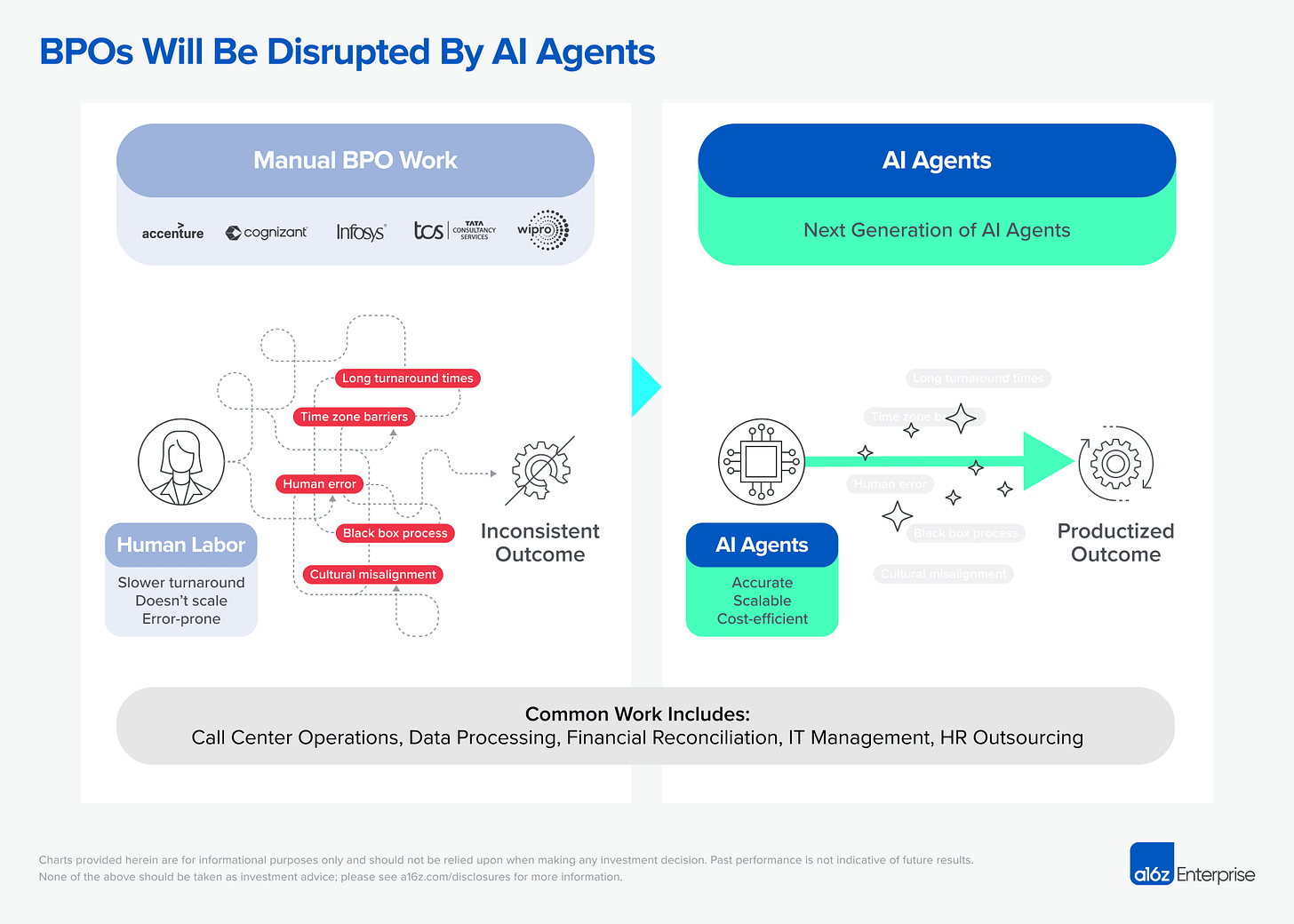

Unbundling the BPO: How AI Will Disrupt Outsourced Work (a16z)

→ Why does it matter? AI agents are revolutionizing Business Process Outsourcing (BPO), enabling companies to bring previously outsourced processes back in-house and automate them with a digital workforce. This transformation is redefining the SaaS landscape, ushering in the era of Service as Software, where software doesn't just help people do their jobs—it does the job too.

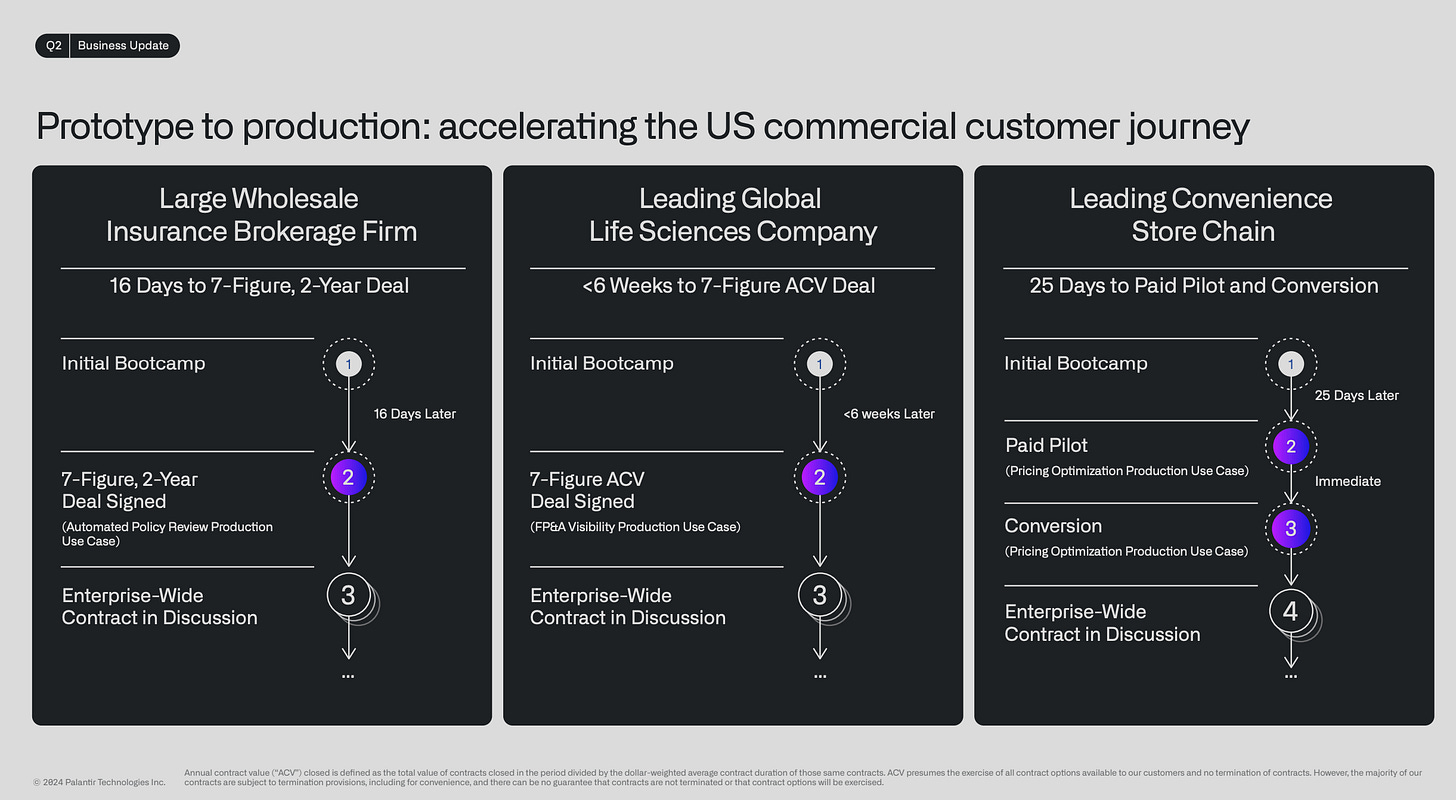

Palantir Q2 2024 Earnings Presentation (Palantir.com)

→ Why does it matter? Last week we discussed Palantir having only 2% of its competitors' total revenue, but the speed at which the company is executing is unprecedented. Palantir's bootcamp-led growth (BLG) model is rewriting the B2B enterprise sales playbook. By accelerating the customer journey, reducing time-to-value, and fostering deep engagement through immersive bootcamps, BLG lets customers experience the transformative potential of Palantir's solutions fast!

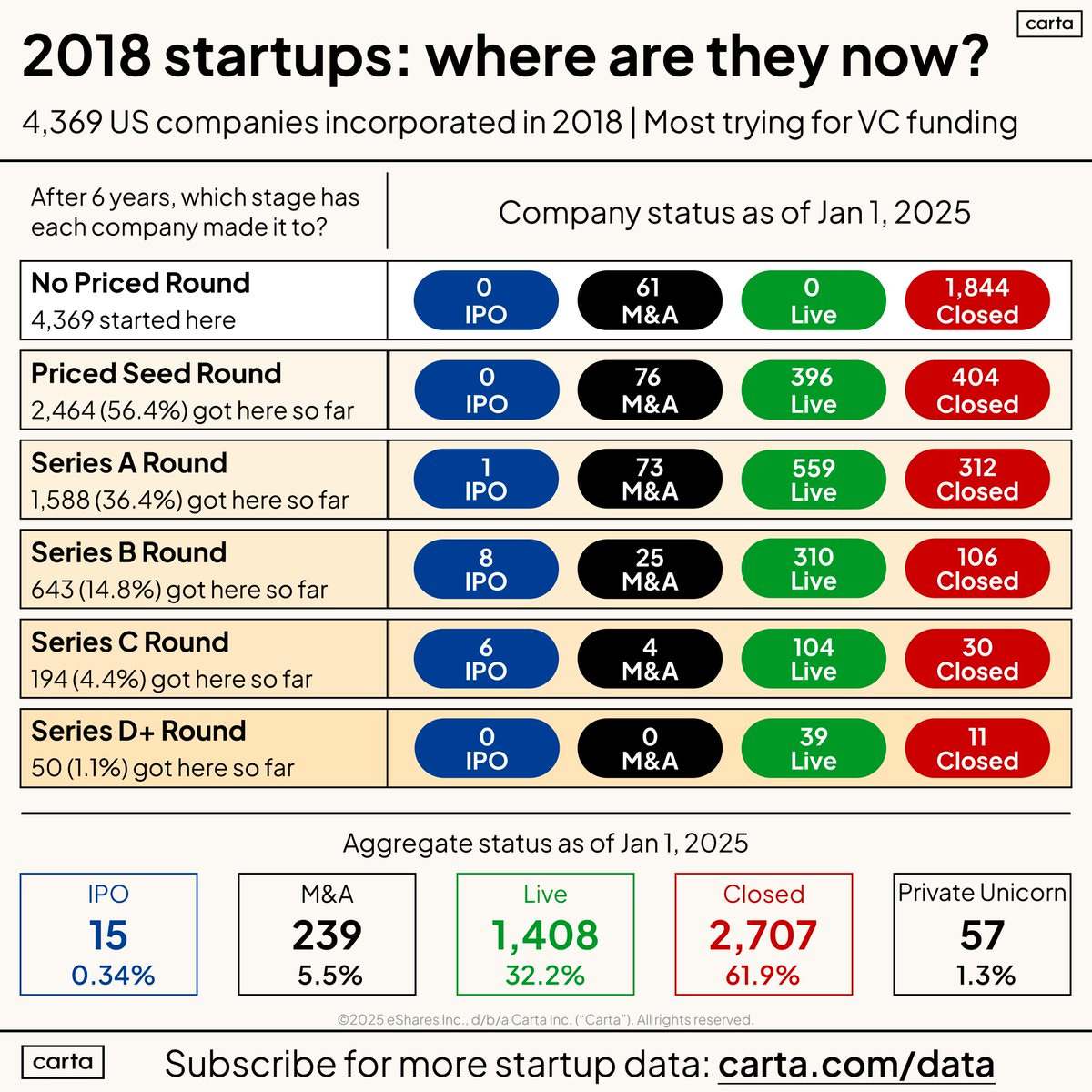

→ Why does it matter? Building a successful company is taking longer than ever. It's a tough journey: only 1% of companies founded in 2018 have reached Series D, and none have gone public yet. For founders and investors, this means planning for longer runways and focusing on sustainable growth. The take from SaaStr’s Jason Lemkin is spot on.

Tweets that stopped my scroll:

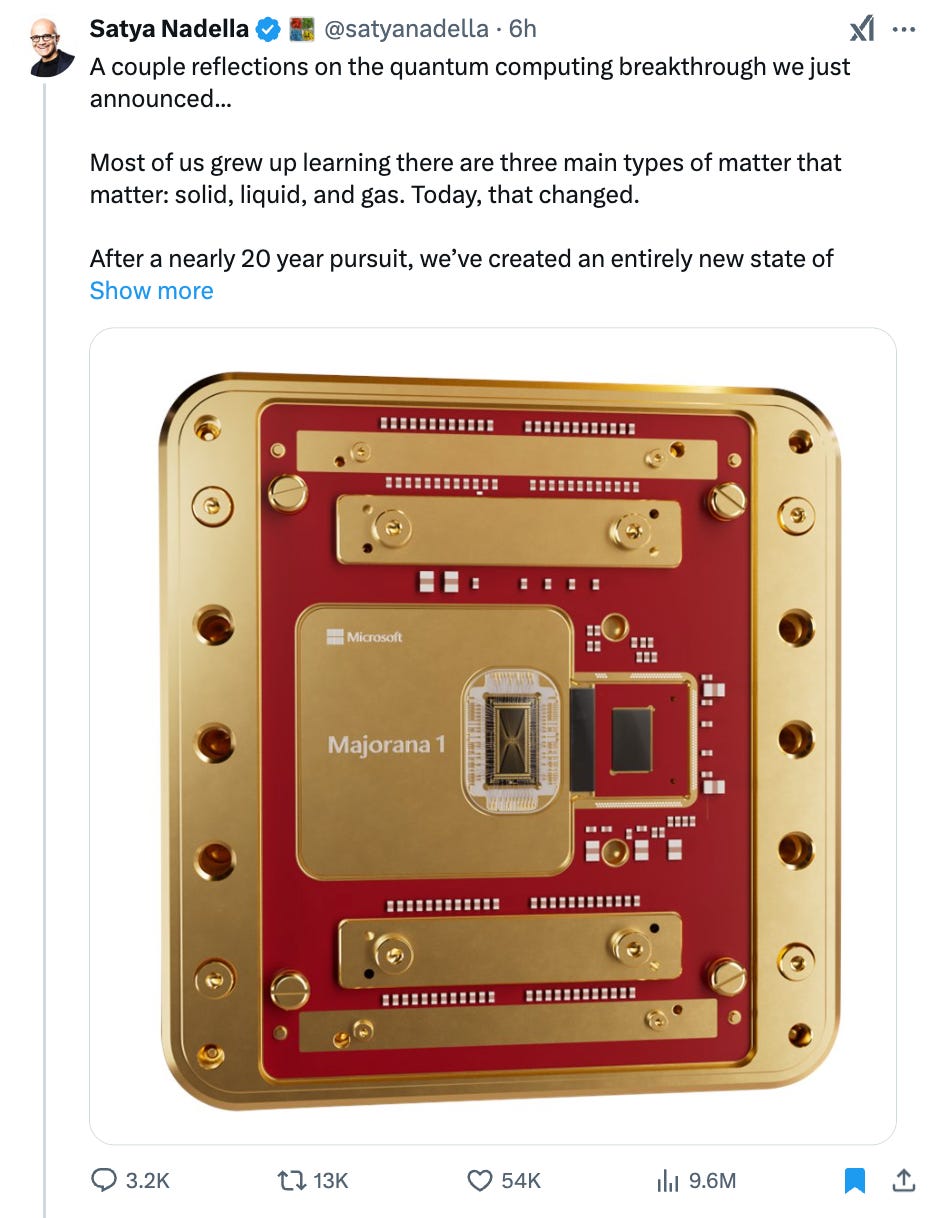

→ Why does it matter? Following Google's Willow chip announcement, Microsoft has made its own quantum leap. Its breakthrough uses topoconductors to create incredibly tiny qubits - just 1/100th of a millimeter. While competitors talk about practical quantum computing as decades away, Microsoft believes its approach could deliver results in years. For the first time, we can see a clear path to a million-qubit processor that could revolutionize computing as we know it.

→ Why does it matter? The AI arms race has a clear media playbook: No major player can let another steal the spotlight for long. When xAI launches Grok 3, the emergency press releases seem to fly: Microsoft announces quantum breakthroughs, OpenAI teases GPT-4.5, and Google unveils its "Agent Army." The timing feels less like coincidence and more like carefully orchestrated positioning.

Worth a watch or listen at 1x:

→ Why does it matter? Technology companies are really in the talent business. There is a massive power law when it comes to talent in this industry. For example, if you get the best taxi driver in New York City instead of an average one, you might get from LaGuardia to Manhattan 30% faster, a ratio of roughly 1.3:1 between the best and the average. In software, the difference is much more pronounced, with ratios more like 50:1 or 100:1. The teams that have the best talent ultimately win. This interview was a great discussion about the importance of talent in the technology industry.

→ Why does it matter? This episode of 20VC drew listen rates 400% above average for good reason. Groq (not to be confused with xAI's Grok) represents a fresh challenge to Nvidia's AI dominance. While everyone focuses on training large AI models, Groq's founder Jonathan Ross - who created Google's TPU - is betting on inference, the actual running of AI models. With companies spending millions on inference costs, this bet on making AI run faster and cheaper in production could reshape the entire AI infrastructure landscape.

Quotes & eyewash:

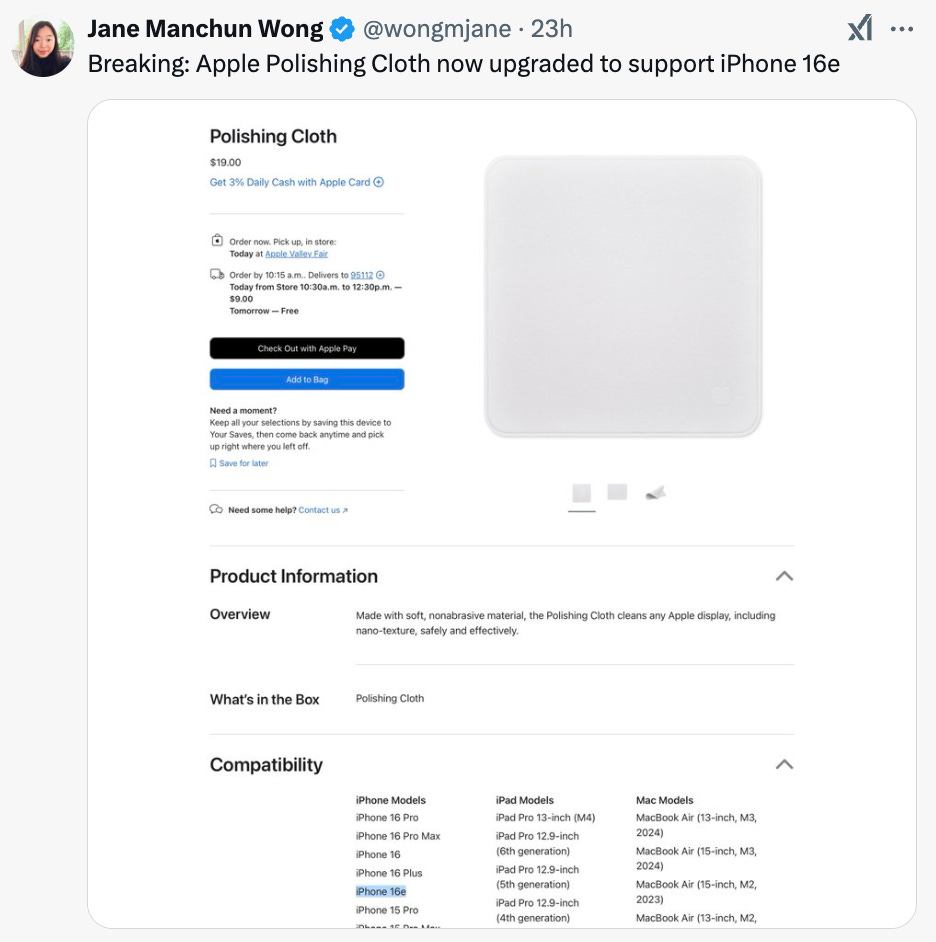

→ Why does it matter? If you haven’t heard about Apple’s infamous $19 polishing cloth—a longtime punchline on the internet—it just got an upgrade. As of this week, it now supports the iPhone 16e. Yes, really.

The mission:

The Wall Street Journal once used ‘Read Ambitiously’ as a slogan, but it became a challenge I took to heart. If that old slogan still speaks to you, this weekly curated newsletter is for you. Every week, I will summarize the most important and impactful headlines across technology, finance, AI and enterprise SaaS. Together, we can read with an intent to grow, always be learning, and refine our lens to spot the best opportunities. As Jamie Dimon says, “Great leaders are readers.”