Reading Ambitiously 2-7-25

Jevons Paradox, Zuck's 1B Digital Assistants, 2025 IPO Candidates, Palantir Earnings, AI Moats, Shopify CEO Tobi Lütke, Replit's AI App Builder and Thomas Watson's Wild Ducks

The Wall Street Journal once used ‘Read Ambitiously’ as a slogan, but it became a challenge I took to heart. If that old slogan still speaks to you, this weekly curated newsletter is for you. Every week, I will summarize the most important and impactful headlines across technology, finance, AI and enterprise SaaS. Together, we can read with an intent to grow, always be learning, and refine our lens to spot the best opportunities. As Jamie Dimon says, “Great leaders are readers.”

Thanks to generative AI and our friends at Google NotebookLM, you can enjoy this week’s Reading Ambitiously as a podcast entirely generated by AI. If you haven’t experienced this technology yet, definitely give this a try!

In the news:

Mark Zuckerberg Says This Will Be a Huge AI Breakthrough in 2025 for Meta Platforms (Keithen Drury, The Motley Fool & Yahoo! News)

→ Why does it matter? The key takeaway from last week’s DeepSeek news? AI is getting cheaper.

Microsoft CEO Satya Nadella referenced Jevons’ Paradox to illustrate the impact of falling AI costs. Economist William Stanley Jevons observed that as a resource becomes more efficient and cost-effective, its demand tends to rise rather than decline.

Applied to AI: as costs drop, usage will skyrocket, accelerating adoption in 2025.

AI players largely fall into two categories:

Model Producers – AI research labs spending hundreds of millions to train and develop foundational models. Think OpenAI, Anthropic, xAI, Meta, and Microsoft. These companies are also Nvidia’s biggest customers, spending a lot of CAPEX and fueling demand for GPUs.

Model Consumers – Software and application companies that integrate AI by accessing foundational models (usually via API).

Are some companies both producers and consumers? Yes — but it’s uncommon and costly. Meta develops Llama (LLM) and embeds it across Facebook (app) and Instagram (app). OpenAI builds GPT-4o (LLM) and deploys it in ChatGPT (app). But for now, most applications with AI-enabled capabilities rely on accessing third-party model producers. Down the road, companies will likely develop their own domain-specific AI models, but today, it’s cost-prohibitive and requires scarce expertise.

Accessing an LLM in your application as a Model Consumer isn’t cheap. Pricing is typically based on tokens—the number of words (or word fragments) sent to and received from the model. The most capable LLMs, such as OpenAI’s o1 reasoning model, are expensive.

OpenAI’s o1 charges $15 per million input tokens and $60 per million output tokens. DeepSeek R1 charges $0.14 per million input tokens (its cache-hit rate; uncached input is listed at $0.55) and $2.19 per million output tokens. On output tokens alone, that is roughly 27 times cheaper, which is why the DeepSeek news was such a big deal.
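To make the gap concrete, here is a minimal sketch that prices the same workload on both models. The per-million-token prices are the ones quoted above; the monthly token volumes are hypothetical numbers chosen only for illustration, and the function and variable names are mine, not from any vendor SDK.

```python
# Prices in USD per million tokens, as quoted in the text above.
PRICES = {
    "o1": {"input": 15.00, "output": 60.00},
    "deepseek-r1": {"input": 0.14, "output": 2.19},
}


def workload_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of sending/receiving the given token counts."""
    price = PRICES[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


# Hypothetical workload (an assumption, not a figure from the article):
# 100M input tokens and 20M output tokens per month.
monthly_input = 100_000_000
monthly_output = 20_000_000

o1_cost = workload_cost("o1", monthly_input, monthly_output)
r1_cost = workload_cost("deepseek-r1", monthly_input, monthly_output)

print(f"o1:          ${o1_cost:,.2f} per month")
print(f"DeepSeek R1: ${r1_cost:,.2f} per month")
print(f"Ratio:       {o1_cost / r1_cost:.1f}x")
```

Under these assumed volumes the o1 bill lands in the thousands of dollars and the R1 bill in the tens, a gap of well over 40x. The exact ratio depends on the mix of input and output tokens, so treat it as directional rather than precise.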

If costs come down, usage goes up, and that usage will come from Model Consumers building applications and agents. Zuck believes digital assistants could be a big driver of that usage, reaching 1 billion users this year. He told Meta employees last week to “buckle up.”

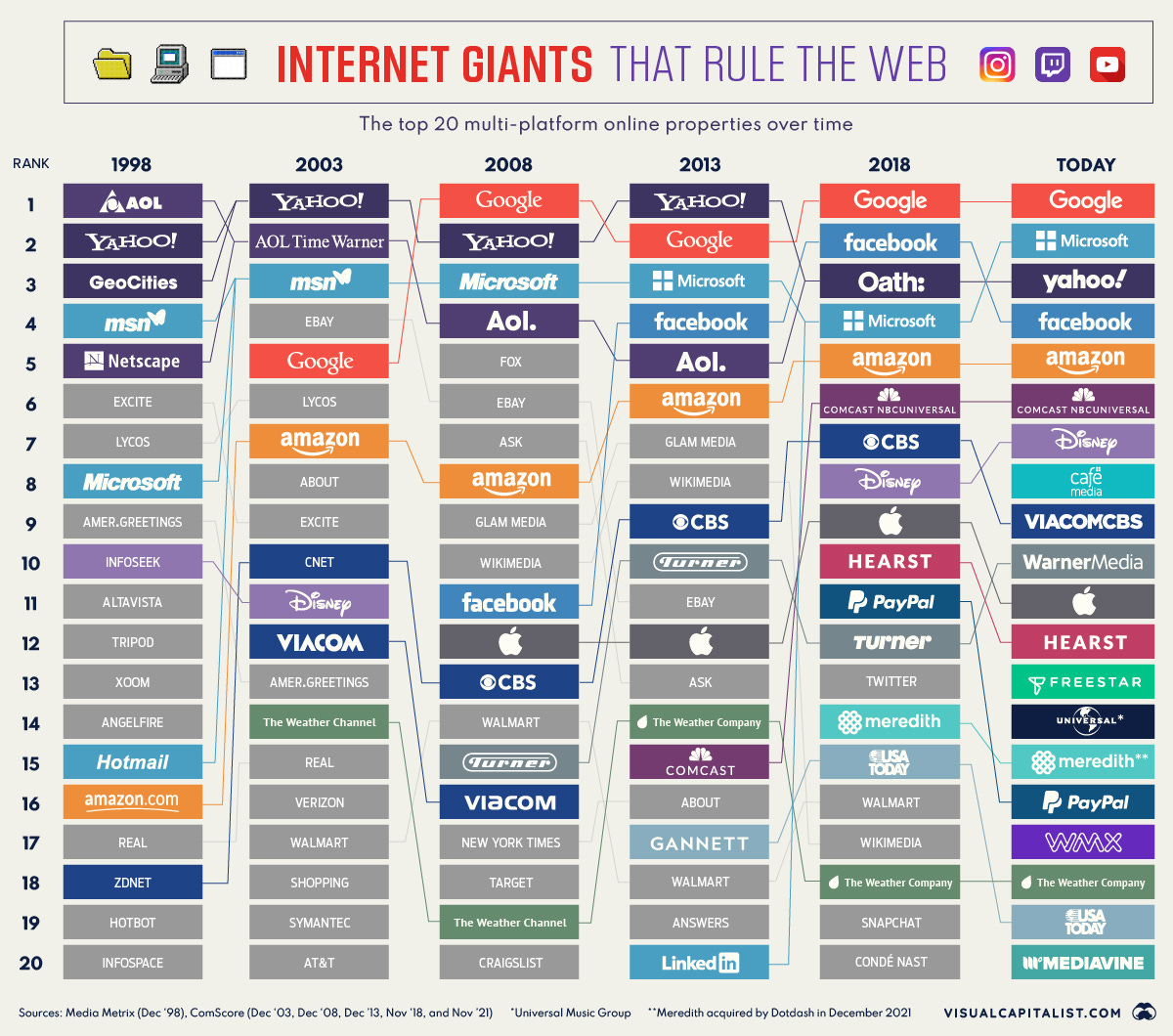

When something becomes cheaper and more accessible, consumption surges. Simultaneously, a paradigm shift in technology often triggers a reordering—of markets, business models, and competitive dynamics all at once.

Why is Zuck convinced this is one of the most important products in history? Because in the last great reordering, the internet, early leaders were not the eventual long-term market leaders. He knows the stakes have never been higher.

Best of the rest:

📈 Here Are All the IPOs Reported to Be in the Works for 2025 - A look at the companies expected to go public this year as IPO activity picks up. -TechCrunch

🗣️ AI Voice Agent Update – 2025 - a16z explores the latest advancements in AI voice agents and their impact on the industry. - a16z

💰 SoftBank Commits to Joint Venture with OpenAI, Will Spend $3 Billion Per Year on OpenAI’s Tech - SoftBank deepens its AI push with a major investment in OpenAI, committing $3 billion annually to its technology. - CNBC

Charts that caught my eye:

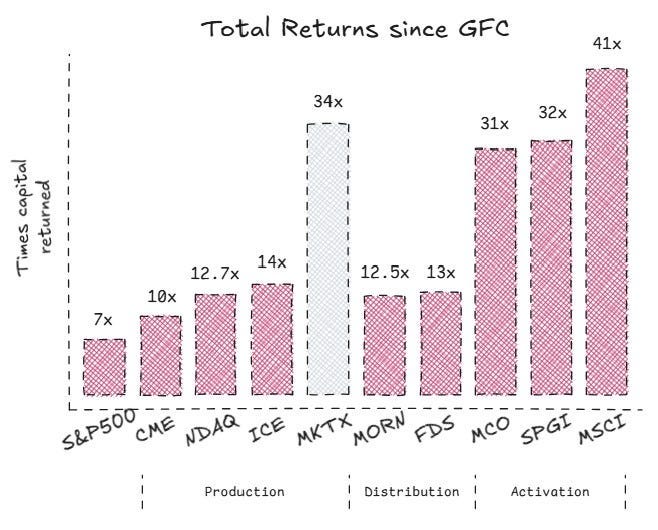

10,000x. Bloomberg’s return and why financial data is so darn lucrative (The Terminalist)

→ Why does it matter? Selling financial data is extremely lucrative. The Terminalist reports that despite our collective intelligence, the human mind has a peculiar blind spot. It simply doesn't grasp the power of compounding.

FactSet has compounded at 19% for 28.5 straight years. An initial investment of $10,000 in FactSet at its IPO in June 1996 would be worth nearly $1.4 million today (year-end 2024). Let that sink in. A similar investment in the S&P 500 would be worth a tad over $130k. FactSet has outperformed the S&P 500 by a factor of 10 since its IPO. TEN!
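If you want to check the compounding math yourself, here is a quick sketch. It uses only the figures quoted above (19% a year, 28.5 years, $10,000 invested) and treats "a tad over $130k" as exactly $130,000, which is my approximation. The helper names are illustrative.

```python
def future_value(principal: float, annual_rate: float, years: float) -> float:
    """Value of a lump sum compounded annually at a constant rate."""
    return principal * (1 + annual_rate) ** years


def implied_cagr(start: float, end: float, years: float) -> float:
    """Constant annual growth rate that turns `start` into `end`."""
    return (end / start) ** (1 / years) - 1


YEARS = 28.5          # June 1996 to year-end 2024, per the text
PRINCIPAL = 10_000    # initial investment, per the text

factset_value = future_value(PRINCIPAL, 0.19, YEARS)

# Assumption: "a tad over $130k" is rounded to $130,000 here.
sp500_rate = implied_cagr(PRINCIPAL, 130_000, YEARS)

print(f"FactSet at 19%/yr: ${factset_value:,.0f}")
print(f"Implied S&P 500 growth rate: {sp500_rate:.1%}")
print(f"Outperformance multiple: {factset_value / 130_000:.1f}x")
```

The takeaway is how small the rate gap looks next to the outcome gap: roughly doubling the annual growth rate produces about ten times the ending value over this horizon.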

→ Why does it matter? Palantir reported earnings this week, with Q4 revenue up 36% YoY to $828M. The company expects full-year 2025 revenue of $3.74B–$3.76B, beating analyst estimates of $3.54B. Palantir is now valued higher than Lockheed Martin, Northrop Grumman, and L3Harris combined, despite generating just 2% of their total revenue. You have to love CEO Alex Karp’s enthusiasm about it!

Tweets that stopped my scroll:

→ Why does it matter? The real competitive moat may not be in the LLM itself but in the wrapper: the specialized UX, fine-tuning, integrations, and proprietary data that make AI useful for specific industries. If foundation models become interchangeable, the companies that build the best distribution, workflows, and ecosystems around them will hold the advantage. Just as AWS, Azure, and GCP built moats around services and infrastructure rather than raw compute, AI wrappers may prove more valuable than the raw models. The battle for AI dominance could shift from model development to application-layer innovation.

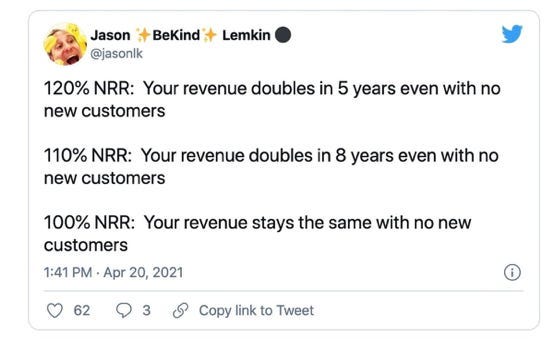

→ Why does it matter? This 2021 Tweet is a keeper. Net Revenue Retention (NRR) measures the percentage of recurring revenue retained from existing customers over a specific period, accounting for expansions, downgrades, and cancellations. An NRR exceeding 100% indicates that revenue growth from existing customers surpasses revenue lost from churn and downgrades. For instance, an NRR of 120% means that $1 of recurring revenue today becomes $1.20 next year, implying that you could double your revenue in roughly four years without acquiring new customers. The key is to consistently deliver innovative capabilities at reasonable costs to your existing customers. The power of compounding!
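Here is a small sketch of the NRR doubling math. It assumes NRR holds constant every year and that no new customers are added, which is a simplification; the function names are illustrative.

```python
import math


def years_to_double(nrr: float) -> float:
    """Years for existing-customer revenue to double at a constant annual NRR.

    `nrr` is expressed as a multiple, e.g. 1.20 for 120%.
    """
    if nrr <= 1.0:
        raise ValueError("Revenue never doubles when NRR is 100% or lower.")
    return math.log(2) / math.log(nrr)


def project_revenue(start: float, nrr: float, years: int) -> list[float]:
    """Revenue from the existing base at the end of each year, year 0 included."""
    return [start * nrr ** year for year in range(years + 1)]


doubling_120 = years_to_double(1.20)
path = project_revenue(1.00, 1.20, 5)

print(f"Doubling time at 120% NRR: {doubling_120:.1f} years")
for year, revenue in enumerate(path):
    print(f"Year {year}: ${revenue:.2f}")
```

At 120% NRR, each dollar of revenue from the existing base crosses the $2 mark between year 3 and year 4. Small changes in NRR move the doubling time a lot, so it is worth rerunning with your own number.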

→ Why does it matter? Replit just made building software even easier. Their cloud-based development environment now lets you describe an app, and AI will generate it for you—handling everything from setup to deployment. No manual configuration, no local installs—just instant coding in the cloud from your iPhone. A glimpse into the future of software development.

Worth a watch or listen at 1x:

→ Why does it matter? Graham Duncan, co-founder of East Rock Capital, joins Patrick O’Shaughnessy for a conversation rich with wisdom and perspective. It’s rare to hear an interviewer who’s such close friends with their guest, and that dynamic makes this a must-listen. Patrick is uniquely positioned to bring out the very best of Graham. Enjoy!

→ Why does it matter? Even if you don’t have time to listen to the full episode, I highly recommend checking out the key takeaways on Lenny’s Substack. My favorite: “As leaders, it’s our job to help others see the best version of themselves—even if it means pushing them outside their comfort zone.”

Quotes & eyewash:

Marc Andreessen explains IBM founder Thomas Watson’s famous “Wild Ducks.”

"When on a roll of any kind, always maintain it as long as possible. Momentum isn't always easy to conjure…" -Rick Rubin