Reading Ambitiously 2.6.26 - Not all software is created equally

The market is trying to re-price roughly $3T of software as if it were.


The big idea: Not all software is created equally

Reading time: 4 minutes


The market’s shorthand is “vibe coding kills software.” There is truth inside the fear. Vibe coding compresses software creation into natural language. Code is cheaper to generate, and substitution gets easier.

But not all software is created equally.

Some software is nice to have. It makes life a little easier. It has that one feature the other system doesn’t. Other software is needed. It runs the business. We rely on it.

So the question is: how do you tell the difference?

Moats with sharks

If the cost of writing code approaches zero, where are the moats? Here are some good questions to ask.

Where does the company keep the “official truth”? When finance, legal, compliance, or a customer challenges something, which system is the final answer? Where is the ground truth?

Which system is trusted to take action? What has the keys to do real work: approve, release, provision, pay, book, ship, or deprovision?

What happens if you try to rip it out? Not “can you migrate the data,” but “does the business still run after it is gone,” and “what breaks on day two?”

Where does work actually start? What is the default place teams go in the first 15 minutes of their day: the queue, the workflow, the command center, the system they live inside?

When it’s wrong, how expensive is wrong? Does failure show up fast, get contained, and get reversed, or does it pollute the business and surface weeks later?

Applying that checklist helps separate software that is easy to recreate from software that is hard to replace.
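One way to make the checklist concrete is to treat each question as a yes/no answer and count the yeses. This is a rough sketch, not a formal model: the field names, the equal weighting, the cutoffs, and the two example systems are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class MoatAnswers:
    """Yes/no answers to the five moat questions (field names are hypothetical)."""
    holds_official_truth: bool        # the final answer when finance, legal, or a customer pushes back
    trusted_to_act: bool              # has the keys to approve, pay, ship, provision, deprovision
    business_breaks_without_it: bool  # ripping it out breaks operations on day two
    where_work_starts: bool           # the default place teams go at the start of the day
    errors_are_expensive: bool        # failures surface late and pollute the business


def moat_score(a: MoatAnswers) -> int:
    """Count how many of the five questions come back 'yes'."""
    return sum([
        a.holds_official_truth,
        a.trusted_to_act,
        a.business_breaks_without_it,
        a.where_work_starts,
        a.errors_are_expensive,
    ])


def classify(a: MoatAnswers) -> str:
    """Assumed cutoffs: 4+ is hard to replace, 2-3 is contested, 0-1 is easy to recreate."""
    score = moat_score(a)
    if score >= 4:
        return "hard to replace"
    if score >= 2:
        return "contested"
    return "easy to recreate"


# Two made-up systems for illustration.
payroll_ledger = MoatAnswers(True, True, True, False, True)
status_dashboard = MoatAnswers(False, False, False, True, False)

print(classify(payroll_ledger))    # hard to replace
print(classify(status_dashboard))  # easy to recreate
```

In practice the questions would not carry equal weight, and the honest answer to several of them is "partly." The point of the sketch is only that the sorting can be done on purpose rather than by gut feel.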

The barbell

Industries tend to move in cycles. First, you win by owning the whole stack, because coordination is hard.

Then the stack breaks into layers, and specialists flourish.

Then coordination becomes hard again. The “middle” gets commoditized, and value concentrates at the ends: big platforms on one side, deep specialists on the other.

A barbell forms.

SaaS is approaching this phase in the cycle, and something similar happened right before the cloud.

Oracle ran the consolidation playbook, buying up many of the pre-cloud market leaders, including PeopleSoft in ERP and HR, Siebel in CRM, and Sun Microsystems.

It was moat construction through scale. Oracle pulled more truth into a single footprint. Once it owned the main systems of record and the workflow, it centralized procurement and renewals, bundled them into enterprise agreements, and let compounding handle the rest.

AI accelerates the same shape. It floods the middle with plausible substitutes, because code is cheap and interfaces are easy to copy.

And value concentrates at the ends.

On one end are systems that hold ground truth and are trusted to take action. On the other are specialists that handle the messy parts of a domain where “mostly right” is not enough.

The middle is the dangerous place to be: software that is neither the source of truth nor trusted to act, and not protected by hard-earned expertise. Expect more clones, bundling, consolidation, and churn, and less pricing power.

Why it matters

AI is not killing software. It is forcing a natural evolution we’ve seen before.

The debate is no longer whether something can be built. It is going to get built.

And if you’re a software company building it, or you’re thinking about vibe coding an alternative to what you have today, ask the moat questions:

Is this the system that owns the truth? Is it where we decide who is allowed to act, and where that action is taken? What breaks if we switch? What happens if the data in it is wrong? If our regulators call, is this the system we look at?

In a software-abundant world, those answers decide who compounds, who commoditizes, and who gets acquired.

Best of the rest:

🦀 OpenClaw Shows What Personal AI Assistants Should Be – Federico Viticci burned through 180 million tokens testing an open-source assistant that controls his smart home and apps and can even improve itself. Runs on a Mac mini. – MacStories

💰 Nvidia’s $100B OpenAI Bet Gets Complicated – Jensen Huang called the deal “nonbinding” and criticized OpenAI’s business discipline, though he’s now walking it back. The stock dipped 1.1% on the uncertainty. – CNBC

💸 Anthropic Eyes $350B Valuation – The Claude maker is planning an employee tender offer at a staggering valuation, signaling just how hot the AI race remains. – Bloomberg

📈 Time’s Up for SaaS: Grow Faster or Die – A blunt reminder that in a slower, pickier market, “good retention” is not a strategy. SaaS winners are redesigning go-to-market, pricing, and product velocity to earn growth again. – Meritech

🚀 Musk Merges SpaceX and xAI in $1.25T Deal – The combined company will build data centers in space to solve AI’s massive power demands. xAI is currently burning $1B per month. – TechCrunch

Charts that caught my eye:

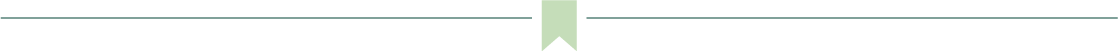

→ Why does it matter? An LLM census that was outdated the second it was printed. We’re looking at 150+ foundation models released in 2025 alone, spanning 30+ organizations from Google and OpenAI to Alibaba and DeepSeek. The sheer density tells you everything: we’ve moved from a world of scarce AI capabilities to abundant, commoditized intelligence. Notice the mix of open-weight (yellow) and closed (orange) models. That’s the real story. The open-source ecosystem (Kimi, Qwen, Llama, DeepSeek, Mistral) is now matching proprietary players release-for-release. For builders, this means unprecedented choice. For incumbents, it means defensibility lives in distribution and data, not model weights.

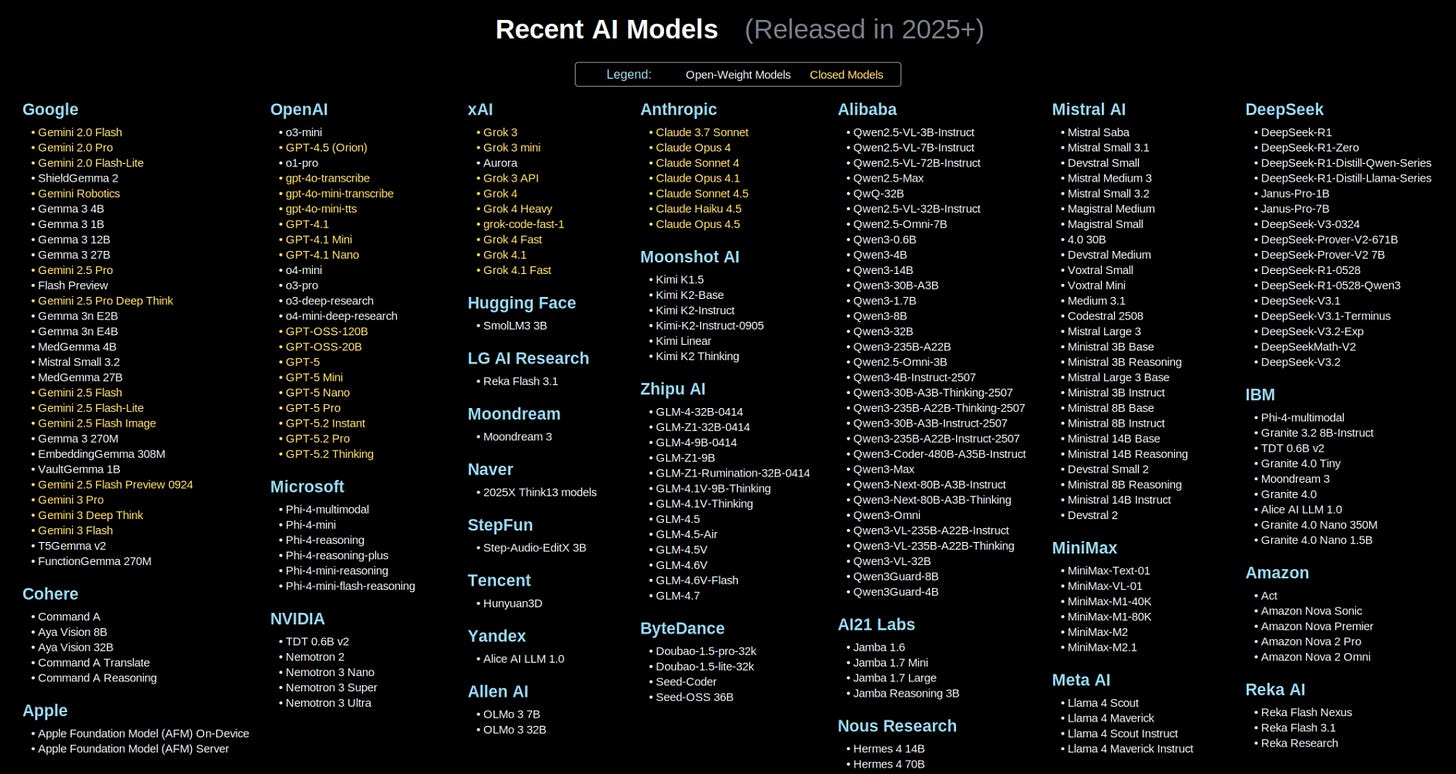

→ Why does it matter? Claude Code now accounts for roughly 4% of all public GitHub commits. That’s AI becoming a meaningful fraction of global code production. The inflection point around October 2025 and the near-vertical climb since suggest we’re watching the adoption curve go exponential, not linear. Where will we be in 1 year? 20%? 30%?

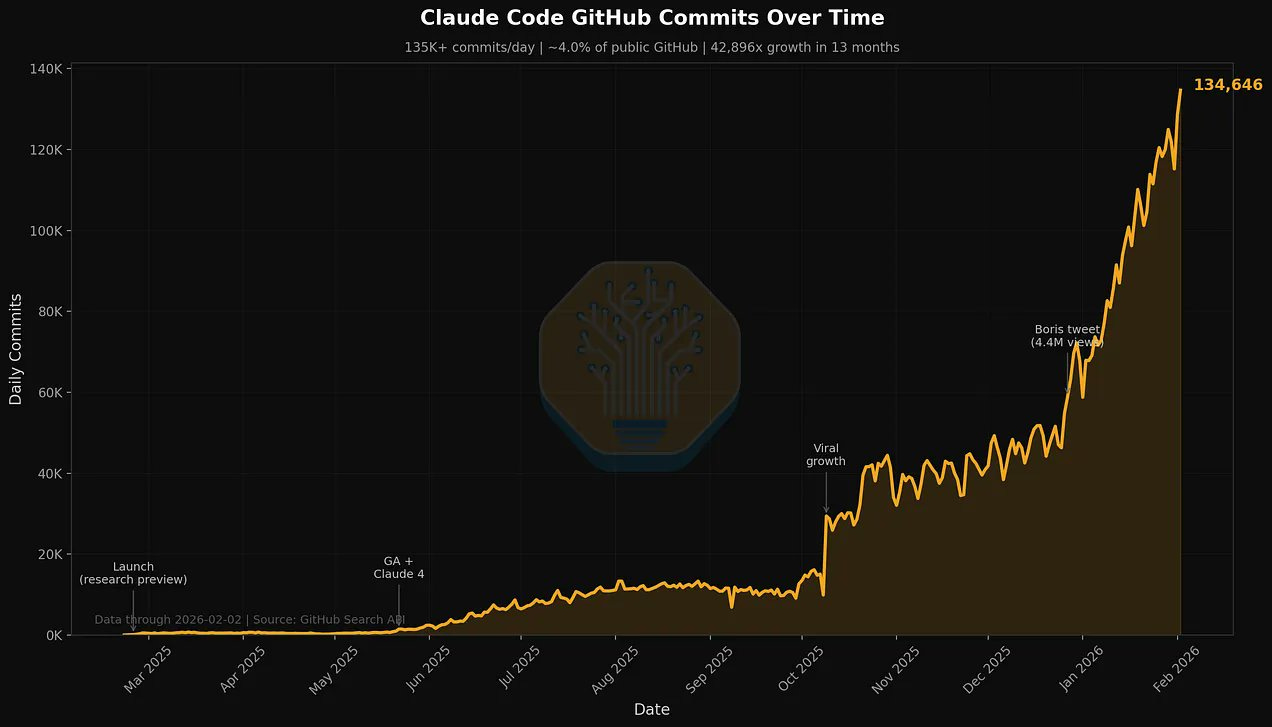

→ Why does it matter? This Bain framework maps AI’s threat to SaaS. The existential risk isn’t Scenario 5 (total compression). It’s Scenario 4: AI captures the lion’s share of value while SaaS becomes commoditized plumbing. Notice the bar heights in Scenarios 3 and 4: total spending stays robust, but AI keeps growing while SaaS shrinks. That’s the barbell. Value flows to the AI layer and the systems of record underneath. The middle gets squeezed.

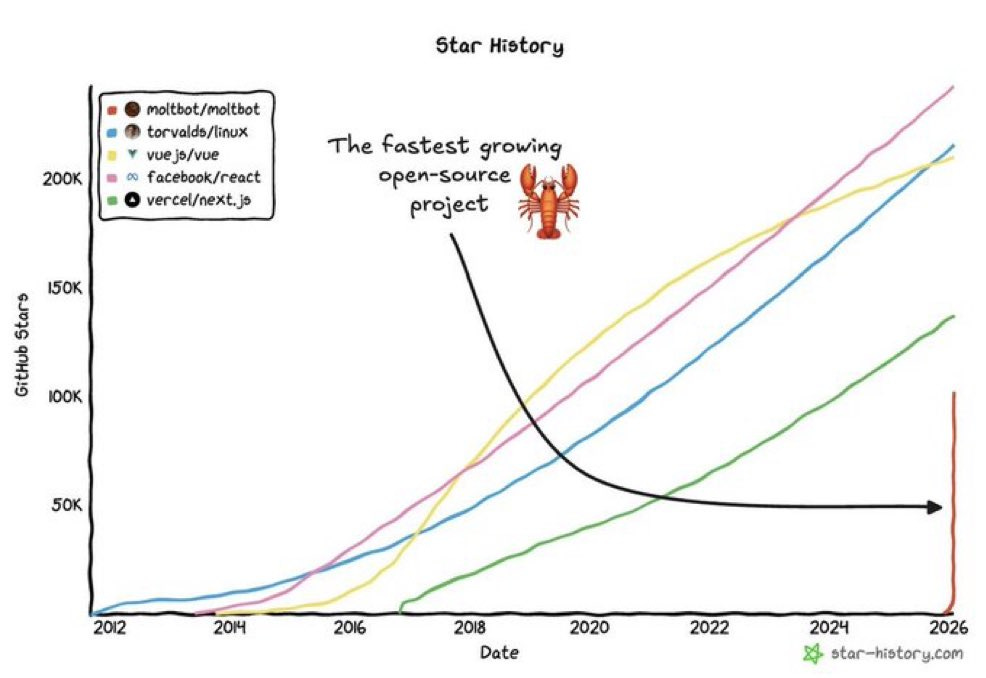

→ Why does it matter? That near-vertical orange line is OpenClaw (formerly Clawdbot and Moltbot), growing from zero to ~100K GitHub stars in a matter of months. For context: React, Vue, and the Linux kernel took a decade to reach similar numbers. The established projects show consistent, organic growth curves. OpenClaw’s trajectory looks nothing like that. We’re going to need a new name for hockey stick growth!

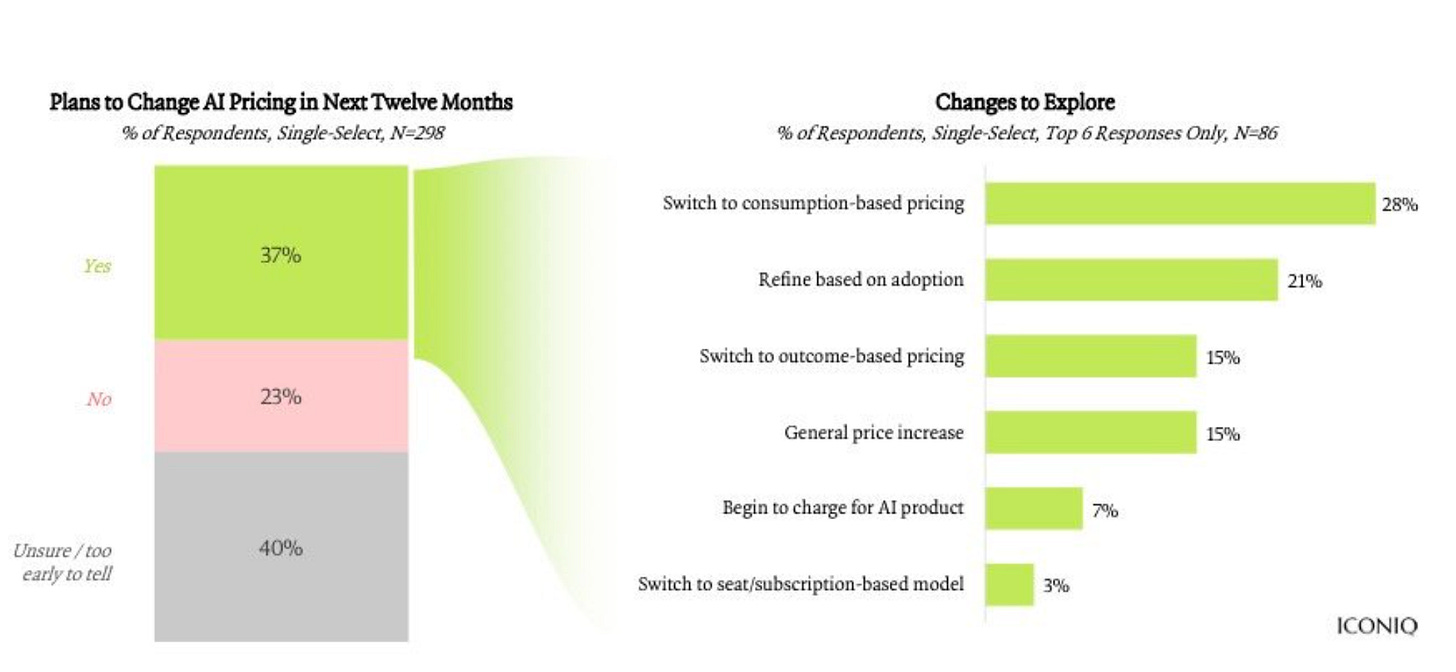

→ Why does it matter? The AI pricing model is in flux: 37% of companies plan to change their approach in the next year, and the top choice is consumption-based pricing (pay for what you use). That’s a direct response to the cost unpredictability of AI workloads. Traditional seat-based SaaS pricing assumed predictable per-user costs. AI breaks that model. A single power user can burn through 100x the compute of a casual one. Companies are waking up to this mismatch, and the shift to consumption and outcome-based models signals something bigger: we’re moving from “how many people use it” to “how much value does it create.”
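A quick back-of-the-envelope sketch shows why the power user breaks seat pricing. Every number here is made up for illustration (the seat price, the per-unit cost, the usage levels, and the metered price are assumptions, not figures from the chart), and amounts are in cents to keep the arithmetic exact.

```python
# All figures are hypothetical and expressed in cents per month.
SEAT_PRICE_CENTS = 5000       # flat price per user under seat-based pricing
COST_PER_UNIT_CENTS = 25      # vendor's compute cost per unit of AI usage
PRICE_PER_UNIT_CENTS = 40     # metered price per unit under consumption pricing


def seat_margin(units_used: int) -> int:
    """Vendor margin on one user when revenue is a flat seat price."""
    return SEAT_PRICE_CENTS - units_used * COST_PER_UNIT_CENTS


def consumption_margin(units_used: int) -> int:
    """Vendor margin on one user when revenue scales with usage."""
    return units_used * (PRICE_PER_UNIT_CENTS - COST_PER_UNIT_CENTS)


casual_units = 20
power_units = casual_units * 100  # the "100x" power user

print(seat_margin(casual_units))         # 4500
print(seat_margin(power_units))          # -45000
print(consumption_margin(casual_units))  # 300
print(consumption_margin(power_units))   # 30000
```

Under these assumptions the flat seat earns money on the casual user and loses ten times as much on the power user, while metered pricing keeps the margin positive at both ends. The trade-off is that the vendor earns far less from light users, which is part of why the shift is contested.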

Tweets that stopped my scroll:

→ Why does it matter? Moonshot AI’s founder doing his first on-camera appearance to announce Kimi K2.5 signals the Chinese AI labs are shifting from “build in silence” to “compete for global attention.” And they’re doing it with open source. Kimi K2 is already one of the best open-source models available, competitive with closed-source alternatives from major American labs. This is a strategic choice: open source builds developer ecosystems, accelerates adoption, and sidesteps the trust deficit Chinese companies face abroad. The playbook OpenAI wrote was founder-led narratives. The Chinese labs are adding a twist: founder-led narratives plus open weights.

→ Why does it matter? Anthropic is going all-in on brand marketing. These are cinematic, emotionally resonant ads showing real humans using Claude. And they had me laughing out loud. It’s a signal that the AI race is shifting from “best benchmarks” to “best brand.” When your product is a conversation, the story you tell about it becomes the product.

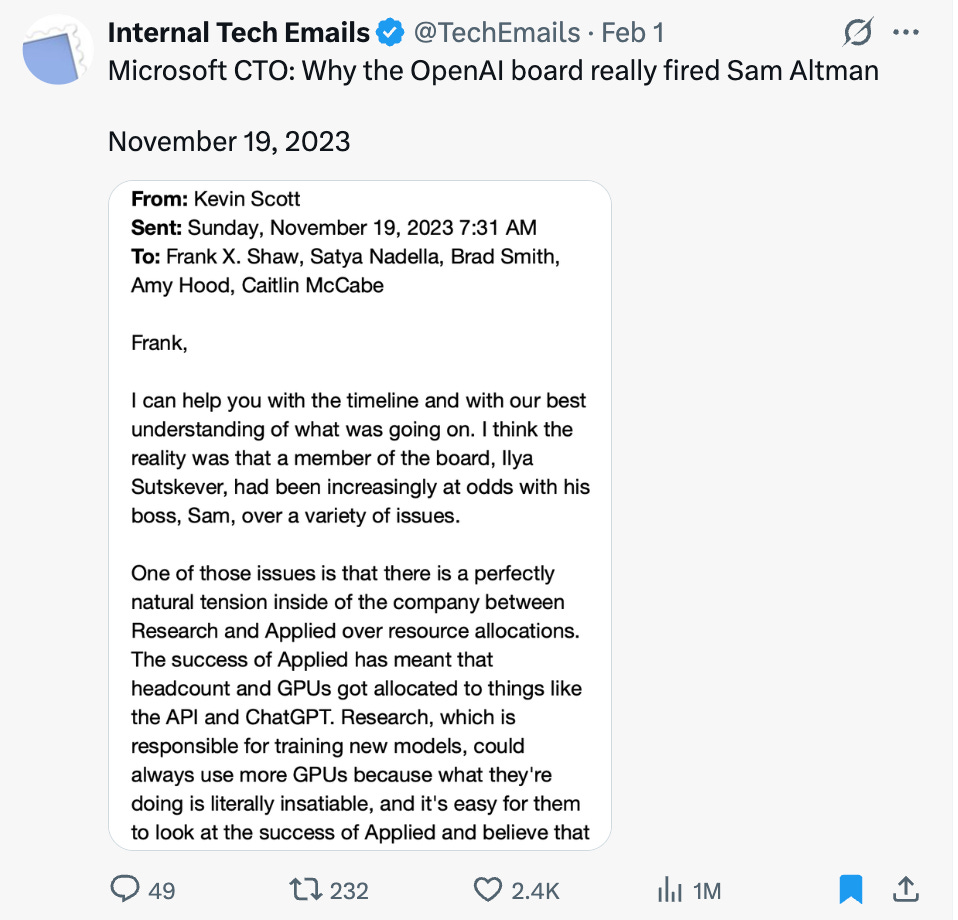

→ Why does it matter? This leaked email from Microsoft’s CTO reveals the internal theory at the time: Ilya Sutskever’s ouster of Sam Altman wasn’t about safety concerns or board governance. It was about resource allocation. Research wanted more GPUs for training frontier models. Applied (ChatGPT, the API) was eating them all. The “AI safety” framing that dominated headlines may have been a cover for a much older conflict: who gets the compute.

Worth a watch or listen at 1x:

→ Why does it matter? Peter Steinberger is the creator of OpenClaw, the open-source AI assistant that’s been taking over your feed. But before that, he built PSPDFKit into a $100M+ company. This talk captures the mindset behind both: you can just do things. Want to build an open-source assistant that runs your apps? You can just do that. Want to cold-email a Fortune 500 executive? You can just do that. Most barriers are imaginary, and most gatekeepers aren’t actually guarding anything. OpenClaw is the latest proof the philosophy works.

→ Why does it matter? Gokul Rajaram built the ad products that turned Google, Facebook, and Square into revenue machines. This wide-ranging conversation covers how AI is fundamentally changing product development: faster cycles, smaller teams, and the death of the traditional PM role. Patrick is so good at getting to the topics that matter. Rajaram’s mental models are worth the full listen.

Quotes & eyewash:

“Everyone must choose one of two pains: the pain of discipline or the pain of regret.” - Jim Rohn

→ Why does it matter? Choose wisely!

The mission:

The Wall Street Journal once used “Read Ambitiously” as a slogan, but I took it as a personal challenge. Our mission is to give you a point of view in a noisy, changing world. To unpack big ideas that sharpen your edge and show why they matter. To fit ambition-sized insight into your busy life and channel the zeitgeist into the stories and signals that fuel your next move. Above all, we aim to give you power, the kind that comes from having the words, insight, and legitimacy to lead with confidence. Together, we read to grow, keep learning, and refine our lens to spot the best opportunities. As Jamie Dimon says, “Great leaders are readers.”

Disclaimer: This content is for informational purposes only and does not constitute financial, investment, or legal advice. Readers should do their own research and consult with a qualified professional before making any decisions.