Reading Ambitiously 3.20.26 - Prompt to Harness: The Future of Software Development

Prompt, context, and harness engineering are best understood as cumulative ideas. They build on one another. Together, they are beginning to define the future of software development.

The big idea: Prompt to Harness: The Future of Software Development

Reading time: 4 minutes

Over time, engineering teams automate more of the mechanics of the job and reallocate their effort to where human-only skills matter most.

There was the era of the mainframe, when the work sat closer to the machine. Then came waterfall, which formalized sequencing and handoffs. Then agile, which shortened release cycles. Today, many teams operate in a world of continuous delivery and, increasingly, continuous deployment, where software ships constantly and iteration happens in near real time.

Each shift changed software engineering. More importantly, each shift changed the craft and where engineers focused their effort.

What teams did not do was stop engineering. They stopped spending time on the parts that had been abstracted away. The locus of value kept moving.

That is what makes the rise of agentic coding feel less like a break from history and more like the next turn in a familiar story.

From Prompt to Harness

The first phase of this AI cycle was prompt engineering.

If we ask the model better questions, we tend to get better answers. Prompting quickly became an important skill for getting useful results from AI. Guides emerged. Websites helped people write better prompts. You can even ask an LLM to help you write one.

But as our ambitions expanded, we moved from one-off outputs to real systems. It became clear that a well-written prompt could still yield weak results if the model lacked the right information at the right time.

Enter context engineering.

In addition to clever instructions, we learned that you also need to protect the context window. Think of context as the model’s memory at runtime. What should the model see? When should it see it? And how do we avoid wasting this precious resource?

A great deal of innovation has gone into addressing context rot, something we wrote about back in January. Tools, MCP, skill libraries, orchestration, and RAG are all, in one way or another, attempts to improve the quality of context.

This was an important deepening of the craft.

Prompting did not go away. It got wrapped inside a growing set of skills and disciplines. We learned that asking well still mattered, but so did supplying the right context, in the right sequence, inside a finite window.
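
To make the "finite window" idea concrete, here is a minimal sketch of one way to protect it: rank candidate snippets and keep only what fits a token budget. Everything in it is an assumption for illustration. The `Snippet` structure, the relevance scores, and the one-token-per-word estimate are hypothetical stand-ins, not how any particular tool works.

```python
from dataclasses import dataclass


@dataclass
class Snippet:
    """A candidate piece of context (hypothetical structure for illustration)."""
    name: str
    text: str
    relevance: float  # assumed to come from some upstream ranking step


def estimate_tokens(text: str) -> int:
    """Crude stand-in for a real tokenizer: roughly one token per word."""
    return len(text.split())


def pack_context(snippets: list[Snippet], budget: int) -> list[Snippet]:
    """Greedily keep the most relevant snippets that fit in the token budget."""
    chosen: list[Snippet] = []
    used = 0
    for snippet in sorted(snippets, key=lambda s: s.relevance, reverse=True):
        cost = estimate_tokens(snippet.text)
        if used + cost <= budget:
            chosen.append(snippet)
            used += cost
    return chosen


candidates = [
    Snippet("style_guide", "use snake case for all function names", 0.4),
    Snippet("failing_test", "test_login expects status 200 but got 500", 0.9),
    Snippet("old_changelog", "release notes from several years ago " * 10, 0.1),
]
packed = pack_context(candidates, budget=20)
print([s.name for s in packed])  # the long, low-relevance changelog is left out
```

A greedy pass like this is the simplest possible policy. Real systems typically layer retrieval, summarization, and ordering on top, but the constraint is the same: a fixed budget and a choice about what earns a place in it.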

Now the discipline is expanding again.

As the unit of work shifts from answering a question to completing a task over time, the burden moves from the prompt itself to the system around it. Once agents begin taking on longer-running work across multiple steps, tools, and context windows, we also need a harness.

Enter harness engineering: the tooling, practices, tests, guardrails, and feedback loops that keep the system reliable over time.

Once agents are doing meaningful work across files, tools, and dependencies, the quality of the outcome depends less on any single prompt and more on the system wrapped around the model. The harness keeps it on track while the work unfolds.
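
A toy version of that wrapper might look like the sketch below: generate, check, feed the failures back, and stop after a bounded number of attempts. It is a sketch under stated assumptions, not a real harness. `fake_agent` is a hypothetical stand-in for a model call, `run_checks` stands in for a real test suite, and the attempt cap is the only guardrail shown.

```python
from typing import Callable, Optional

# All names here are hypothetical. A real harness would call a coding agent
# and a real test runner instead of these in-memory stand-ins.


def run_checks(code: str) -> list[str]:
    """Return failure messages; an empty list means every check passed."""
    namespace: dict = {}
    try:
        exec(code, namespace)  # fine for a toy; sandbox generated code in practice
        result = namespace["add"](2, 3)
    except Exception as exc:
        return [f"error while checking: {exc!r}"]
    return [] if result == 5 else [f"add(2, 3) returned {result}, expected 5"]


def fake_agent(task: str, feedback: list[str]) -> str:
    """Stand-in for a model call: wrong at first, corrected once it sees feedback."""
    if not feedback:
        return "def add(a, b):\n    return a - b\n"
    return "def add(a, b):\n    return a + b\n"


def harness(
    task: str,
    agent: Callable[[str, list[str]], str],
    max_attempts: int = 3,
) -> tuple[Optional[str], int]:
    """Loop generate -> check -> feed back until checks pass or attempts run out."""
    feedback: list[str] = []
    for attempt in range(1, max_attempts + 1):
        code = agent(task, feedback)
        feedback = run_checks(code)
        if not feedback:
            return code, attempt
    return None, max_attempts  # guardrail: give up rather than loop forever


code, attempts = harness("write add(a, b)", fake_agent)
print(attempts)
```

The point of the sketch is where the leverage sits. Improving the outcome here means improving `run_checks` and the feedback it returns, not rewriting the prompt.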

Prompt, context, and harness engineering are best understood as cumulative ideas. They build on one another. Together, they are beginning to define how software gets built going forward.

That matters because some engineering teams may now be able to increase velocity dramatically, but only if they are willing to redefine the job around these new disciplines.

Over a five-month period, a team at OpenAI built and shipped a product with zero manually written code. The repository reportedly reached roughly one million lines. The application logic, tests, configuration, documentation, and tooling were generated by AI. OpenAI has suggested that it was built in roughly one-tenth the time it would have taken to hand-code.

The engineer’s role in that project appears to have been different.

When something failed, the answer was often not to work harder inside the codebase itself. It was to ask what capability was missing, what artifact was unclear, what test was absent, what feedback loop was too weak, and what part of the environment needed to become more legible to the agent.

This begins to look like the work of a harness engineer.

The deeper shift is that engineering judgment moves from isolated acts of code-writing into the design of systems that can generate, test, and improve code over time.

The Future of Software Development

We may not have fully named this phenomenon yet.

Harness engineering is one candidate. Regenerative software is another. The phrase AI software factories gets at part of the same idea, too. But the label matters less than the shift underneath it.

Prompt engineering, context engineering, and harness engineering increasingly look less like isolated disciplines and more like connected skills of the craft.

Intent goes in. Code comes out. Tests push back. Evaluations refine the path. The harness gets stronger. The next cycle improves.

That is why this looks less like an adjunct to software engineering and more like one path along its next evolution. The craft is not being automated away. It is being relocated toward the design and supervision of the systems that produce software.

There is a historical rhyme here.

Earlier eras of industrialization did not simply replace labor with machinery. They reorganized work around process, measurement, and reliability. Something similar appears to be happening in software, and increasingly across knowledge work more broadly.

Each new layer of abstraction has tended to push more human effort toward the place where judgment mattered most.

Prompt engineering taught us to ask better questions. Context engineering taught us to give the model the right information at the right time. Harness engineering is teaching us that reliability and quality come from the system that wraps around the model, as well as from feedback loops that improve over time.

If that framing holds, the leading software companies are unlikely to treat coding agents merely as point tools. They will begin to reinvent their software development lifecycle around these ideas, because this feels less like a break from history and more like the next turn in a familiar story.

Best of the rest:

🧠 Tim Ferriss Says Self-Help Might Be the Problem – After 20 years writing and consuming self-improvement content, Ferriss argues the obsession with fixing yourself creates the very unhappiness it promises to cure. – The Blog of Author Tim Ferriss

🤖 Finance Bros to Tech Bros: Don’t Mess With My Bloomberg Terminal – “Keep your stupid AI off my Bloomberg terminal” is the perfect distillation of this whole fight, because it shows how quickly AI hype runs into the hard reality of entrenched workflows, proprietary data, and fanatically loyal power users. – The Wall Street Journal

⌚ How the Swiss Watch Industry Survived by Selling Identity, Not Accuracy – When quartz made precision a commodity, surviving watchmakers pivoted to luxury branding. The same shift is coming for knowledge work. – Paul Graham

🏦 How a $20B hedge fund turned GPT-5 into a senior analyst – Balyasny built a purpose-built AI research engine, evaluated across 12+ dimensions, that reasons through thousands of documents at institutional scale. – OpenAI

🏗️ AI Is Coming for SAP Before It Replaces It – Legacy systems hold decades of critical business data. AI finally makes them programmable, approachable, and worth the upgrade cost. – a16z

Charts that caught my eye:

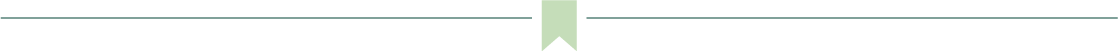

→ Why does it matter? Last year we were wondering whether the revenue would show up. Well, it did. Anthropic added about $6 billion in annualized revenue in February alone, which is larger than Snowflake’s full FY2025 revenue of $3.6 billion and exceeds Databricks’ last reported $4 billion revenue run rate, suggesting this is not a normal software ramp. It is a sign that when AI starts doing real work, not just helping people use tools, revenue can compound at an incredible pace.

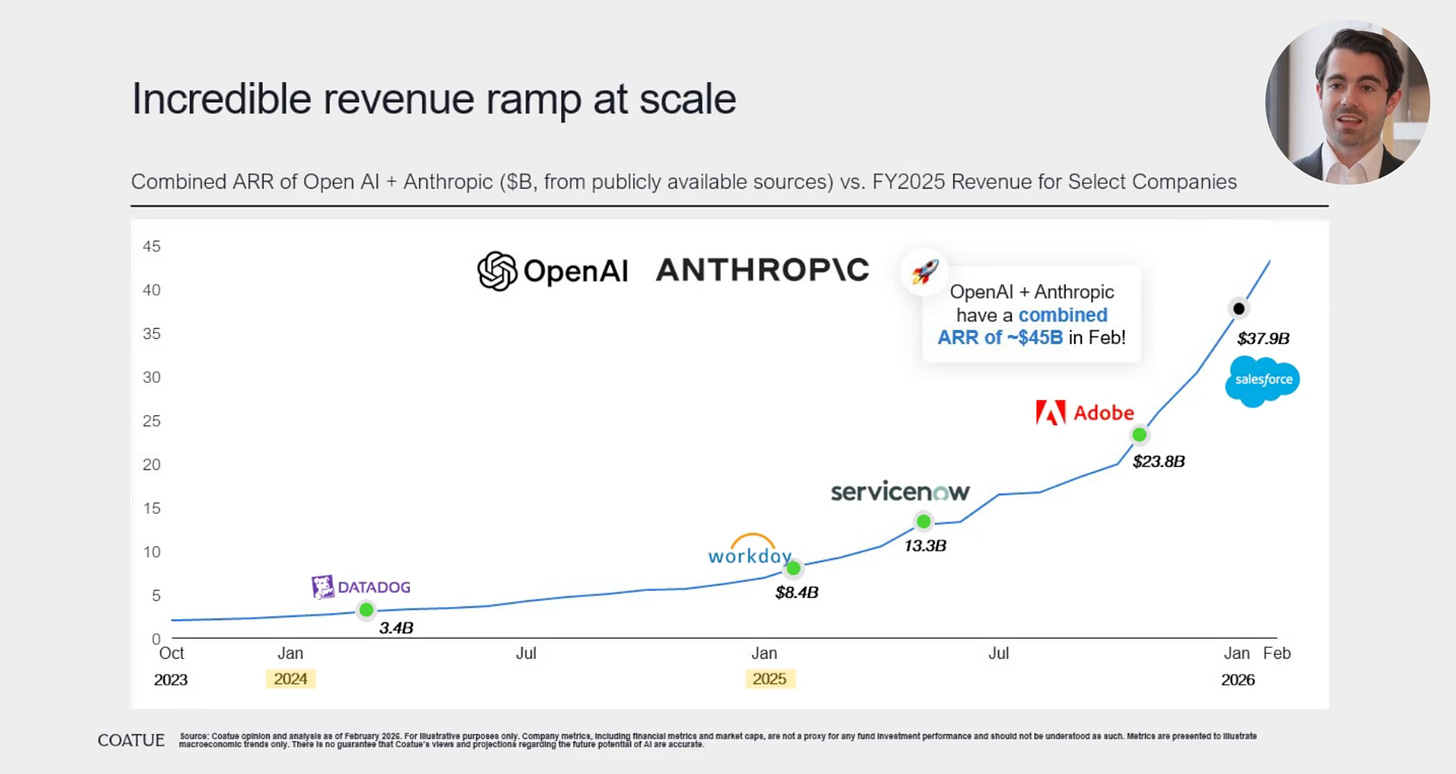

→ Why does it matter? LLMs are starting to tell users to break up, and that should not surprise anyone who understands the data. Train a model on Reddit relationship advice, and you train it on a corpus where a huge share of people default to “leave.” Feed it fifteen years of posts shaped by less patience, less communication, and less willingness to compromise, and the model will learn that exit is the safest, most common answer. The model is doing exactly what the dataset taught it to do.

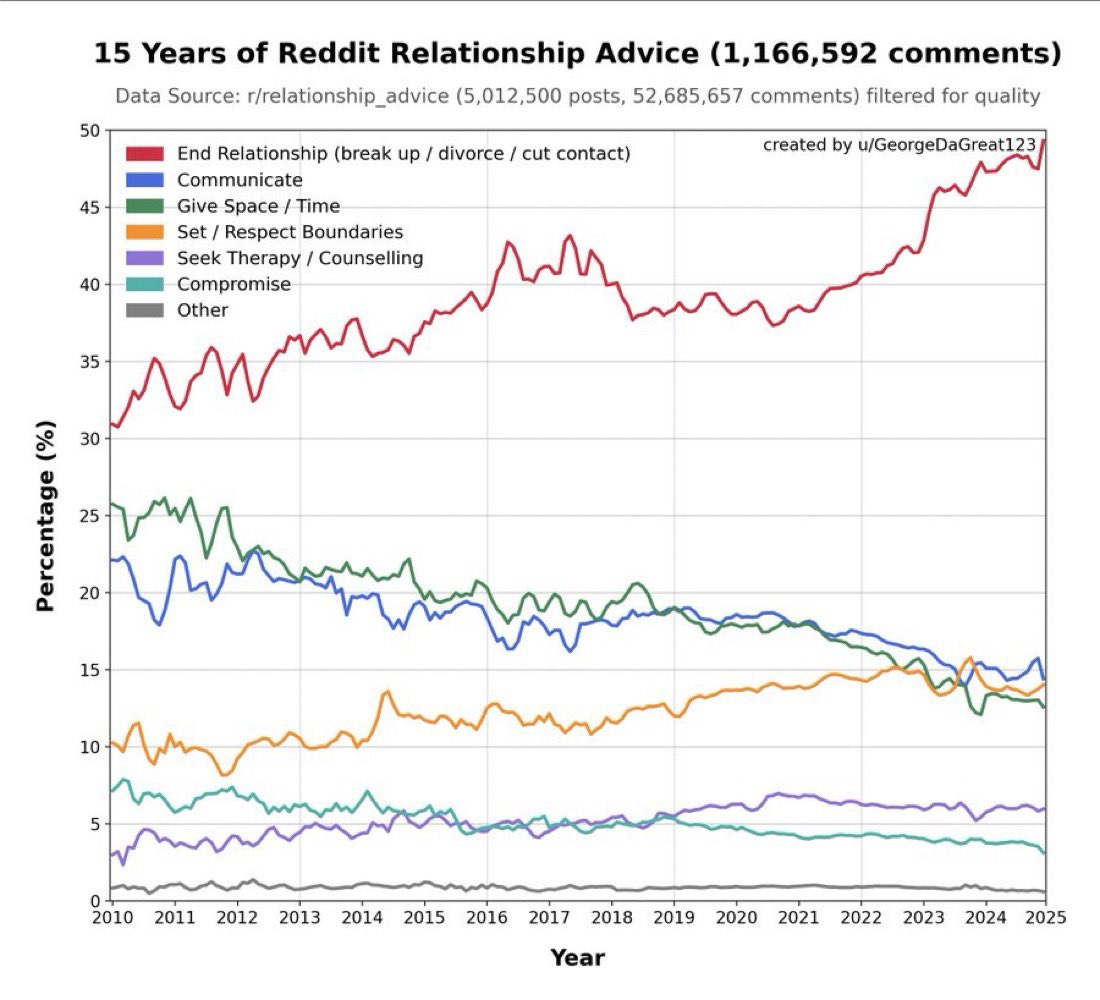

→ Why does it matter? Software has historically given customers the tools to do the job, which kept the market tied to seats, modules, and trained users. Agents change that equation because the software starts doing the job itself. As these systems develop the skills of their human labor equivalents, the addressable market expands from software budgets to labor budgets, and that is why the TAM gets much bigger.

Tweets that stopped my scroll:

→ Why does it matter? Gokul is describing something most companies still treat as a future concept. A customer problem surfaces in Slack, a coding agent cuts a PR, and 15 minutes later, the fix is live. We did it with AmbitiousOS at 35k feet. That is a new operating model. The companies that build and expand their own software factories over the next 24 months will have a structural velocity advantage.

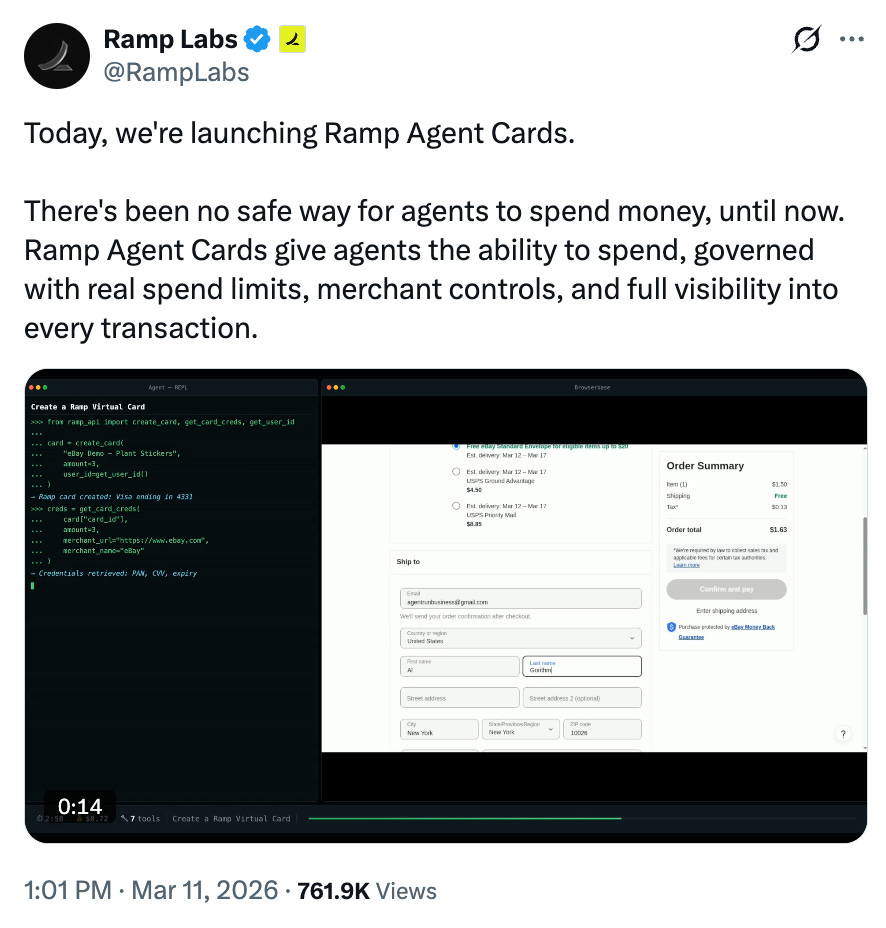

→ Why does it matter? Ramp just handed AI agents a corporate card with guardrails. Until now, a key missing primitive in agentic systems was not intelligence or tools. It was money. Ramp Agent Cards give AI agents spend limits, merchant controls, and full auditability, which means agents can now operate in the real economy without a human co-signing every purchase.

→ Why does it matter? Anthropic just shipped the ability to message Claude from your phone and have the finished work return to your desktop. This is the early architecture of an always-on AI collaborator that works while you sleep, commute, or sit in back-to-back meetings. The race to own the persistent AI relationship, one that knows your context, your files, and your intent across devices, is one of the real platform wars of this era.

→ Why does it matter? The barriers between “I have an idea” and “here is a thing you can click” are collapsing fast. Google Stitch is a new product from Google that helps you get to prototypes quickly.

Worth a watch or listen at 1x:

→ Why does it matter? Dylan Patel breaks down the three physical constraints that will determine who wins the AI era: logic, memory, and power. If you want to understand why AI scaling is not simply a money problem, Dylan Patel and Dwarkesh Patel are two of the brightest minds on the subject. Oh, and they are cousins.

→ Why does it matter? Jensen Huang does not do keynotes, he does roadmaps, and this one covers the full arc from Blackwell to Rubin to Feynman while making the case that AI factories are the defining infrastructure build of our era.

Quotes & eyewash:

“If you really look closely, most overnight successes took a long time.” — Steve Jobs

→ Why does it matter? 10+ years in the making.

The mission:

The Wall Street Journal once used “Read Ambitiously” as a slogan, but I took it as a personal challenge. Our mission is to give you a point of view in a noisy, changing world. To unpack big ideas that sharpen your edge and show why they matter. To fit ambition-sized insight into your busy life and channel the zeitgeist into the stories and signals that fuel your next move. Above all, we aim to give you power, the kind that comes from having the words, insight, and legitimacy to lead with confidence. Together, we read to grow, keep learning, and refine our lens to spot the best opportunities. As Jamie Dimon says, “Great leaders are readers.”

Disclaimer: This content is for informational purposes only and does not constitute financial, investment, or legal advice. Readers should do their own research and consult with a qualified professional before making any decisions.