Reading Ambitiously 3.6.26 - AI Product Strategy for Model Consumers

If you are not training the model, you are renting it. Where durable moats actually live for AI product teams.

The big idea: AI Product Strategy for Model Consumers

Reading time: 9 minutes

Most of our readers are not training AI models. We’re building software that consumes them.

That sounds obvious, but it changes where you place your bets.

If you are not building the brain, your job is to build a system around the brain. The part customers pay for. The part that compounds into durable value. The part that stays defensible when the underlying LLM makes meaningful improvements.

One way to make sense of this world is to split it into two camps: Model Producers and Model Consumers.

Model Producers train frontier models, and increasingly, they are shipping products on top of them. In the American closed-source world, that center of gravity sits with OpenAI, Anthropic, and Google. You can make a case that xAI belongs in the conversation, too.

Model Consumers are the rest of us. Mostly software and services companies that rent intelligence and build products around it.

If the brain is rented, what is the most defensible way to build the product around it?

Let’s talk about the brain first.

The Big 3

You know their apps: ChatGPT, Claude, Gemini.

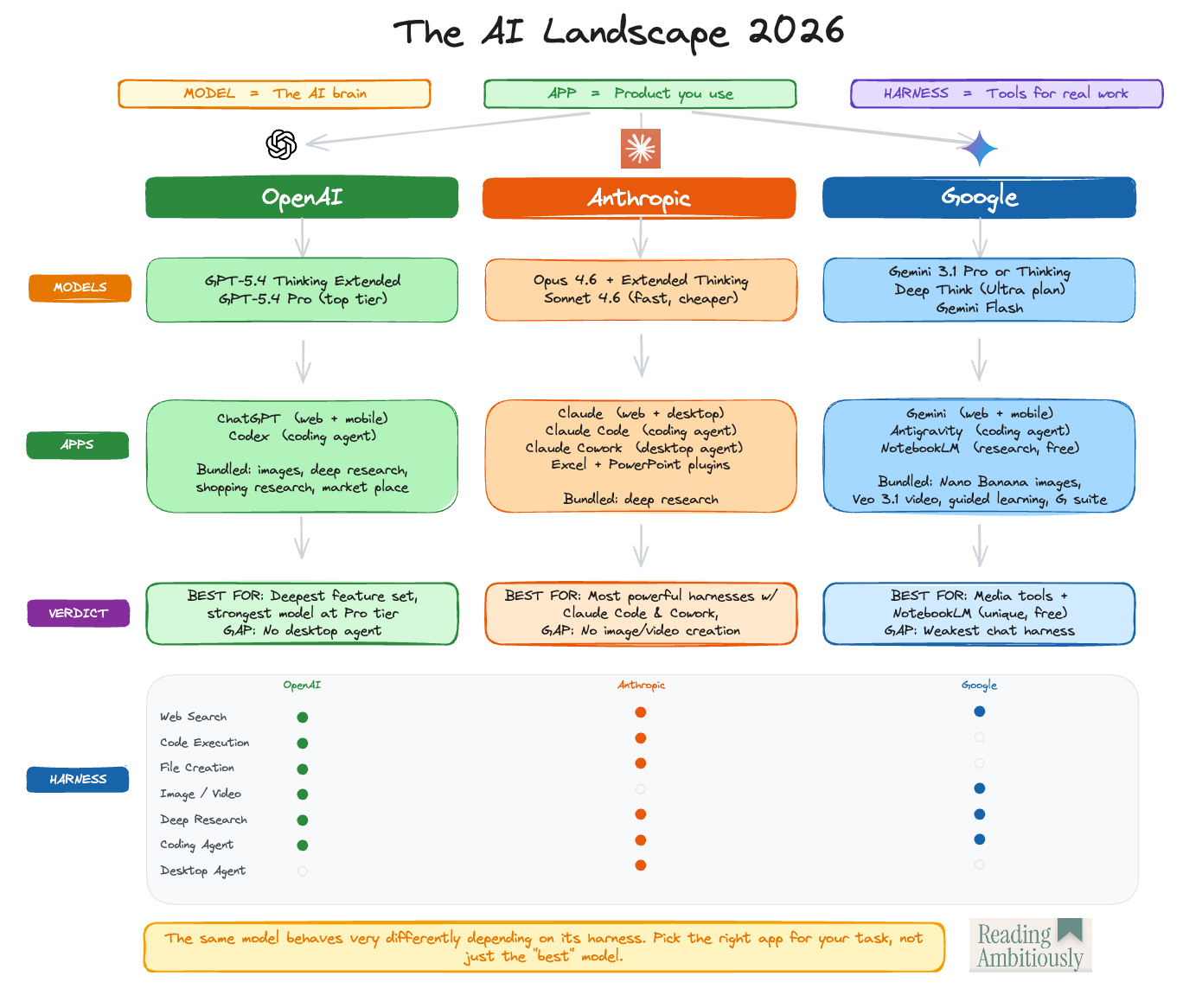

These labs are no longer just building large language models. They are building across three layers: models, apps, and harnesses.

Models are the brains. They determine reasoning, writing, coding, and multimodal perception. They are what benchmarks measure and where labs are in a close race.

Apps are the front door. Apps are the products you use to talk to a model, and how models increasingly complete work for you. More and more, they sit inside productivity suites, developer tooling, and operating systems.

Harnesses are what turn intelligence into work. Apps come with a harness. They give models tools, permissions, file access, logging, memory, guardrails, and the ability to execute multi-step tasks. A harness is the difference between answering a question and completing a job.

If “harness” feels old-fashioned, think of an exoskeleton. You are not adding intelligence. You are channeling the raw cognitive power of an LLM. The harness, or exoskeleton, directs that power toward output you can rely on.

Claude in a chat window is one experience. Claude inside a coding harness is another, because that environment is designed to read a repo, edit files, and run commands. Claude as a desktop co-worker is a third, because it has local file access and the autonomy to complete tasks.
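To make the harness idea concrete, here is a minimal sketch in Python. Everything in it is hypothetical and for illustration only: the `Tool` and `Harness` classes, the `fs.read` and `fs.write` permission names, and `stub_model`, which stands in for a rented LLM and simply returns a fixed plan. It shows three of the pieces named above: tools, permissions, and logging around a multi-step task.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical in-memory "file system" so the sketch is self-contained.
FILES = {"notes.txt": "ship the report"}


@dataclass
class Tool:
    name: str
    run: Callable[[str], str]
    required_permission: str


@dataclass
class Harness:
    tools: dict[str, Tool]
    granted: set[str]
    audit_log: list[str] = field(default_factory=list)

    def call(self, tool_name: str, arg: str) -> str:
        """Run one tool call, enforcing permissions and logging the outcome."""
        tool = self.tools.get(tool_name)
        if tool is None:
            self.audit_log.append(f"unknown:{tool_name}")
            return f"error: unknown tool {tool_name}"
        if tool.required_permission not in self.granted:
            self.audit_log.append(f"denied:{tool_name}")
            return f"error: permission denied for {tool_name}"
        self.audit_log.append(f"ok:{tool_name}")
        return tool.run(arg)

    def run_job(self, plan: list[tuple[str, str]]) -> list[str]:
        """Execute a multi-step plan, one tool call per step."""
        return [self.call(name, arg) for name, arg in plan]


def read_file(path: str) -> str:
    return FILES.get(path, "")


def write_file(spec: str) -> str:
    # spec has the form "path=content" (an assumption of this sketch)
    path, _, content = spec.partition("=")
    FILES[path] = content
    return f"wrote {path}"


def stub_model(task: str) -> list[tuple[str, str]]:
    # Stand-in for a rented model: returns a fixed plan instead of calling an API.
    return [("read_file", "notes.txt"), ("write_file", "out.txt=done")]


harness = Harness(
    tools={
        "read_file": Tool("read_file", read_file, "fs.read"),
        "write_file": Tool("write_file", write_file, "fs.write"),
    },
    granted={"fs.read"},  # this user may read but not write
)
results = harness.run_job(stub_model("summarize my notes"))
```

In this sketch the model proposes both steps, but the harness only lets the read through. The write is refused and both decisions land in `audit_log`. The model supplies the intent. The harness decides what actually happens.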

This matters because Model Producers are not stopping at models. They cannot, because models are quickly becoming commoditized.

They are building apps and harnesses, too, because that is where distribution, retention, and margin live. To quote Jeff Bezos, “Your margin is my opportunity.”

That is why the AI labs are moving into territory that used to be exclusively the domain of software companies.

And if producers are climbing into the app and harness layers, model consumers need to know what they can still own.

Five places defensibility lives

If you are a software company, you are a Model Consumer. You rent the brain. You build around it. This is typically referred to as “wrapping” an LLM.

Here is the simplest stress test.

If your model API disappears, what is your IP?

Here is what I’m seeing.

Integrations: The unglamorous work. Connectors, schema mapping, permissions, and data normalization. In the enterprise, connectors are a product. If your AI cannot reliably access the right data with the right permissions, you have a demo, not a deployable system.

Proprietary data and modeling: LLMs train on public data. What dataset do you own that is not public, and how do you make it legible to the model? This is ontology in practice. A representation of how the business works that the model can reason over safely.

Tools and plugins: These are the proprietary actions you expose to the model: APIs, workflows, and system updates inside your product. Instead of just generating language, the LLM can execute real tasks within your software.

Skills: Reusable workflows, instructions, playbooks. Enterprises do not have one workflow. They have hundreds. A skill library that starts small compounds institutional knowledge over time.

Memory: If the system learns how your team works, replacement gets expensive. Memory turns a generic assistant into something closer to an institutional colleague.

If you want one organizing principle, it is this: everything a Model Consumer builds should ladder to one or more of these five.
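The stress test can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a reference architecture: `Product`, `ModelA`, `ModelB`, the `crm` connector, and the `renewal_brief` skill are all made-up names, and the two model classes just echo the prompt instead of calling a real API. The point is that connectors, skills, and memory live on your side of the interface, so they survive a model swap.

```python
from dataclasses import dataclass, field
from typing import Callable, Protocol


class Model(Protocol):
    """Anything that turns a prompt into text. The rented part."""

    def complete(self, prompt: str) -> str: ...


class ModelA:
    def complete(self, prompt: str) -> str:
        return "A|" + prompt  # echo stub, stands in for one provider


class ModelB:
    def complete(self, prompt: str) -> str:
        return "B|" + prompt  # echo stub, stands in for another provider


@dataclass
class Product:
    connectors: dict[str, Callable[[str], str]]  # integrations you built
    skills: dict[str, str]                       # reusable instructions
    memory: list[str] = field(default_factory=list)

    def build_prompt(self, skill: str, source: str, query: str) -> str:
        data = self.connectors[source](query)
        remembered = "; ".join(self.memory)
        return (
            f"{self.skills[skill]}\n"
            f"Data: {data}\n"
            f"Memory: {remembered}\n"
            f"Task: {query}"
        )

    def run(self, model: Model, skill: str, source: str, query: str) -> str:
        answer = model.complete(self.build_prompt(skill, source, query))
        self.memory.append(f"{skill}:{query}")  # owned by the product, not the model
        return answer


product = Product(
    connectors={"crm": lambda q: f"3 open renewals matching '{q}'"},
    skills={"renewal_brief": "Write a short renewal brief."},
)
first = product.run(ModelA(), "renewal_brief", "crm", "Acme")
second = product.run(ModelB(), "renewal_brief", "crm", "Acme")
```

Here the second call goes to a different model, yet it still receives the same skill text, the same connector data, and the memory written during the first call. If swapping the model class is the only change you need to make, what remains in `Product` is a rough picture of your IP.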

Once you’ve made that call, the product strategy gets clearer.

Four product strategy plays for model consumers

You do not need to be a Model Producer to win. You need a playbook that maps to what you can own. Here are four repeatable, product-level plays. Each is a different way to own an app, a harness, or both, while renting the model.

Universal AI Assistants: The front-door play, owning the app layer. The product wants to sit where users already spend time. Microsoft Copilot is the canonical example because it lives inside Microsoft 365 and draws on organizational context. Glean is another, built around permission-aware connectors and enterprise search. Their defensibility is distribution, integration, and grounding.

AI Agents: The outcome play, owning app plus harness. Agents take responsibility for slices of work. They have tools, entitlements, audit trails, and multi-step execution, and they convert services into software. The closer you get to outcomes, the more pricing shifts from seats to results. There may be a sixth IP opportunity here: training your own small language model.

Enterprise-Grade Controls: Permissions, governance, compliance, observability, auditability. Enterprises will not deploy AI at scale without trust and transparency they can measure and defend. Build it for them.

Embedded AI Features: The compounding play, owning workflow depth inside your existing product. AI becomes a feature set inside existing workflows. Summarization, triage, autofill, exception handling, and natural language query. Many categories will be won by the product that becomes meaningfully easier to use thanks to AI.

Why this matters

Frontier models will keep getting better. At the same time, they will converge and become harder to tell apart.

The labs know this. That is why they are investing so heavily in apps and harnesses. They are moving up the stack into territory that used to be exclusively the domain of software companies.

If you are not building one of these brains, but building around one, you must be explicit about where your differentiation lives.

Decide where your intellectual property sits. Integrations. Proprietary data and ontology. Tools. Skills. Memory. Pick your primary bet or bets and build around them.

Because here is the uncomfortable truth.

As base models improve, some products will wake up and discover that what they thought was a moat was simply proximity to the frontier model. When the underlying intelligence gets meaningfully better, parts of the value proposition compress overnight.

I’m told Larry Ellison once said something to the effect of, “Your company is a feature on my platform.”

In an AI world, that is the risk.

If what you built can be absorbed into someone else’s app or harness the moment the brain improves, you were never building a platform. You were building a feature. And borrowing the intelligence that powered it.

Make sure you own something that survives when the brain gets smarter next week.

Best of the rest:

🏦 Howard Marks No Longer Sitting on the Fence – The Oaktree founder went looking for an AI bubble, used Claude to build his own tutorial, and came back convinced this is real. – Robert Dee ACSI

🤖 There is exactly one way that SaaS can be saved – Eoghan McCabe argues that survival in the age of agents requires radical creative destruction, detailing how Intercom cannibalized its own legacy business, bet the company on AI, and reignited growth by rebuilding around agents from the ground up. – Eoghan McCabe

⚖️ Anthropic Picks a Fight It Cannot Win – Ben Thompson argues Anthropic’s standoff with the Department of War is a principled but ultimately untenable position, disconnected from how power actually works. – Stratechery by Ben Thompson

👨‍💻 We stress-tested GPT 5.4 on the hardest UI on the internet – After months inside legacy insurance portals, Pace found GPT-5.4 can finally click accurately, reason across hundred-step workflows, move fast enough for production, and retain UI memory, signaling that computer-use agents are crossing from demo to deployment. – Jamie Cuffe

Charts that caught my eye:

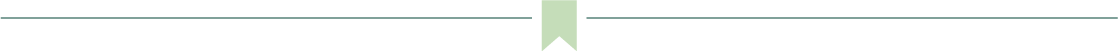

→ Why does it matter? This is a wild chart if true. Anthropic is becoming the dominant player in business AI spend in just 18 months, with Claude Team alone now accounting for nearly half of all enterprise AI chat dollars.

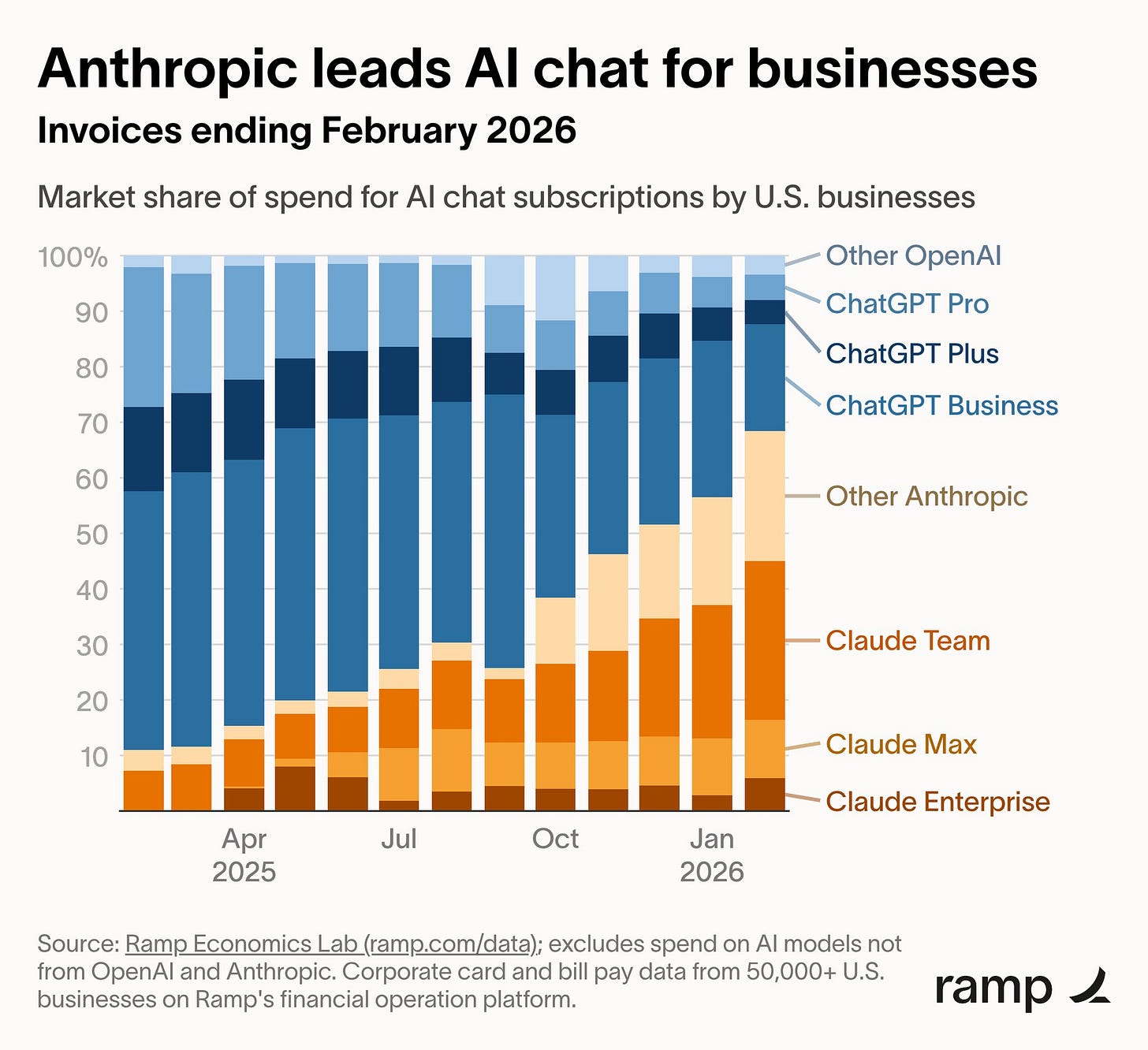

→ Why does it matter? The Financial Times distilled the entire AI debate into a single chart. The punchline: the most likely outcome is not singularity or extinction. It is a modest 0.2 percentage point bump to trend growth, the same steady line that has held since 1870. That is the base case. The dramatic curves, up toward abundance or down toward human extinction, are the tails. Most of the AI conversation lives in those tails. Most of the evidence points to the middle.

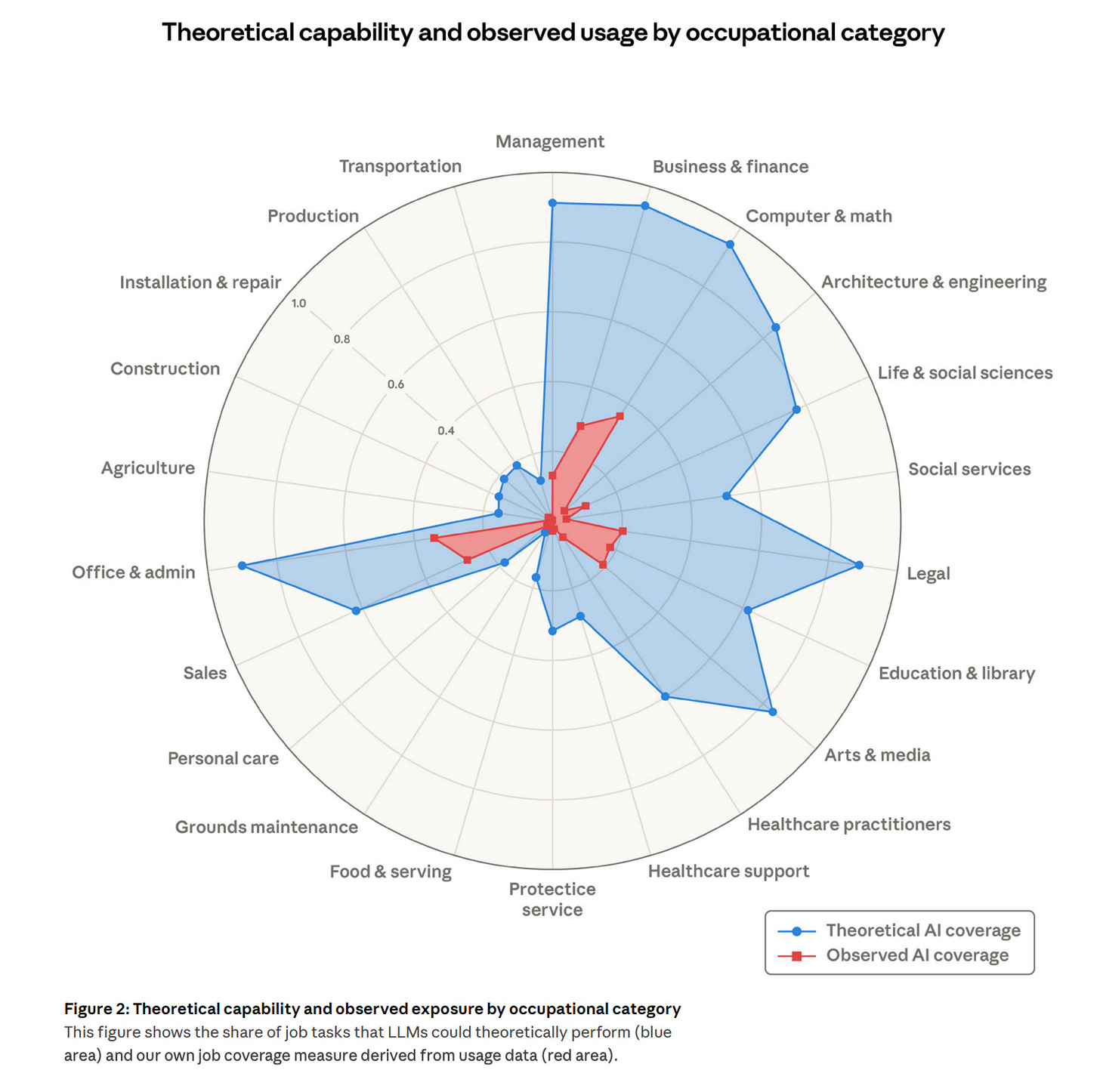

→ Why does it matter? An interesting chart from Anthropic here. The gap between blue and red is the most important story in AI adoption right now. Across nearly every occupational category, AI can theoretically handle a substantial share of job tasks, but observed usage barely registers. Even in the fields leading adoption, legal, software, business, and finance, actual usage sits at a fraction of what is already possible today.

Tweets that stopped my scroll:

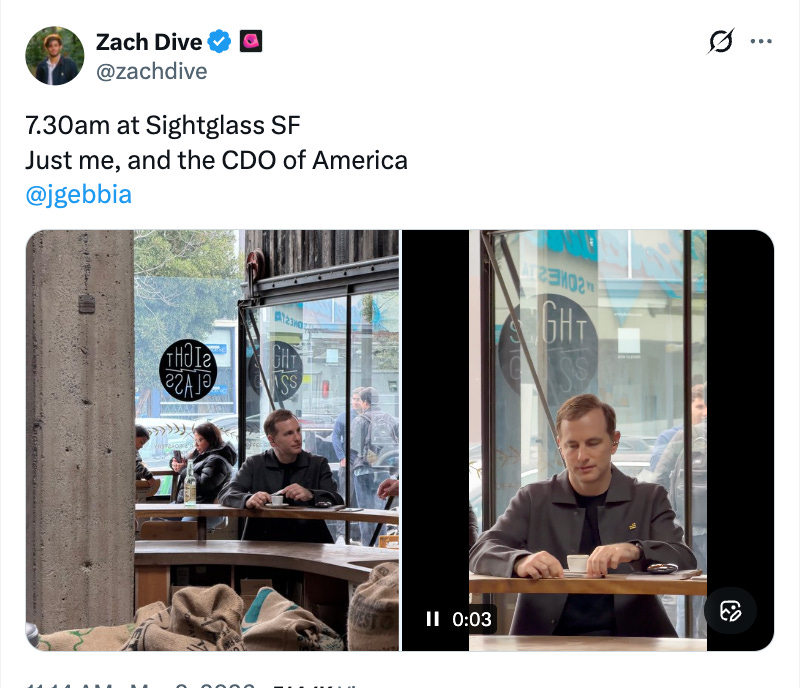

→ Why does it matter? On the evening of the Super Bowl, a rumor circulated about a leaked OpenAI commercial featuring a mysterious new device. I referenced it in that week’s edition of Reading Ambitiously, only to discover there were no confirmed reports of such a spot. For the first time, I felt like I had been pulled into fake news, so I removed the reference before publishing. Then, last week, that same device appeared in Joe Gebbia's hands. Call it conspiracy theory corner if you’d like, but when Jony Ive was at Apple, he prized secrecy and understood that the ecosystem feeds on leaks. The anticipation was part of the product. It is hard not to wonder whether this, too, is part of a similar playbook.

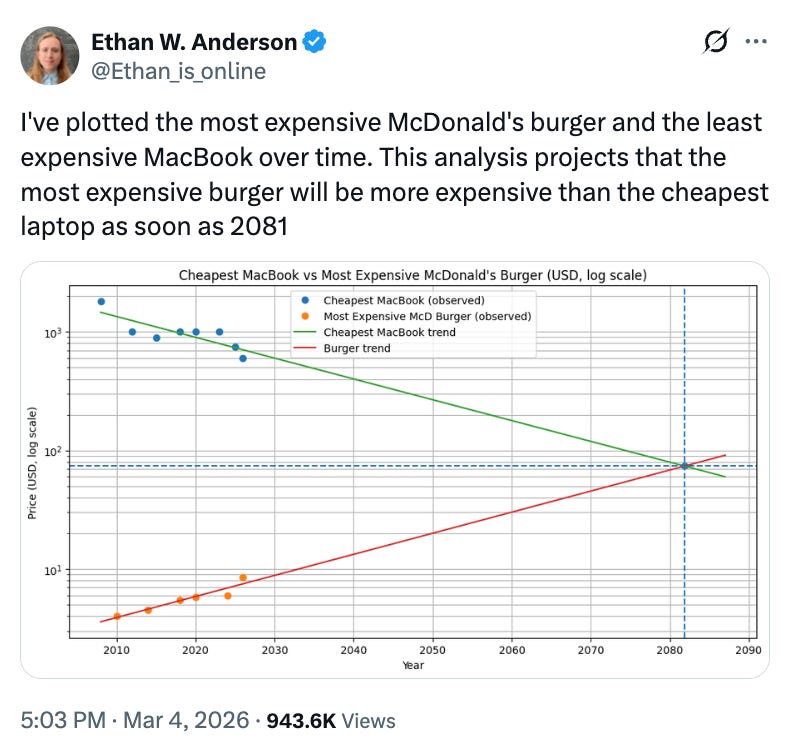

→ Why does it matter? Could a MacBook drop from over $1,000 to a projected $80 by 2081, while a McDonald’s burger climbs toward the same number? The things we build with silicon get cheaper every decade, while the things we grow, staff, and serve in person get more expensive.

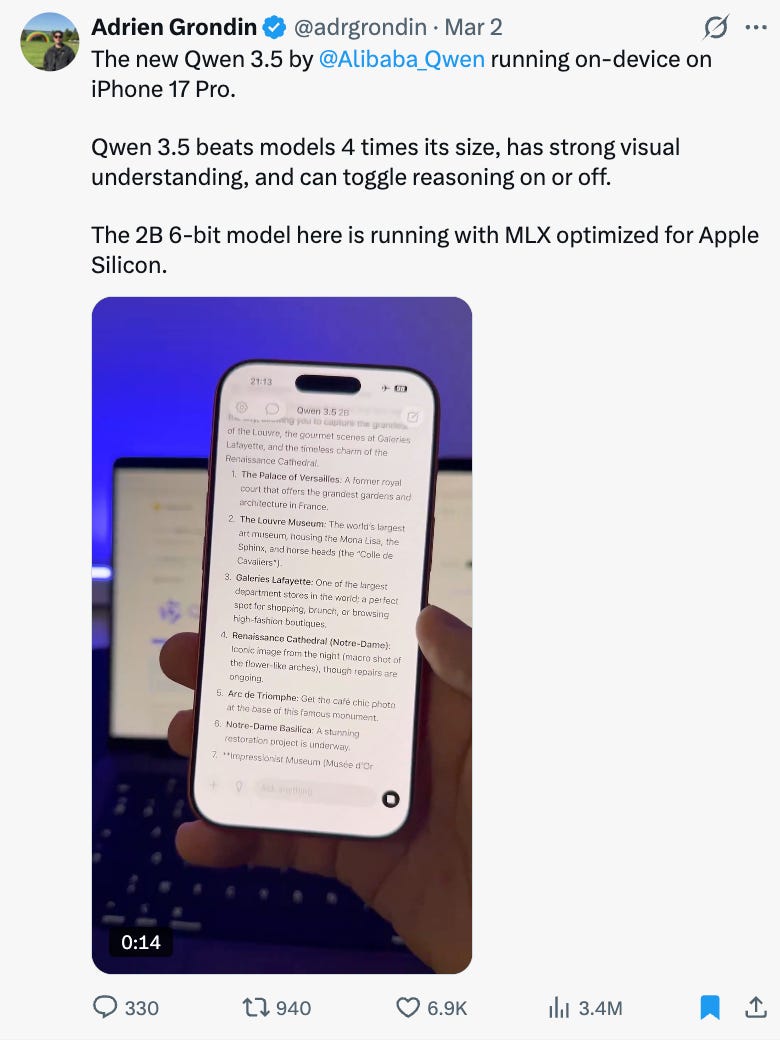

→ Why does it matter? Qwen 3.5 running on-device on an iPhone 17 Pro is a big deal. When frontier-class reasoning runs locally, without a cloud call, without latency, without a subscription, the economics of AI applications change completely. This would finally mean Apple could fix Apple Intelligence! The edge is becoming the new data center, and the phone in your pocket is the new inference engine.

→ Why does it matter? Google just shipped a CLI for its entire Workspace suite. Instead of clicking through polished dashboards, the most productive users are dropping back to the terminal with Claude Code. 2026 feels like the year of CLIs and skills.

Worth a watch or listen at 1x:

→ Why does it matter? Chris Degnan was employee #13 at Snowflake and the only person to hold the CRO seat across all four CEOs and $3.5B in ARR. He refuses to sugarcoat what scaling a sales org actually requires: relentless travel, paranoia about competition, and staying close enough to the front lines that you could close a deal yourself on any given Tuesday. He warns against becoming a “spreadsheet manager,” a CRO who hides behind dashboards until the org quietly stops trusting them.

→ Why does it matter? John Arnold built one of the most successful trading operations in history, then stepped away to focus on philanthropy. In this conversation, he applies that same systems-level thinking to China’s manufacturing velocity, the hard economics of the energy transition, and what AI-driven demand means for physical infrastructure. His observations on China’s industrial capacity alone are worth the time.

Quotes & eyewash:

→ Why does it matter? Jesse Genet has become one of my favorite recent follows. She is an entrepreneur who is now using AI to homeschool her kids, and the way she applies the most advanced tools to personalize their education is so cool and hands-on. This week, my six-year-old and I walked through a lightweight software design session together. A few prompts later, we had built a spelling test game in Claude Code, complete with Trolls. Thanks for the inspiration, Jesse.

The mission:

The Wall Street Journal once used “Read Ambitiously” as a slogan, but I took it as a personal challenge. Our mission is to give you a point of view in a noisy, changing world. To unpack big ideas that sharpen your edge and show why they matter. To fit ambition-sized insight into your busy life and channel the zeitgeist into the stories and signals that fuel your next move. Above all, we aim to give you power, the kind that comes from having the words, insight, and legitimacy to lead with confidence. Together, we read to grow, keep learning, and refine our lens to spot the best opportunities. As Jamie Dimon says, “Great leaders are readers.”

Disclaimer: This content is for informational purposes only and does not constitute financial, investment, or legal advice. Readers should do their own research and consult with a qualified professional before making any decisions.