Reading Ambitiously 4.17.26 - What I am teaching my kids about AI

My first-grader can turn ideas into software faster than I ever thought possible. That has me thinking less about which careers will matter, and more about the street smarts kids will need to thrive.

The big idea: What I am teaching my kids about AI

Reading time: 7 minutes

My first-grader and I have been building software to help her learn to spell and type.

She has been doing more of the heavy lifting than you might think. She is six. Give her a whiteboard and Claude Code, and she can now move from idea to software fast.

What fascinates me most, as her dad, is the combination. A six-year-old’s imagination is already wild. Claude turns that imagination into something tangible.

Today, we have a Trolls-centric, Sing 2-themed spelling game with different modes, levels, and mini-game rewards, including her own version of Doodle Jump. And while we wait for Claude to write the code, what do you think we do? We spell the words. Again and again. She has been getting 10 out of 10 on her spelling tests.

When a close friend recently asked me what I am teaching my kids about AI, I found myself coming back to this story.

Not because I think every six-year-old should be building software. And not because I think AI will make the hard parts of learning disappear. It will not. But something has changed. The distance between imagination and execution is shorter than it used to be.

The edge may belong less to the strongest or the smartest, and more to the children who learn how to respond to change without losing themselves, ask better questions, and turn ideas into something real.

That is what I find myself wanting to reinforce at home.

Not a fixed view of which job titles will matter most. Not a prediction about which degrees will age best. Something more basic. A responsible relationship with powerful technology. Character. Curiosity. Communication. Resilience. Judgment.

The new street smarts.

A responsible relationship

I spend a lot of time thinking about what a responsible relationship with technology actually looks like.

With phones and social media, the parental instinct is delay. Hold the line. Buy more time. As a founding member of the Wait til 8 pledge at our daughter’s school, I know that instinct well. It makes sense to me.

But AI feels different.

You may be able to delay a smartphone. I doubt you will be able to delay AI in the same way. AI will not live in one app. It will show up in the software our kids use at school, the devices in our homes, the cars we drive, and the tools they use for work. Software is already everywhere. AI is going to spread through that same surface area.

Which means the job is not just delay. It is preparation.

And preparation, at least to me, does not mean making every decision for them. It means giving them age-appropriate room to make decisions, feel the weight of those decisions, and learn from the outcomes. That is how street smarts are built. Not through lectures alone. Through giving it a go. Through small choices, some good and some bad, while the stakes are still low enough for a parent to help them make sense of what happened.

Too often, what we call protection is really substitution. We make the decision for the child, and then wonder why judgment never strengthens.

I keep coming back to the idea of street smarts. My mom has them. She grew up on the South Side of Chicago. Street smarts meant knowing how to read a situation before it turned on you. Keep your head up. Know who you are with. Pay attention to tone. Leave early when something feels off.

Our kids need a modern version of that.

Not fear. Not techno-doomerism. Good judgment.

They need to know when a confident answer is still wrong. They need to know what information should stay private. They need to know when a tool is helping them think, and when it is doing the thinking for them. They need to know when speed helps, and when speed hides a mistake. They need to know when to close the laptop and ask a human being.

I am nowhere near a complete list. But a few traits keep bubbling to the top, mostly because they help kids build judgment.

The new street smarts

Character.

Every morning on the drive to school, we walk through the Dad Rules. Be kind. Remember people’s names. Treat others how you want to be treated. Do the right thing when nobody is looking.

I ask them because repetition matters. I also ask them because no technology will ever remove the need for character. Powerful tools raise the stakes. AI can help you move faster. It can also help you cut corners, hide bad intent, and avoid responsibility.

Before I care what my kids can do with a tool, I care who they are when they use it.

Curiosity.

A few weeks before we built the spelling game, my oldest walked into my office and pointed at the little door in the ceiling.

“What is that?”

“The attic,” I said.

“Can we go up there?”

“Absolutely. Right now.”

I dropped what I was doing and grabbed the ladder.

Curiosity has a short half-life if adults keep putting it off. Later today. This weekend. Not now. Do that often enough, and a child learns that wonder belongs behind more practical things.

I do not want to teach that lesson by accident.

The world their generation inherits will not hold still for long. The kids who do well in it will be the ones who stay open long enough to notice what changed, ask what it means, and try something new.

Communication.

Speak well. Write clearly. Listen closely. Say what you mean.

More and more, the interface is natural language. The person who can describe what they want, frame the problem cleanly, and hear what is missing has leverage.

My daughter was not just learning words. She was learning how to describe what she wanted to Claude Code. She was learning how to point to an idea that did not yet exist and make it legible enough to build.

There is nothing soft about that skill.

Resilience.

The happy story about AI is that you type a prompt, get a miracle, and move on with your life.

That has not been my experience. The model still gets things wrong. The first version still disappoints. Building software is still messy.

That is part of why I like making things with my kids instead of just handing them polished software. They get to see the real process. You try something. It fails. You revise. You try again. The point is not frustration for its own sake. The point is learning not to fall apart when the first pass is bad. In fact, it’s even better if you can start to love the “bad.”

Curiosity gets you started. Resilience gets you through the broken middle, or what I called The Stall a few weeks ago.

Knowing which question to ask.

For a long time, a lot of value came from knowing how to answer hard questions with scarce technical skill.

AI is changing the economics of answering questions.

One of the most honest reactions I have seen to recent progress in AI came from someone who had built part of his identity around being very good at math. The models started solving problems that once felt like the province of a tiny elite. What hit him was not just surprise. It was grief.

If your whole identity rests on being the person who can produce the answer, the ground may start to feel shaky. If it rests on knowing what matters, which questions are worth asking, and where to point your attention, you stand on firmer ground.

That is what I saw on the whiteboard with my daughter.

She was not demonstrating mastery of software engineering as a formal discipline. She was doing something more basic, and maybe more durable. She was noticing what she liked. She was imagining what could be better. She was helping shape the thing before it existed. And asking the questions.

That skill will age well.

Why it matters

I am not an absolutist about any of this. I am not an expert on how the next twenty years unfold. I am still making sense of it myself.

But I am starting to know what I want to reinforce in my kids while the ground is moving.

I want them to build. I want them to ask questions. I want them to speak clearly. I want them to treat people well. I want them to stay curious long enough to notice what others miss, and resilient enough to keep going when the first version does not work.

I also want to give them enough agency to feel the consequences of their decisions while the stakes are still manageable. That is how judgment gets built.

I want them to understand that they are more than any one skill the market happens to prize at a given moment. Titles change. Tools change. Status changes. The deeper work is learning how to adapt without losing yourself.

That is what I am teaching my kids about AI.

Not a theory of the labor market. Not a prediction about which degrees will win. Certainly not fear.

Something more basic.

A responsible relationship with powerful tools. Character. Curiosity. Communication. Resilience. Judgment. The instinct to ask better questions.

Those, to me, are the new street smarts.

Best of the rest:

💸 Beyond the Sky - Jeffrey Yan’s Hyperliquid story shows how a brutally focused team can turn crypto market structure, community distribution, and product obsession into a startup so efficient it makes traditional notions of scale look outdated. - Colossus

🤖 Sam Altman May Control Our Future—Can He Be Trusted? - This New Yorker profile captures the growing tension at the heart of the AI era: one of the most consequential technologies in the world may be shaped by a leader whose ambition, judgment, and governance still inspire deep unease. - The New Yorker

⚙️ How to get your company AI pilled - Ramp’s internal playbook is a vivid case study in what real AI adoption looks like when leadership sets the expectation, removes friction, and treats company-wide experimentation as a cultural advantage instead of an IT project. - X

🛠️ Eight years of wanting, three months of building with AI - Lalit Maganti offers one of the most credible firsthand accounts yet of what AI coding actually does well, what it quietly breaks, and why the real leverage comes from pairing agentic speed with human taste, architecture, and discipline. - Lalit Maganti

Charts that caught my eye:

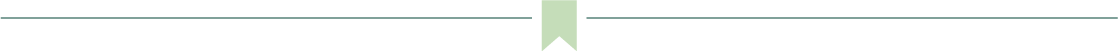

→ Why does it matter? Nearly half of all private equity deals now target software and tech services, a share that has doubled over the last 15 years and is at an all-time high. This is also what has people concerned about private credit. If AI compresses the value of legacy SaaS products the way SaaS compressed the value of on-premises software before it, the unwind may not be contained to a single fund or vintage year.

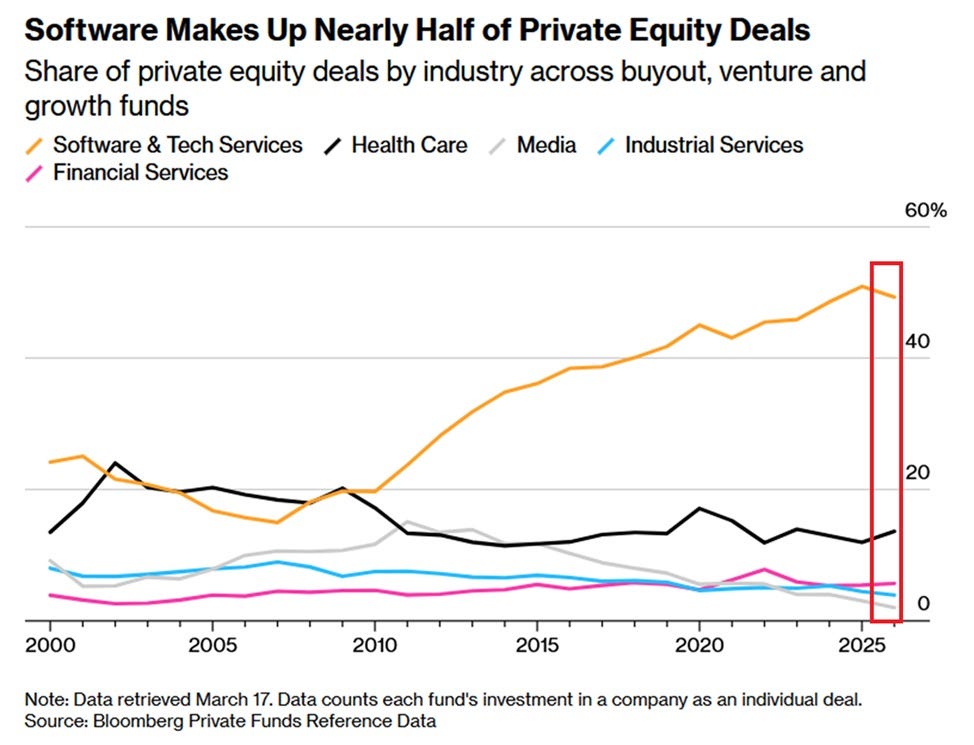

→ Why does it matter? A year ago, the question was whether AI revenue would ever show up. Anthropic answered: $87M to $30B in ARR in roughly 15 months. Salesforce took 22 years to cover the same ground.

→ Why does it matter? IBM tracked every shot at the Masters. The result is mostly blue for bogey. Even at golf’s highest level, bogeys outnumber birdies by a lot. Watching the broadcast, you’d never know.

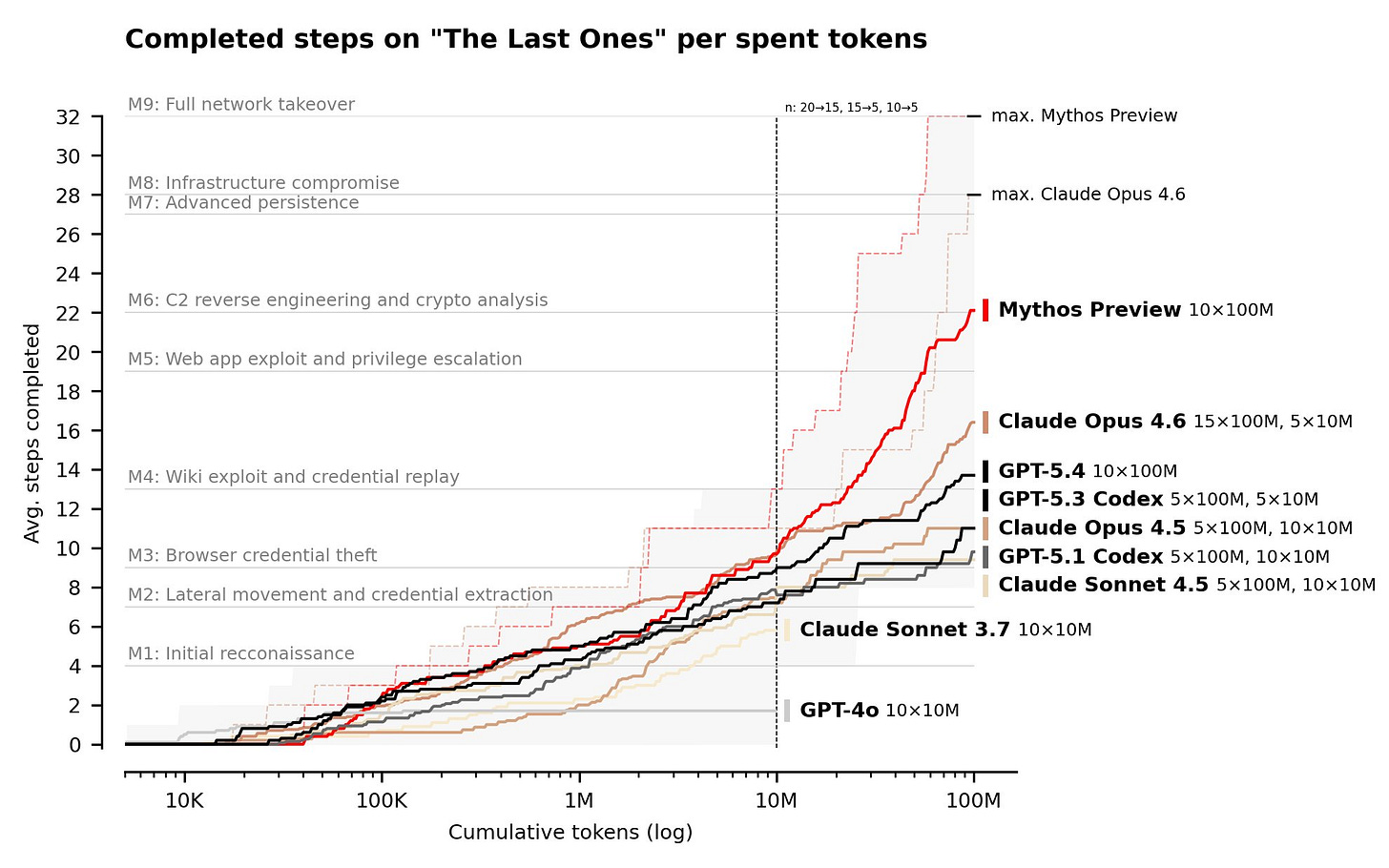

→ Why does it matter? Two years ago, top models stalled at basic reconnaissance. Mythos Preview now reaches the infrastructure compromise threshold with enough tokens. That is capable enough to threaten real, weakly defended systems, and the curve is still climbing.

Tweets that stopped my scroll:

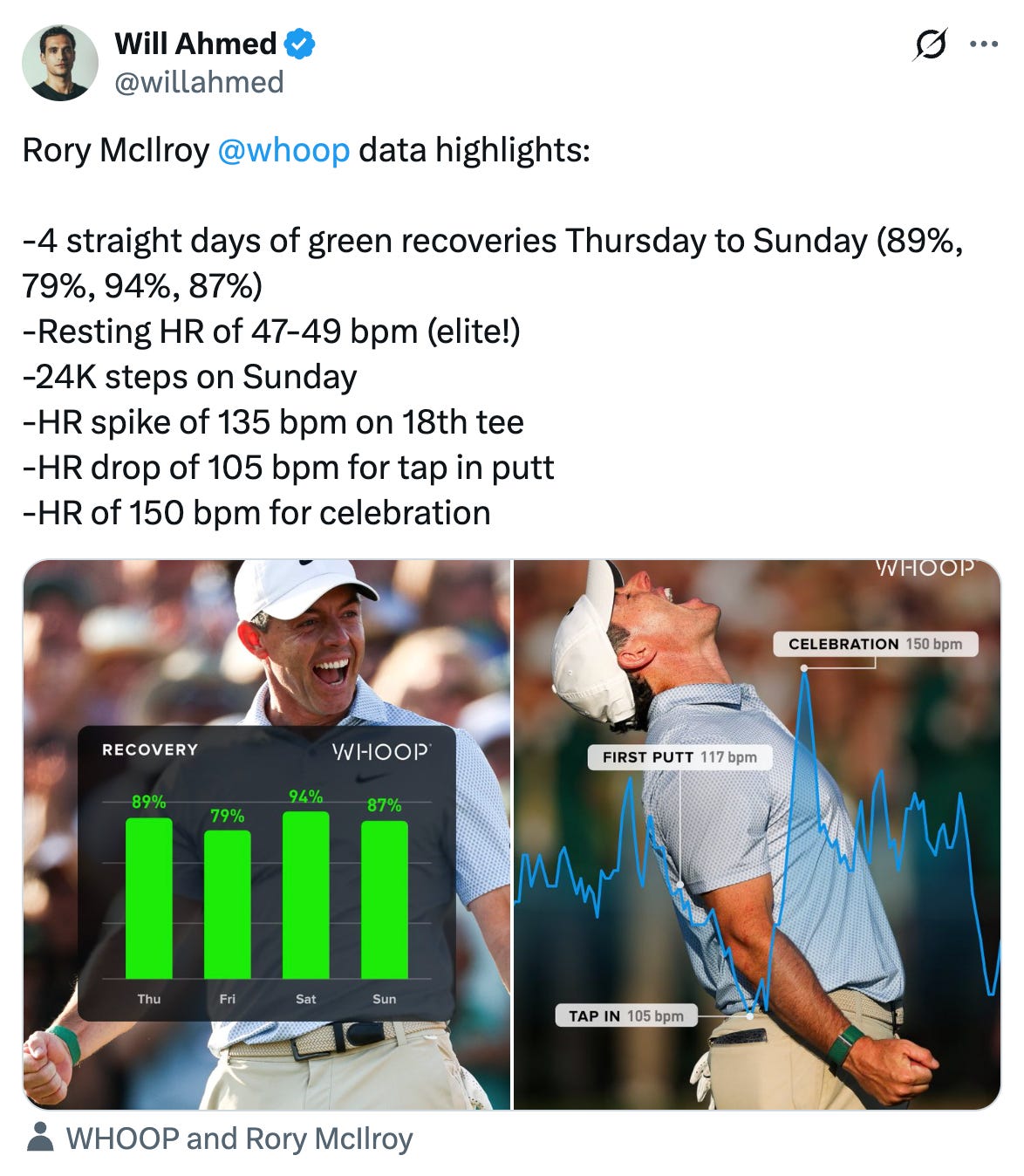

→ Why does it matter? McIlroy’s heart rate hit 150 bpm celebrating, higher than his 135 bpm on the 18th tee. Joy is apparently more taxing than pressure.

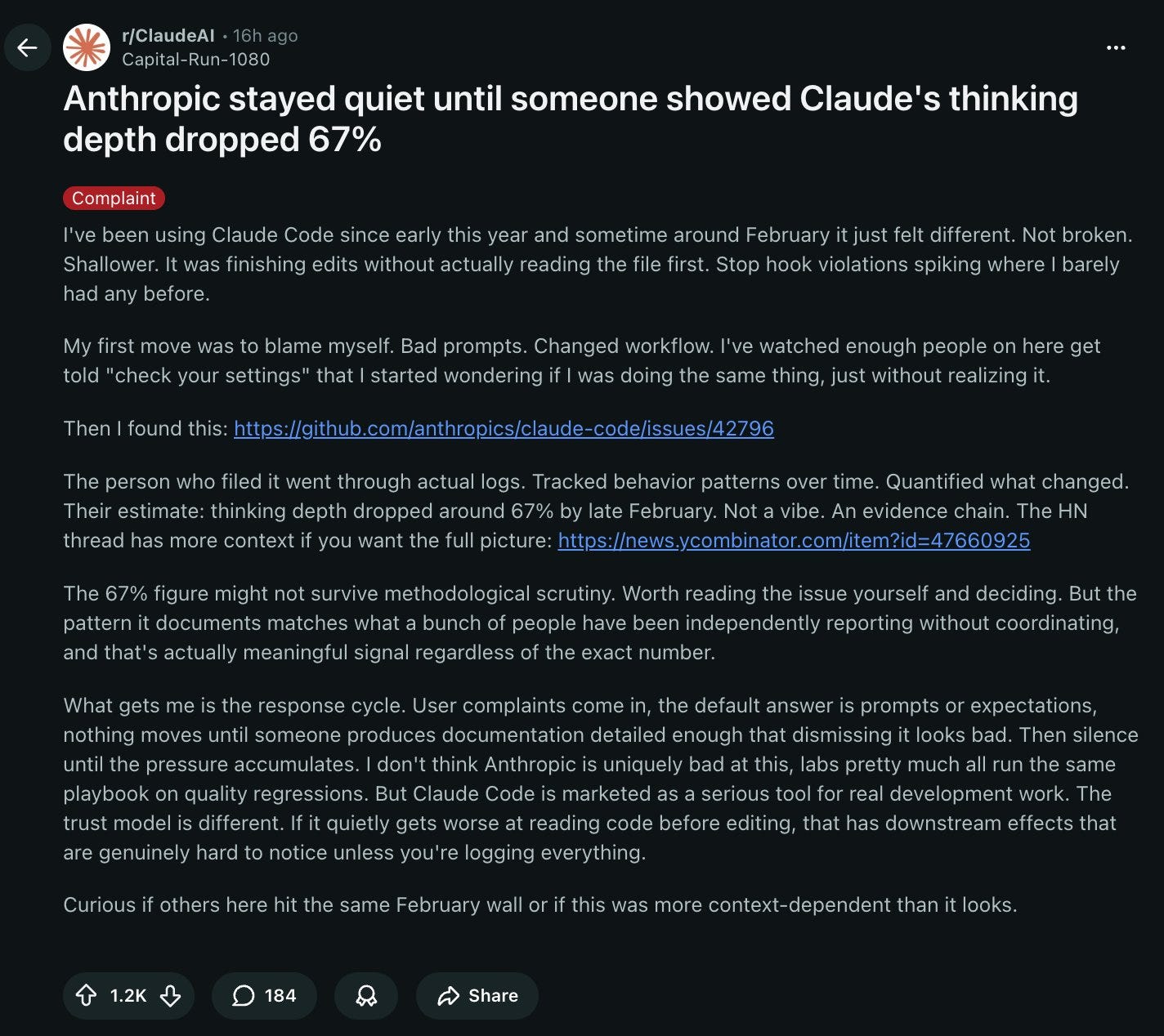

→ Why does it matter? A user logged actual behavior data showing Claude’s reasoning depth dropped 67% in late February. Anthropic stayed quiet until the numbers were too well documented to dismiss. The working theory among many users: Anthropic is throttling Opus to push traffic toward training its next flagship model.

→ Why does it matter? Anthropic posted benchmark numbers for Mythos that are turning heads. This is the first model trained on Blackwells. Buckle up!

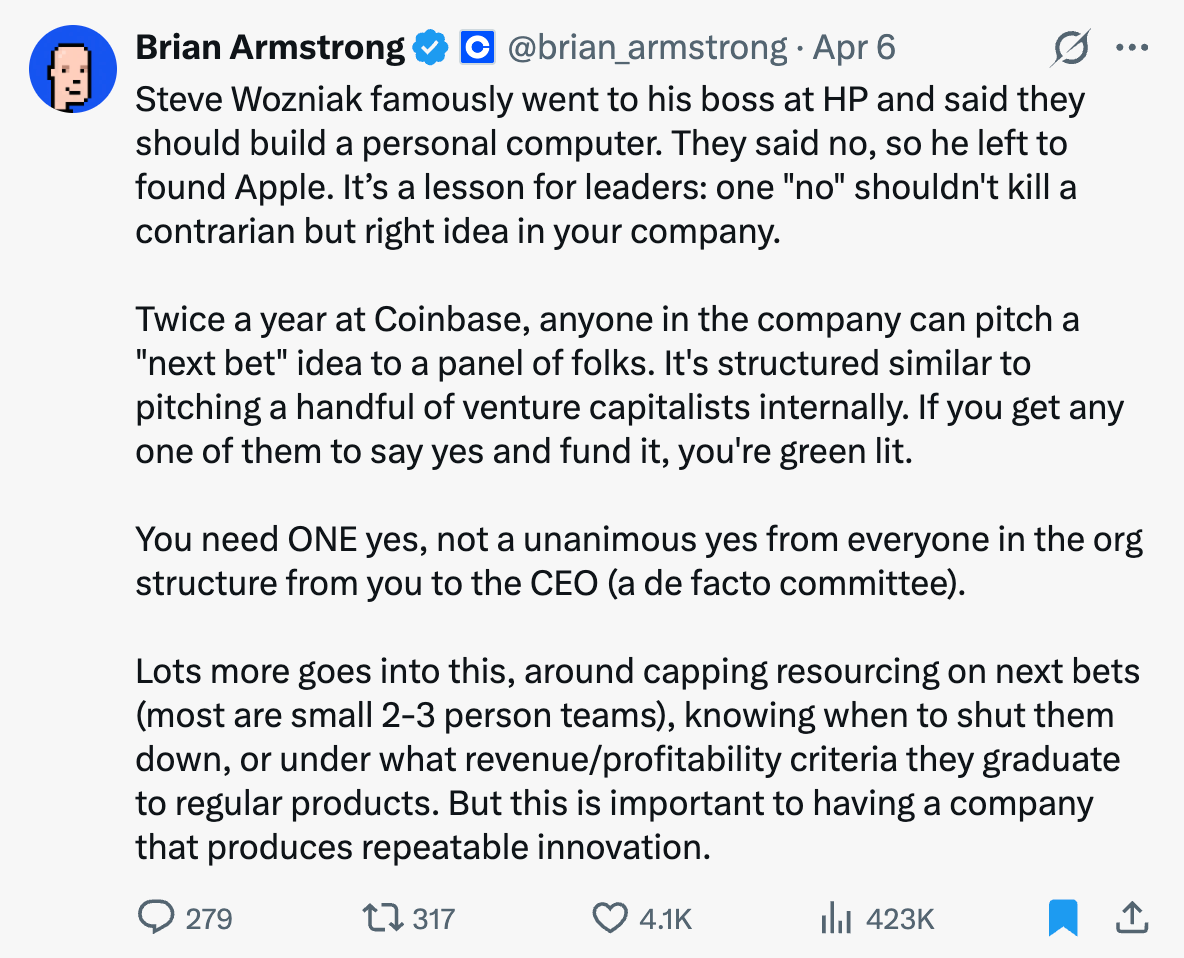

→ Why does it matter? Most big-company ideas die by committee veto. One-yes-wins flips the default: contrarian ideas get a fair shot without needing unanimous buy-in up the chain.

→ Why does it matter? Intercom’s R&D team tripled output in 16 months without tripling headcount. That’s the AI productivity story most engineering leaders are chasing right now.

Worth a watch or listen at 1x:

→ Why does it matter? Jesse Genet is running 11 AI agents in the background of real family life. Not a lab. While homeschooling four young kids. Katherine Boyle and Sarah Wang draw out what that actually looks and feels like: the friction, the workflow decisions, and the mindset required to treat agents like an extension of your parental capacity.

→ Why does it matter? Fair warning: This is a highly technical conversation between Dwarkesh Patel and Jensen Huang. But if you want to nerd out on the economics of AI, this is the one for you. I loved Jensen’s explanation of Nvidia: “We turn electrons into tokens.”

Quotes & eyewash:

“It is not the strongest of the species that survives, nor the most intelligent, but the one most responsive to change.” – often attributed to Charles Darwin

→ Why does it matter? One to live by, given how much change our world is experiencing right now.

“The best way to cherish life is to remind yourself of life’s impermanence. It is to remember that every time you see someone that is one less time you see them. It is to remember that every time you go somewhere that is one less time you visit. By doing this, you naturally slow down. Almost like a reflex, you start to truly live.” - Andrew Anabi

→ Why does it matter? For those of us who don’t live on the coast and aren’t planning to move there, how many times do you think you’ll see the ocean again? Cherish those visits.

The mission:

The Wall Street Journal once used “Read Ambitiously” as a slogan, but I took it as a personal challenge. Our mission is to give you a point of view in a noisy, changing world. To unpack big ideas that sharpen your edge and show why they matter. To fit ambition-sized insight into your busy life and channel the zeitgeist into the stories and signals that fuel your next move. Above all, we aim to give you power, the kind that comes from having the words, insight, and legitimacy to lead with confidence. Together, we read to grow, keep learning, and refine our lens to spot the best opportunities. As Jamie Dimon says, “Great leaders are readers.”

Disclaimer: This content is for informational purposes only and does not constitute financial, investment, or legal advice. Readers should do their own research and consult with a qualified professional before making any decisions.