Reading Ambitiously 5-23-25

You can think faster than you can type, OpenAI buying Jony Ive’s AI device startup for $6.4B, Google I/O & Microsoft Build, JPMorgan’s 1799 founding, AI reading X-rays, Shopify 10 years after its IPO

Enjoy this week’s Reading Ambitiously as a podcast entirely generated by AI.

The big idea: you can think faster than you can type

OpenAI knows it. That’s why they’re spending $6.4 billion to build it with Jony Ive.

A post on r/WallStreetBets caught my eye the other day. It said that if AirPods were a standalone company valued at Palantir’s price-to-sales (P/S) ratio, they’d be worth $4.2 trillion. 🤯

Obviously a joke. But not a bad one.

Because if AirPods are just a $129 interface to Apple’s ecosystem… what happens when AI gets its AirPods?

That’s exactly what OpenAI is chasing with its $6.4 billion acquisition of Jony Ive’s secretive AI device company.

Not a chatbot. Not a new model. A product. A piece of hardware. A bet on the interface.

And if you think $6.4 billion is a lot, remember that OpenAI is valued at $300 billion. This is just over 2% of that, which is double the typical “1% M&A rule of thumb.” But hey… if you’re buying the guy Steve Jobs called “wickedly intelligent,” the one who led design of the iPhone, maybe it’s worth it.
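
If you want to check that math yourself, here is a quick back-of-the-envelope sketch in Python. The dollar figures come straight from the paragraph above; the function name and the constant names are illustrative labels of my own, and the 1% benchmark is just the rule of thumb mentioned here, not an official standard.

```python
def deal_share_of_valuation(deal_value_bn: float, valuation_bn: float) -> float:
    """Return the deal size as a percentage of the acquirer's valuation."""
    return deal_value_bn / valuation_bn * 100


# Figures quoted in this newsletter, in billions of dollars.
DEAL_VALUE_BN = 6.4
VALUATION_BN = 300.0

# The "1% M&A rule of thumb" mentioned above (an assumption, not a hard rule).
RULE_OF_THUMB_PCT = 1.0

share_pct = deal_share_of_valuation(DEAL_VALUE_BN, VALUATION_BN)
multiple_of_rule = share_pct / RULE_OF_THUMB_PCT

print(f"Deal is {share_pct:.2f}% of valuation")
print(f"That is {multiple_of_rule:.1f}x the rule of thumb")
```

Swap in any other deal and valuation to see how it stacks up against the same benchmark.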

Especially if you believe what I do: the next dominant AI company won’t be the one with the smartest model.

It’ll be the one that builds the experience we want to come back to.

OpenAI knows the models are commoditizing

We’ve said it before in “Reading Ambitiously: the application layer”:

“Foundational models are converging. The moat moves up the stack—into UX, workflow, and distribution.”

And again in “do more with less AI”:

“The companies that break out won’t just build better models. They’ll build better products—ones people love to use, talk about, and return to.”

OpenAI knows this too.

Model performance is no longer the differentiator it used to be. Everyone is building fast. Open source is catching up. Claude and Gemini are gaining share. Google just had a huge week at I/O.

The real competition isn’t simply another SOTA model. It’s Apple, Meta, Google—companies with distribution.

That’s why this acquisition makes sense. OpenAI isn’t just fighting a model war. They’re fighting to own the front end of how humans interact with artificial intelligence.

Voice, visuals, ambient assistance—that’s where the margin will live. That’s a big moat.

Owning the interface isn’t optional anymore. It’s existential.

Why this acquisition matters

The iMac. The iPod. The iPhone. The iPad. Each breakthrough product that powered Apple’s ascent to a $3 trillion company had Ive’s fingerprints on it.

Now imagine that level of product thinking aimed not at music or mobile—but at intelligence.

This isn’t about building a gadget. This is OpenAI taking a full-stack swing at experience, emotion, and distribution.

As Jony Ive put it:

“I have a growing sense that everything I have learned over the last 30 years has led me to this moment. While I am both anxious and excited about the responsibility of the substantial work ahead, I am so grateful for the opportunity to be part of such an important collaboration.”

OpenAI also took money from Masayoshi Son. And let’s be honest: you don’t bring in Masa for incremental progress. You bring him in to swing.

Voice has been here—but it wasn’t good

Voice has always felt like it should be the future.

In the video announcing the deal, Sam Altman and Jony Ive talked about the first product they’re working on: an ambient, voice-first AI device. Something that feels more like a companion than a command line.

But if you’ve used Siri or Alexa, you know how far we are from that vision. Voice interfaces aren’t new. We’ve been trying to talk to computers for decades.

Remember Nuance Dragon NaturallySpeaking? You could buy it in a box at CompUSA, load the CDs, and spend an hour “training” it to understand your voice. Then spend the next hour fixing its mistakes. Anyone else remember that? No? Just me?

Siri came next. Then talking to your car. Then Alexa. Then Google Assistant. They worked, but only up to a point. Set a timer. Play a song. Check the weather. Not exactly game-changing for knowledge work.

Voice remained stuck in the novelty phase. Too clunky. It was a tool for tasks, not for thinking.

The new generation of voice AI doesn’t just hear you. It understands you. It holds context. It can reason, ask follow-ups, and get smarter over time.

For the first time, voice isn’t a command line. It’s a partner in the conversation.

Every tech wave had its interface moment

Technology doesn’t go mainstream because it’s powerful. It goes mainstream because it’s usable.

In the early 1990s, enterprise software moved from green screens to graphical interfaces. Windows made it possible. A mouse replaced function keys. Point-and-click replaced command-line prompts. That shift opened the door for companies like PeopleSoft and SAP, where founders recognized that usability—not just functionality—was an unlock.

The internet followed the same arc. Mosaic and Netscape didn’t invent the web—they made it usable. Bookmarks, images, and clickable links turned it into something people could explore.

Then came the iPhone. It wasn’t the first smartphone, but it was the first to ditch the keyboard. And people hated it. “Where’s my BlackBerry?” was a genuine crisis. They missed typing with their thumbs. But once users experienced multi-touch, apps, and full-screen browsing, there was no going back. People tried…

Interfaces create inflection points.

Carlota Perez describes this as the transition from installation to deployment—from infrastructure to impact. Geoffrey Moore calls it crossing the chasm. In both frameworks, the tipping point is the same: user experience. It’s the interface that brings the next wave of users in.

And now, it’s OpenAI taking its shot—pairing design and intelligence to see if voice can do for AI what multi-touch did for mobile.

Every generation has its unlock: GUI → browser → multi-touch → voice?

Why voice matters now

We’ve had voice interfaces before. But they weren’t good enough for knowledge work.

They were slow. They forgot what you said two sentences ago. And they sounded like robots pretending to be helpful.

That’s what the team at Sesame calls the uncanny valley of voice. For years, voice assistants were close—but not quite natural enough. Close—but not fast enough. Close—but not worth switching to.

Modern voice AI doesn’t just transcribe—it remembers, responds, and reasons. It holds context. It speaks back. And it does it in real time.

Voice removes friction. And friction is the enemy of flow.

It’s not perfect yet. But it’s finally crossed the line from frustrating to useful. Once people experience that shift—once voice becomes the faster, easier way to work—they won’t go back.

Try this: use your voice to work with AI this week

You don’t need special hardware. You don’t need a new app. If you have a phone or a laptop, you’re ready.

Here are a few simple ways to get started:

→ Converse with longform content: upload a whitepaper, a contract, or even your insurance policy to ChatGPT and talk it through instead of reading it. Ask questions. Pause and dig deeper.

That’s exactly what I did last week after a hailstorm. I uploaded my 60-page homeowners insurance policy—something I’d never normally try to read. Then I had a full-blown conversation with it: What are my options? What’s my deductible? What scenarios should I consider?

I used voice the entire time. I even dictated a short, clear note to my insurance agent—with citations from the policy—to prompt a conversation. The whole thing took 15 minutes. And it worked.

→ Dictate your next memo: open your phone’s voice recorder, speak your thoughts, and run the transcript through ChatGPT (if you own a Mac, try out F5 for native dictation). You’ll move much faster.

→ Make it your assistant: use ChatGPT’s voice mode to plan a trip, summarize a meeting, draft a follow-up email, or break down a complex topic. You’ll notice something right away: speaking feels easier than typing prompts.

The goal isn’t to replace typing. It’s to notice when voice becomes the faster interface—and build the habit.

Try one thing this week. You’ll be surprised how quickly it clicks (pun intended).

The interface is the unlock

The history of technology is the history of better interfaces. Each time we found a faster, more natural way to interact with machines, everything changed.

Voice might be next.

We don’t adopt technologies. We adopt experiences. And when speaking becomes easier than typing, when talking feels more productive than clicking, when AI becomes something you can work with out loud—users won’t just try it. They’ll prefer it.

We’re not there yet. But it’s close.

GUI → browser → multi-touch → voice?

Sam and Jony didn’t say much in their video. But what they did say matters. They’re not building a chatbot. They’re building a tool you live with. Talk to. Rely on.

If they get it right, voice won’t just be the next interface. It’ll be the one that lasts.

You can think faster than you can type. And now, for the first time, your tools can keep up.

Seriously, what a time to be alive.

Best of the rest:

🧑‍💼 Workday’s AI Future: Interview with Gerrit Kazmaier - Workday’s EVP of Product and Technology, Gerrit Kazmaier, joins The Verge’s Decoder podcast to discuss how AI is reshaping enterprise software and why Workday wants to be the “system of intelligence” for companies. - The Verge

🧬 Regeneron Buys 23andMe Out of Bankruptcy for $256M - Regeneron is acquiring 23andMe for just $256 million—less than 5% of its peak valuation—to tap its 15 million-user DNA database for drug discovery. A stunning fall for a former VC darling. - The Wall Street Journal

🪙 Circle Preps for $5B IPO—While Quietly Exploring Sale to Coinbase or Ripple - As crypto markets reheat, stablecoin giant Circle is eyeing a $5 billion IPO—but is also in talks with Coinbase and Ripple, a move that could reshape the power dynamics of digital finance. - Fortune

Charts that caught my eye:

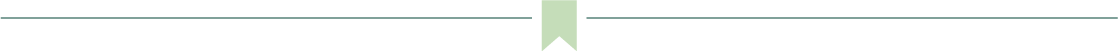

→ Why does it matter? Stack Overflow’s sharp traffic decline—down more than 50% since 2022—is a striking signal of how fast generative AI is reshaping knowledge work. Once the go-to destination for developer Q&A, it’s now being cannibalized by AI assistants like ChatGPT and Cursor, which offer faster, more contextual answers. This isn’t just a shift in user behavior. It’s a preview of what happens when the internet’s knowledge base, built by humans, becomes the training ground for models that then replace it.

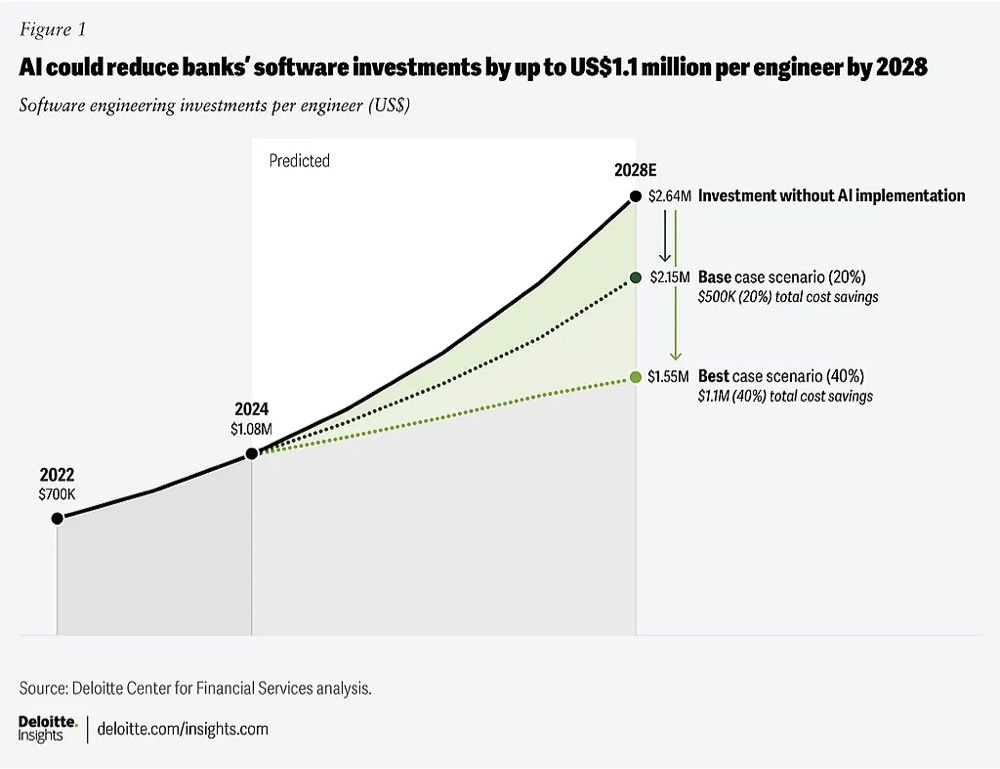

→ Why does it matter? AI is emerging as a powerful deflationary force in software engineering, and the stakes for financial services are massive. According to Deloitte, banks could save up to $1.1 million per engineer by 2028 through AI adoption, cutting software investment costs by as much as 40%. That’s not just incremental efficiency. In a sector where margins are tight and talent is expensive, the institutions that embed AI deeply into their engineering workflows won’t just move faster; they’ll outspend and outlast the ones that don’t.

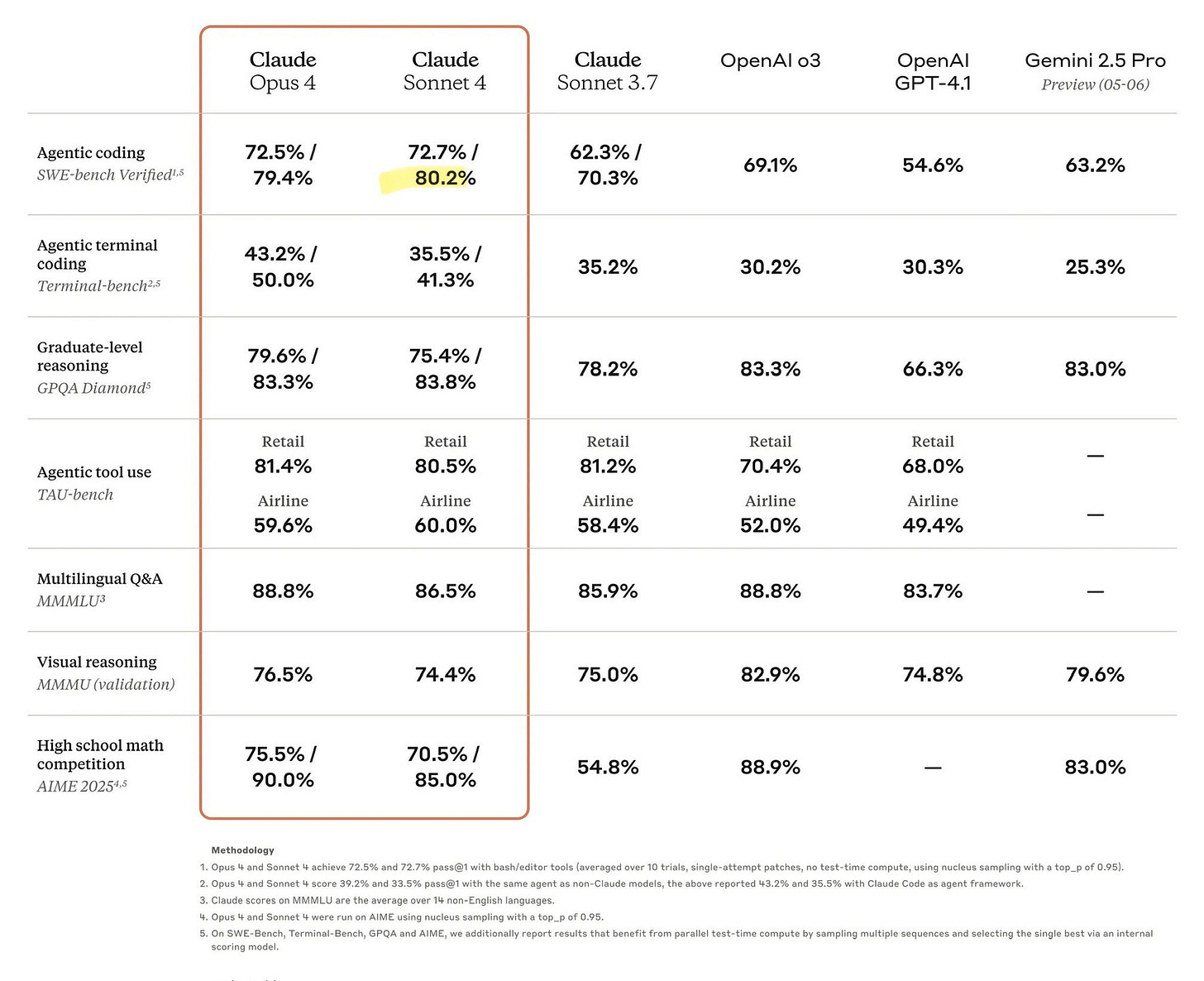

→ Why does it matter? Claude Sonnet 4 launched yesterday and set the state of the art in agentic coding!

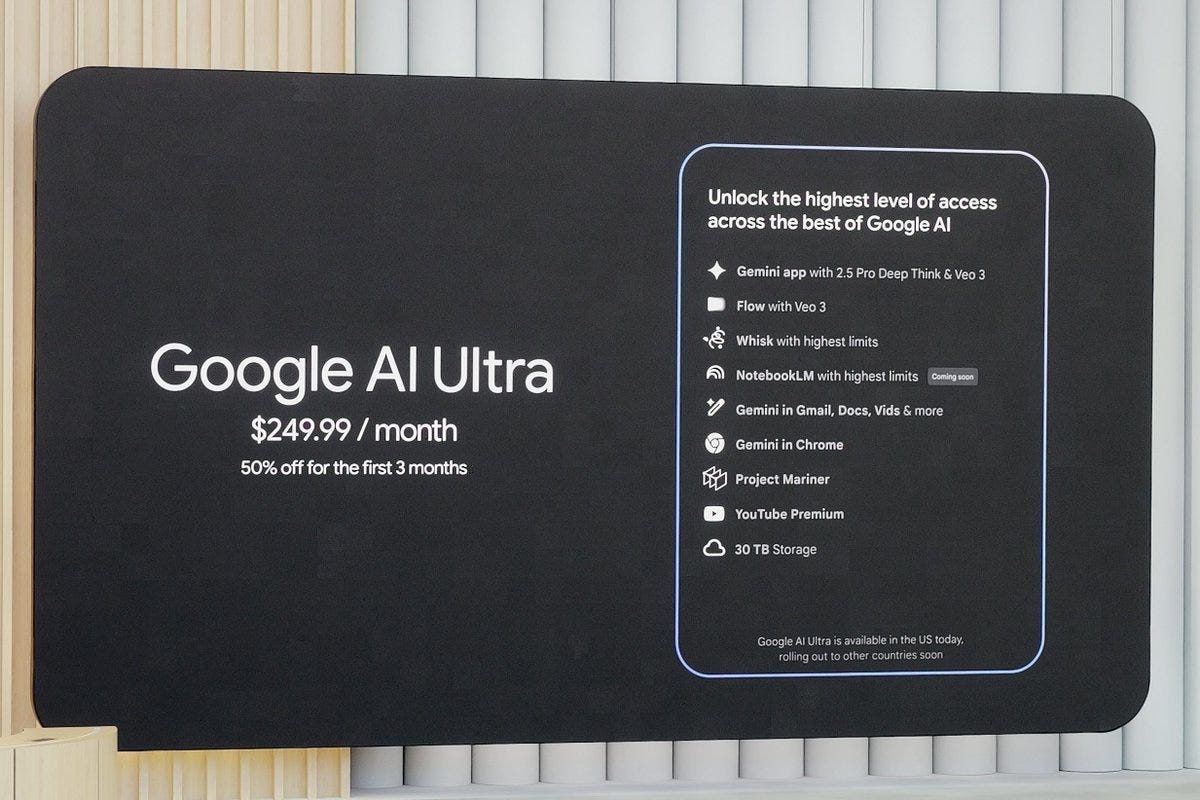

→ Why does it matter? Google’s new AI Ultra package is $3,000 per year! Google I/O was jam-packed with new AI product launches and demonstrations. The one that seems to have captivated everyone’s attention: real-time translation on Google Meet! Check it out.

Tweets that stopped my scroll:

→ Why does it matter? In 1799, Aaron Burr couldn’t get a banking charter—so he created a fake water company during a yellow fever outbreak and used the funds to start a bank. The fine print gave him legal cover. Alexander Hamilton, who ran the country’s financial system, was furious. Their rivalry got so heated it ended in a literal pistol duel. Hamilton died. Burr’s bank survived. Two centuries and 1,200 mergers later, it’s JPMorgan Chase. The bank still owns the dueling pistols. 🤯

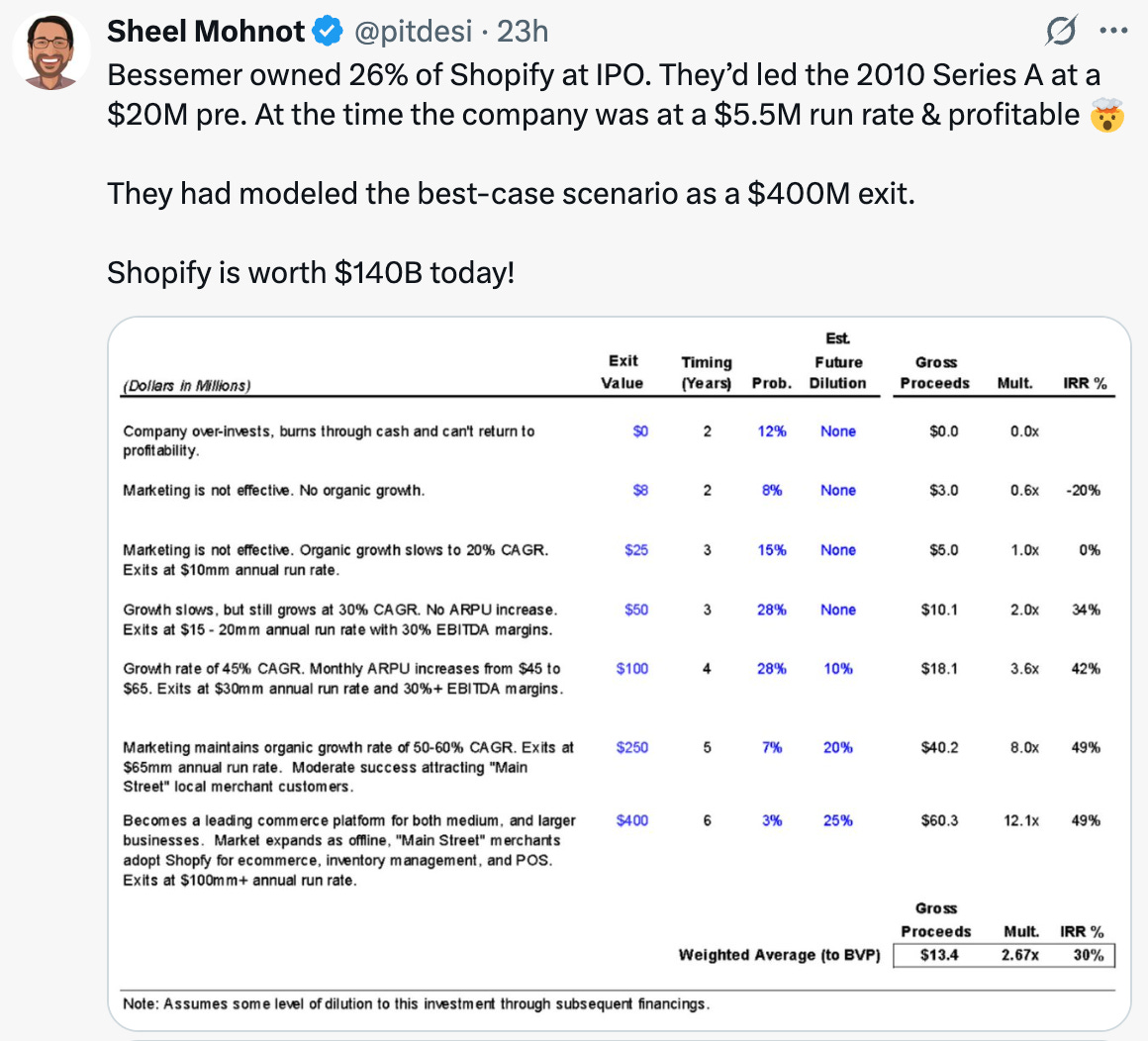

→ Why does it matter? Bessemer’s best-case scenario for Shopify was $400M! 🤯

→ Why does it matter? “I developed the skill to read this over 20 years. AI does it in a second.”

Worth a watch or listen at 1x:

→ Why does it matter? Microsoft just declared that the next era of the internet will be built for agents, not just people. The shift to an "agentic web" means your software won’t just serve you—it’ll act for you. This is Microsoft betting big on AI-native browsing, identity-aware workflows, and multi-agent collaboration across apps and devices.

→ Why does it matter? Carl Eschenbach is one of the few people who’ve built legendary companies from the inside and backed them from the outside. At VMware, he scaled revenue from $30M to $7B. At Sequoia, he led investments in Zoom and Snowflake. Now, as CEO of Workday, he’s back in the operator seat. Carl’s rare combination of go-to-market depth, boardroom savvy, and cultural clarity makes this podcast a masterclass in what great enterprise leadership looks like.

Quotes & eyewash:

A lesson I wish I learned earlier: Real happiness is found in the anticipation. It’s the quest. It’s the hunt. It’s the process. It's the journey. It's the moment right before you achieve it. Happiness is not in the having, but in the becoming.

- Sahil Bloom

The mission:

The Wall Street Journal once used ‘Read Ambitiously’ as a slogan, but it became a challenge I took to heart. If that old slogan still speaks to you, this weekly curated newsletter is for you. Every week, I will summarize the most important and impactful headlines across technology, finance, AI and enterprise SaaS. Together, we can read with an intent to grow, always be learning, and refine our lens to spot the best opportunities. As Jamie Dimon says, “Great leaders are readers.”
