Reading Ambitiously 5-9-25

Shift Left, RSA, JPMorgan CISO’s open letter, Agent costs, pre-money startup valuations, Palantir 93.4x ARR, Buffett buying Apple in 2016, Ken Griffin, Marc Benioff, all new cells every 7 years

Enjoy this week’s Reading Ambitiously as a podcast entirely generated by AI.

The big idea: shift left or get left behind — securing the AI supply chain

This week’s Big Idea is co-authored with Andrew Rubin, founder and CEO of Illumio. Illumio invented microsegmentation and helped shape what we now know as Zero Trust architecture. Andrew’s work has influenced some of the largest organizations on the planet—and his operating philosophy is grounded in one powerful truth: we now live in a post-breach world.

We’re writing together just days after RSA, the world’s largest cybersecurity conference, where one idea dominated: AI is moving faster than security can keep up. And just before RSA, JPMorgan Chase’s CISO, Patrick Opet, released a rare open letter calling on software vendors to stop shipping AI features without secure-by-default principles.

It wasn’t a technical memo. It was a challenge to leadership.

The risk isn’t theoretical anymore. And the clock isn’t hypothetical. It’s already ticking.

The post-breach world

Perfect prevention is a fantasy. Today’s reality is different: you will be breached. If not today, then soon.

That’s not alarmism—it’s just how modern infrastructure works. Your enterprise is connected to hundreds of external systems, internal services, and fast-moving teams. Something will go wrong.

Operating in a post-breach world means designing for it. It means reducing the blast radius. It means building like you already have an infection somewhere—and your job is to stop it from spreading.

This mindset shift—from fortress thinking to containment-first design—is foundational to how we need to build in the AI era.

AI and SaaS: twin forces, compounding risk

Pat Opet’s letter wasn’t just about AI. It was also about SaaS.

Modern companies run on a constellation of third-party tools. Every SaaS product you use is a security risk you didn’t build—but now have to own. And every AI tool you build introduces entirely new pathways for things to go sideways.

Here’s the punchline: you’re inheriting risk from systems you can’t see, can’t inspect, and often don’t even know you’ve connected to.

We’re not just facing third-party risk. We’re now exposed to fourth-party risk—the vendors your vendors rely on, the APIs your plug-ins call, the models those APIs chain together. That’s the modern attack surface.

And it’s not on a traditional SOC 2 checklist.

AI is moving faster than any tool before it

The difference between AI and SaaS is speed.

AI systems are being built, adopted, and deployed in weeks, not years. Security, by nature, introduces friction: it slows things down, asks hard questions, and insists on boundaries. That puts speed and security fundamentally at odds. The more we accelerate, the more risk we introduce.

You can’t “go fast and break things” when the things you’re breaking are your controls.

If you don’t hit pause—at least briefly—to insert architecture, audit, and acceptable use, you’re not moving fast. You’re just losing visibility.

A responsible AI blueprint

If you believe your AI stack will be breached—or fail in unexpected ways—then governance starts at design time. Here’s how to build for resilience, not just features:

Treat model providers like subprocessors - trusted parties who must protect your data and whose accountability you measure as your own. These aren’t casual vendors. If you’re sending data to OpenAI, Anthropic, or others, they should meet the same bar as AWS.

Ask: Are our AI vendors held to the same standard as our infrastructure partners?

Publish an AI acceptable use policy. Define what data goes in, what comes out, where it’s stored, and how long it’s retained. This is your perimeter.

Map your AI supply chain. Most companies can’t name the plug-ins, APIs, and agents connected to their models. That’s fourth-party risk hiding in plain sight.

Ask: Can we name every external service our AI systems touch—directly or indirectly?

Can our vendors provide a Software Bill of Materials (SBOM)?
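For teams that want to make the mapping exercise concrete, here is a minimal sketch in Python. It assumes you already keep some inventory of which service calls which; the service names and the `map_supply_chain` helper are hypothetical, invented purely for illustration. It walks the inventory breadth-first and separates the services you touch directly from the ones you only reach through a vendor, which is the fourth-party layer.

```python
from collections import deque

# Hypothetical inventory: each service maps to the external services it calls.
# Every name here is made up for illustration.
DEPENDENCIES = {
    "support-chatbot": ["model-provider", "crm-plugin"],
    "model-provider": ["cloud-host"],
    "crm-plugin": ["email-api", "model-provider"],
    "email-api": [],
    "cloud-host": [],
}


def map_supply_chain(root, dependencies):
    """Return {service: hops from root} for every service reachable from root."""
    depth = {root: 0}
    queue = deque([root])
    while queue:
        current = queue.popleft()
        for dep in dependencies.get(current, []):
            if dep not in depth:  # first visit is the shortest path in a BFS
                depth[dep] = depth[current] + 1
                queue.append(dep)
    del depth[root]  # the root is our own system, not a dependency
    return depth


chain = map_supply_chain("support-chatbot", DEPENDENCIES)
direct = sorted(s for s, d in chain.items() if d == 1)     # services we call ourselves
indirect = sorted(s for s, d in chain.items() if d >= 2)   # reached only via a vendor

print("Direct:", direct)
print("Indirect (fourth-party and beyond):", indirect)
```

The hard part in practice is not the traversal but filling in the inventory honestly, which is where a vendor-supplied SBOM helps.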

Run AI-specific failure drills. Simulate what happens when things go sideways—prompt leakage, model hallucination, broken plug-ins, latency failures.

Ask: Have we ever practiced a scenario where an AI system breaks or gets exploited?

You don’t need a 100-point checklist to get started.

You need to treat AI like infrastructure—not just a feature—and build for the moments when it doesn’t behave.

The bottom line

If you’re feeling the tension between growth goals and security guardrails, you’re not alone. Every executive team is navigating that tradeoff right now.

Speed is the most important force inside an organization. But speed without clarity—without boundaries—is risk. Security isn’t about slowing you down. It’s what allows you to move fast with confidence, not just momentum.

If we agree we’re living in a post-breach world, then we need to design for that reality. That means holding AI to the same standards as infrastructure. It means mapping dependencies before something breaks. It means acquiring the skills and talent required to understand how these systems actually work—not just how they’re marketed. And it means recognizing that incentives—not intentions—shape outcomes.

We’re not powerless. We’re in control of what we build—and how we build it.

The winners won’t just ship fast. They’ll lead with clarity—and know exactly what they’re shipping, and what rides with it. That’s what separates ambition from irresponsible risk.

Your job is to do both: move fast, and know exactly what you’re accelerating. The stakes have never been higher. But the opportunity to lead, earn trust, and win has never been greater.

Best of the rest:

📊 US Financial Leaders See Private Assets Coming to More Investor Portfolios - "I think that traditional asset managers will be our largest clients in the future," said Marc Rowan, co-founder and CEO of Apollo Global Management, during a conference panel at the Milken Institute. "The new form of active management, I think, is not going to be the buying and selling of stocks. It's going to be the addition of private assets" to portfolios that until now have been made up of publicly traded securities. - Reuters

🏛️ OpenAI Says Nonprofit Will Retain Control of Company, Bowing to Outside Pressure - Following mounting scrutiny over governance and influence, OpenAI reaffirms that its nonprofit board will maintain control of the company. - CNBC

🌬️ OpenAI Agrees to Buy Windsurf for About $3 Billion - OpenAI is acquiring software agent startup Windsurf in a deal reportedly worth $3 billion—roughly 1% of its most recent $300 billion valuation. - Reuters

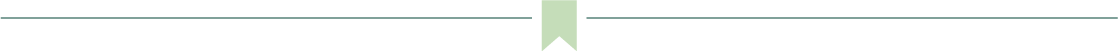

Charts that caught my eye:

→ Why does it matter? Venture capital is getting more efficient—and more aggressive. Investors are crowding into the earliest, riskiest stages, pushing prices to all-time highs. The power-law is compressing timelines: break out fast or get left behind. For founders, the bar is higher. For investors, the margin for error is thinner.

The Agentic Margin: What It Costs vs. What You Earn from AI Assistants (paid.ai)

→ Why does it matter? Today, giving your agents voice, vision, and advanced reasoning means higher costs and thinner margins. But don’t over-index on short-term unit economics. Like every major platform shift, AI costs are on a curve—falling fast. The real game is understanding inputs now while building for a margin-rich future.

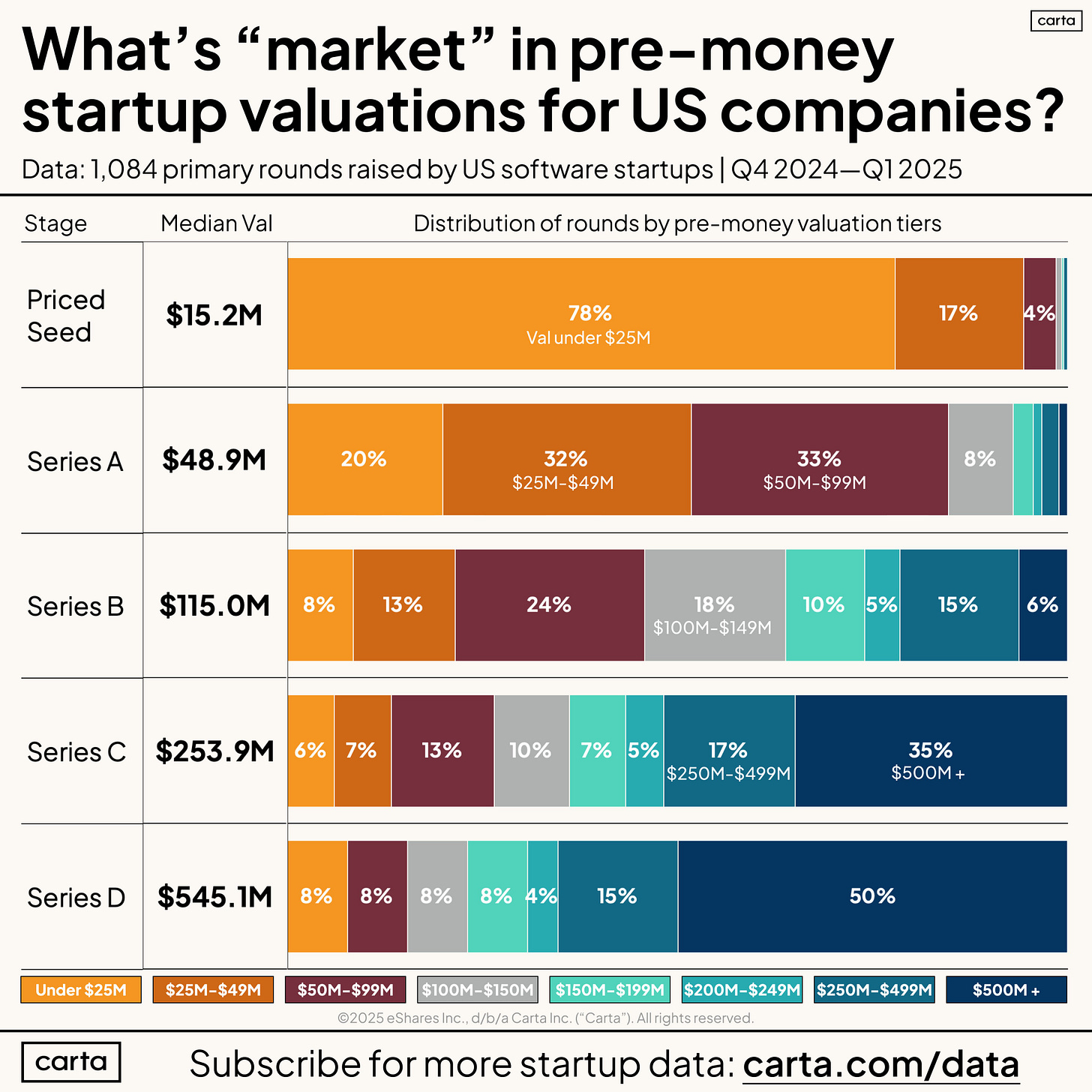

→ Why does it matter? H/t to Alex Clayton and the team over at Meritech… Palantir ($PLTR) is currently the most valuable public software co at ~$315B market cap, and trades at 93.4x run-rate revenue / ARR. The next nearest company is CrowdStrike ($CRWD) at 25x.

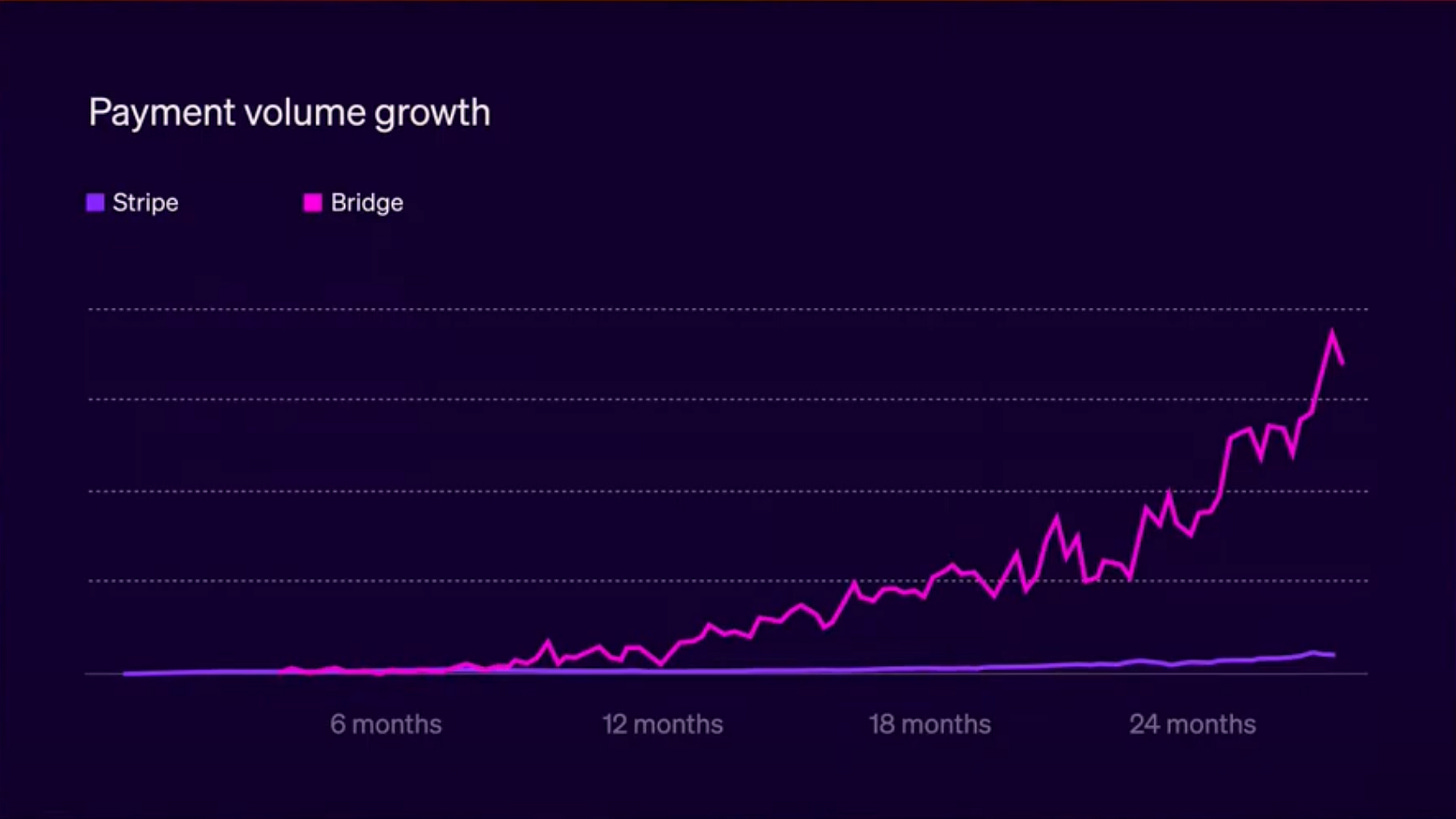

→ Why does it matter? Stripe's Sessions conference just dropped some eye-opening stats on stablecoins, and the Collison brothers shared this chart showing the impressive payment volume on Bridge, Stripe's stablecoin payments network. Back in April, we talked about stablecoins and Circle's IPO, and this chart really brings that to life. If you're curious about how Stripe is shaping the future of internet economics, the keynote from Patrick and John Collison, both master storytellers, is worth a watch!

Tweets that stopped my scroll:

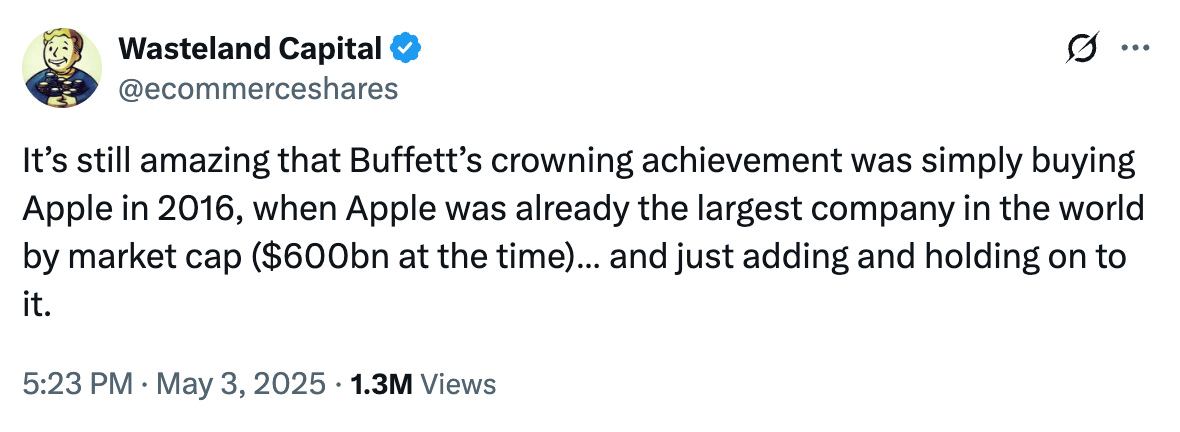

→ Why does it matter? "My wealth has come from a combination of living in America, some lucky genes, and compound interest." Since 1964, Berkshire Hathaway has returned over 5,500,000%. A $10,000 investment in 1964 would be worth $550 million today.
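A quick sanity check on that arithmetic, as a rough sketch. The 60-year holding period is an assumption (1964 to roughly today), and the annualized figure is only an approximation derived from the numbers quoted above, not an official Berkshire statistic.

```python
initial_investment = 10_000      # dollars invested in 1964
total_return_pct = 5_500_000     # cumulative return quoted above, in percent

# A return of X% means the money is multiplied by (1 + X/100).
multiple = 1 + total_return_pct / 100
final_value = initial_investment * multiple

# Assumption: roughly 60 years of compounding.
years = 60
annualized = multiple ** (1 / years) - 1

print(f"Growth multiple: {multiple:,.0f}x")
print(f"Final value: ${final_value:,.0f}")
print(f"Implied annualized return: {annualized:.1%}")
```

The takeaway is the compounding itself: an annual rate of around 20%, sustained for six decades, is what turns $10,000 into roughly $550 million.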

H/t to the team over at MarketSentiment for doing the math on one of Berkshire’s most important investment decisions: buying Apple in 2016, when it was already a company at scale!

Here’s how Berkshire’s returns would have looked with and without Apple from 2016 to 2023 (2024 is excluded because Berkshire started selling Apple at the beginning of last year):

With Apple — Berkshire: 174% | S&P 500: 168%

Without Apple — Berkshire: 142% | S&P 500: 168%

Here’s another stat: Berkshire would have significantly lagged the market over the last 20 years without Apple (2004 to 2023)

With Apple — Berkshire: 542% | S&P 500: 537%

Without Apple — Berkshire: 442% | S&P 500: 537%

For one of the world’s largest corporations, which owns 70+ companies and holds 50+ stock positions, the difference between outperforming and underperforming the market came down to a single stock pick made almost 10 years ago.

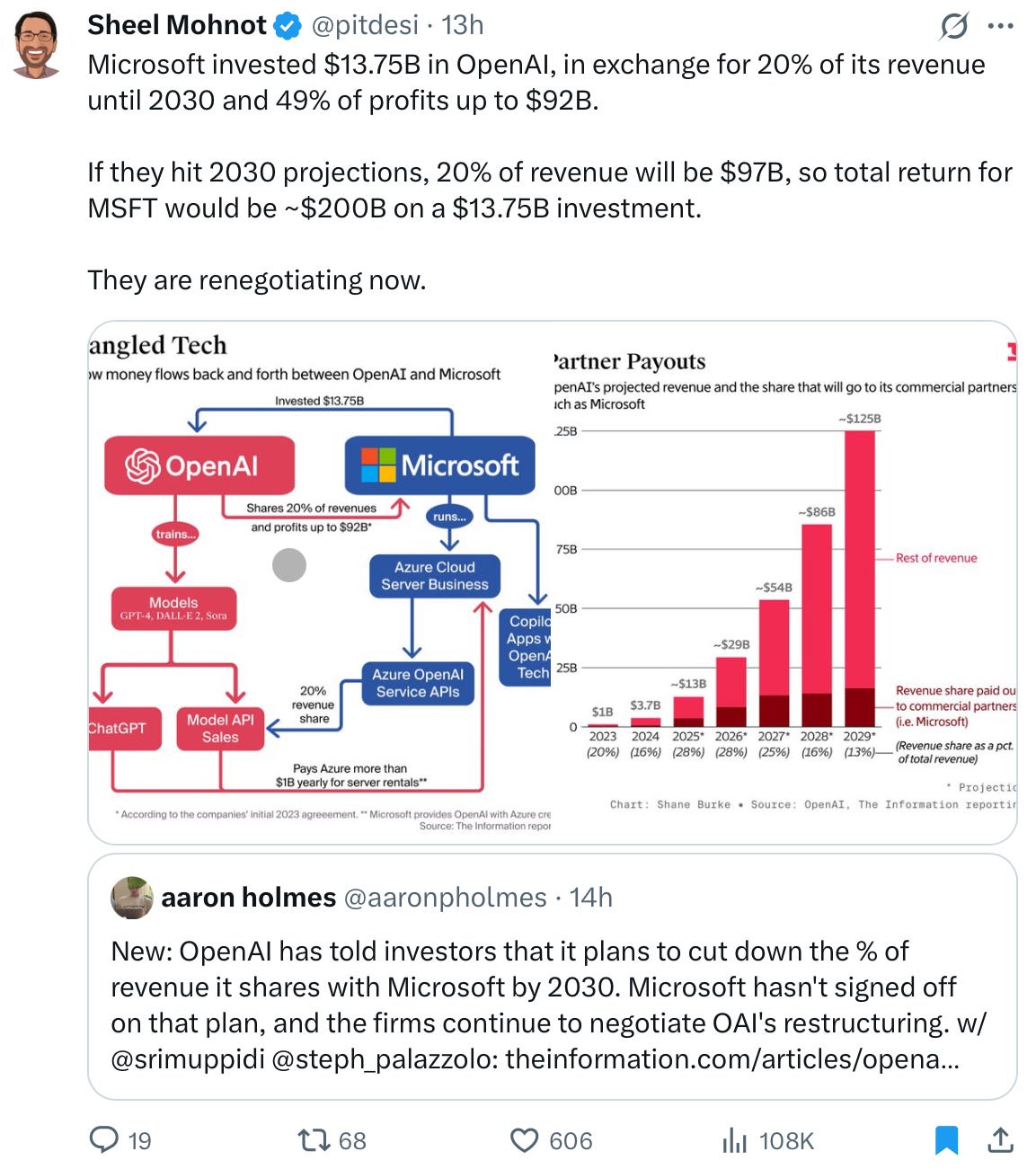

→ Why does it matter? The Microsoft–OpenAI relationship is, shall we say, complex. The press plays it up—but look at the deal structure and it’s hard to see how it wouldn’t be. I’d genuinely love to talk to the person at Microsoft who architected it… it’s creative!

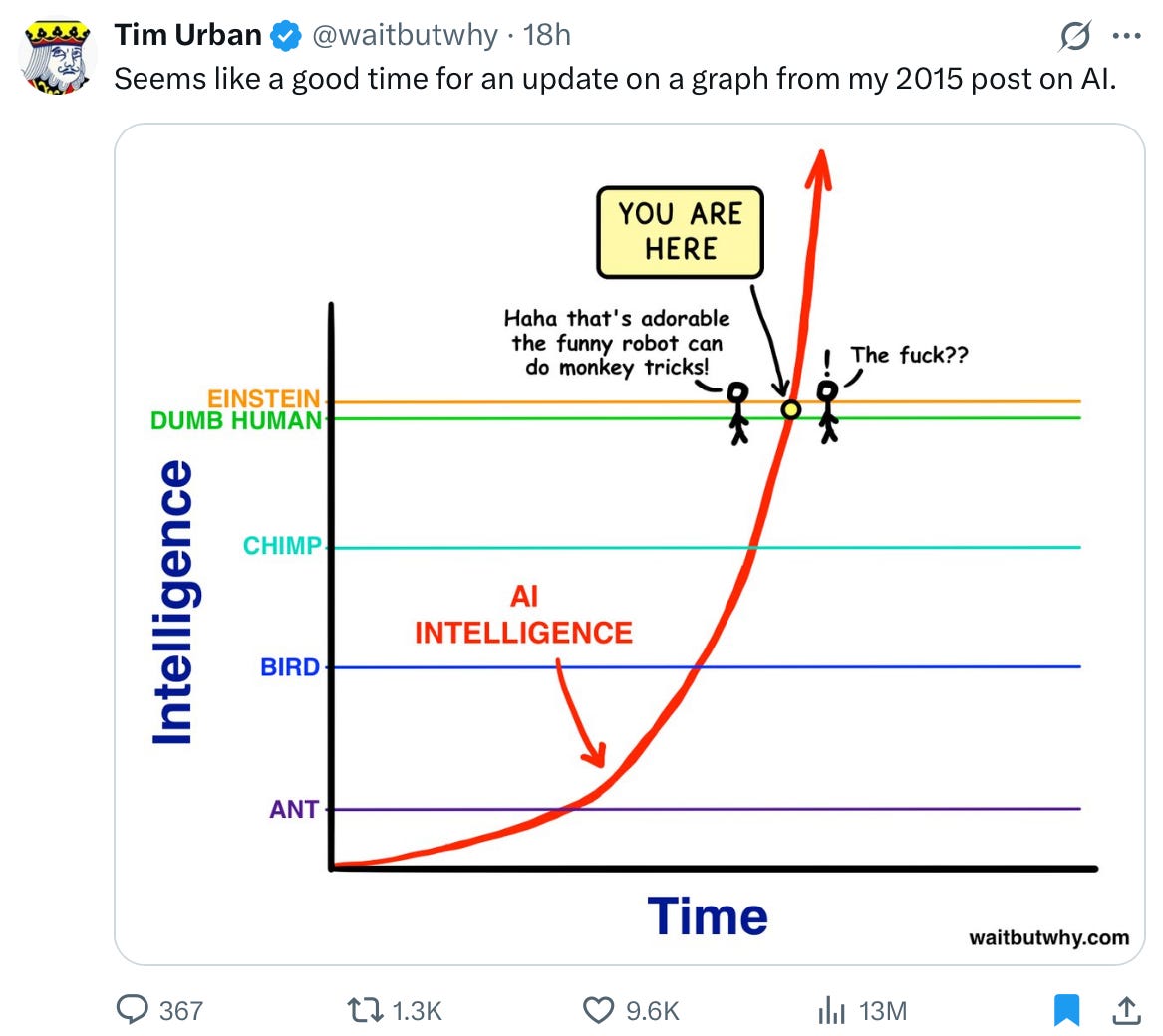

→ Why does it matter? Tim Urban’s most famous post is probably The Tail End, but this one on AI hits differently right now. I love how simply it captures where we are—and how suddenly the mood is shifting. From cocktail tricks to existential questions… all in the blink of a curve.

Worth a watch or listen at 1x:

→ Why does it matter? “If we are all going to eat, somebody has got to sell.” This ~45-minute interview with Ken Griffin (Founder & CEO, Citadel) is a must-listen.

→ Why does it matter? Salesforce CEO Marc Benioff, whose V2MOM framework guides the company, talks AI's impact on SaaS. For many enterprise SaaS companies, Salesforce is the one to watch – not just for past wins (their massive SF skyscraper might suggest something's going right… they just hit $40B ARR), but because how they handle this AI shift will offer helpful lessons for everyone. He details AI's strategic integration into platforms like Slack and Tableau, signaling a big industry move. Benioff's perspective is definitely worth checking out to get a better sense of where SaaS and AI are heading.

Quotes & eyewash:

The Seven-Year Rule - David Sparks reflects on how major life and work changes tend to follow a seven-year rhythm—and why recognizing that pattern can lead to more intentional decisions. Every 7 years you’re a new person. - MacSparky

The mission:

The Wall Street Journal once used ‘Read Ambitiously’ as a slogan, but it became a challenge I took to heart. If that old slogan still speaks to you, this weekly curated newsletter is for you. Every week, I will summarize the most important and impactful headlines across technology, finance, AI and enterprise SaaS. Together, we can read with an intent to grow, always be learning, and refine our lens to spot the best opportunities. As Jamie Dimon says, “Great leaders are readers.”