Reading Ambitiously 8-22-25

Apple Intelligence, small language models, AI supernovas, enter the AI matrix, bitcoin $1M by 2030, the power of compounding, scooby dooby do, Dylan Patel on GPT 5 & semis, Oscar Health

Enjoy this week’s Big Idea read by me:

The big idea: Apple Intelligence is “bad” but their architecture is a great bet

In June 2007, I camped outside a Cingular Wireless store to buy the first iPhone. It had 8 GB of storage and cost $600. I paid in cash. I spent twenty hours in folding chairs meant for kids’ soccer games. People walking by offered judgmental stares. Why wait all night for a phone? It had no 3G, no App Store, and no GPS. And we didn’t care; we were early adopters.

When Apple launched Apple Intelligence last year, I had to have it immediately. This time, Apple’s success worked against them. They had to launch it to hundreds of millions of people at once. Not a group of devout believers who were willing to sleep outside.

The initial reaction: it sucks. Bad enough that it became an internet meme.

Why? Is Apple stumbling on AI? Is the iPhone going to be disrupted by its original designer, now at OpenAI? Maybe. But the real story is different. Apple made the right AI architectural choices, but they haven’t worked yet.

A Small Language Model, or SLM, is at the heart of Apple Intelligence. Apple runs the SLM on-device whenever possible to maximize speed, privacy, and cost efficiency. For complex tasks, it hands off to a larger model running on Apple's servers, through what Apple calls Private Cloud Compute.

While Apple may look behind now, remember that the first iPhone didn’t have an App Store, couldn’t stream music, and couldn’t even give you GPS directions. Only with hindsight do we see how great a product it would become.

Apple Intelligence feels at a similar early stage. It is limited today, but it is built on the right bet: a Small Language Model on the device backed by a larger one in the cloud.
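To make that bet concrete, here is a minimal sketch of the "small model on the device, larger one in the cloud" pattern. It is not Apple's implementation: the task names, the word-count cutoff, and the `choose_route` and `answer` helpers are all hypothetical, chosen only to show the shape of the routing decision.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical list of narrow tasks a small on-device model might handle.
ON_DEVICE_TASKS = {"rewrite_email", "summarize_notification", "quick_reply"}

# Illustrative cutoff only; not a figure published by Apple.
MAX_ON_DEVICE_WORDS = 500


@dataclass
class Route:
    target: str  # "on_device" or "cloud"
    reason: str


def choose_route(task: str, prompt: str) -> Route:
    """Prefer the local small model; fall back to the cloud for anything else."""
    is_short = len(prompt.split()) <= MAX_ON_DEVICE_WORDS
    if task in ON_DEVICE_TASKS and is_short:
        return Route("on_device", "narrow task with a short input")
    return Route("cloud", "complex or long request")


def answer(
    task: str,
    prompt: str,
    small_model: Callable[[str], str],
    large_model: Callable[[str], str],
) -> str:
    """Send the prompt to whichever model the router picks."""
    route = choose_route(task, prompt)
    model = small_model if route.target == "on_device" else large_model
    return model(prompt)


if __name__ == "__main__":
    # Stand-in models so the sketch runs without any real AI backend.
    small = lambda p: f"[small model] {p}"
    large = lambda p: f"[large model] {p}"
    print(answer("quick_reply", "On my way", small, large))
    print(answer("plan_trip", "Plan a week in Japan", small, large))
```

The design choice worth noticing is the default: everything that can stay local does, and the cloud is the exception rather than the rule.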

What are Small Language Models?

Small language models (SLMs) are AI systems light enough to run on a laptop, phone, or single server instead of a hyperscale data center. NVIDIA defines “small” as under 10 billion parameters. For context, frontier models like GPT-5 are trained on a large share of the public internet, and while their sizes are not officially disclosed, they are widely reported to have hundreds of billions of parameters.

Just as Apple is embedding smaller models on-device to make email rewrites, notification summaries, and quick replies feel instant and private, enterprises can use SLMs for narrow, repeatable, and high-volume jobs. Examples include parsing thousands of daily trade confirmations into a standard format, generating standardized earnings call summaries, filling in CRM records from client emails, powering a helpdesk bot with a fixed set of answers, or writing boilerplate compliance disclosures and risk warnings.

According to NVIDIA’s latest research, SLMs are no longer toys. They’re catching up fast to their larger cousins:

Microsoft Phi-2 (2.7B): Performs like a 30B model on reasoning and code tasks (in practice, it can solve logic puzzles and write software at the level of models more than 10x its size).

NVIDIA Nemotron-H (2–9B): Matches 30B models in instruction following (it takes directions precisely, which is critical for agents that need structured outputs like JSON or step-by-step actions).

Salesforce xLAM-2 (8B): Outperforms GPT-4o on tool calling (in plain terms, it’s better at knowing when and how to call an external API or database).

Google Gemma 3 270M: A hyper-compact 270 million parameter model optimized for efficiency and on-device use (it follows instructions well out of the box, can be fine-tuned quickly, and runs at extreme energy efficiency — Google demonstrated it consuming less than 1% of battery for 25 conversations on a Pixel 9 Pro).

And the ace in the hole is cost. NVIDIA’s researchers estimate that serving a 7B SLM is 10–30x cheaper than serving a 70–175B large model. That means companies can now build specialized AI at a fraction of the cost and put it closer to where the work happens.
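Here is what a 10–30x gap looks like as back-of-the-envelope arithmetic. This is a rough sketch: the $30,000 monthly bill is a made-up number for illustration, not a real quote, and `slm_cost_range` is a hypothetical helper.

```python
from typing import Tuple


def slm_cost_range(
    llm_cost: float, low_ratio: float = 10.0, high_ratio: float = 30.0
) -> Tuple[float, float]:
    """Return the (lowest, highest) SLM cost implied by a 10-30x saving."""
    return llm_cost / high_ratio, llm_cost / low_ratio


if __name__ == "__main__":
    # Hypothetical monthly spend on a large model for one high-volume workflow.
    llm_monthly = 30_000.0
    lowest, highest = slm_cost_range(llm_monthly)
    print(f"Large model: ${llm_monthly:,.0f} per month")
    print(f"7B SLM:      ${lowest:,.0f} to ${highest:,.0f} per month")
```

On those assumptions, a $30,000 monthly bill drops to somewhere between $1,000 and $3,000. The real ratio will depend on the workload, the hardware, and how much fine-tuning the smaller model needs.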

We’ve Seen This Movie Before

Every major wave in computing has followed the same pattern: power starts centralized, then gets smaller, cheaper, and closer to the user.

Mainframes → Client-Server: In the 1960s and 70s, computing lived on massive mainframes that only the most prominent organizations could afford. Then came client-server, which pushed processing power onto cheaper, distributed machines. It opened the door for far more companies to adopt computing.

Desktop → Mobile: In the 1980s, PCs brought computing to every desk. By the 2000s, smartphones put it in every pocket. Each shift wasn’t just about form factor. It created new markets because computing got personal, contextual, and embedded in daily workflows.

Moore’s Law, the observation that the number of transistors on a chip doubles roughly every two years, making compute exponentially cheaper and more powerful, underpinned all of this. That cost curve made decentralization possible.

SLMs fit squarely into this history. Large language models are today’s mainframes: centralized, expensive, impressive, but gatekept. Small models are the PCs and smartphones of AI: local, personal, and specialized. They may not be as broadly capable, but they’re good enough for the jobs most people and businesses need and cheap enough to run everywhere.

As Andrej Karpathy put it in a recent talk at Y Combinator:

“Large language models aren’t just tools — they’re becoming new operating systems.”

His analogy is that today’s LLMs look like the time-sharing mainframes of the 1960s: powerful but centralized. What comes next is the PC moment for AI, where smaller, cheaper, and local models put that capability in everyone’s hands.

The economic logic is the same as every prior cycle: once compute gets smaller and more efficient, it stops living only in the data center and starts living at the edge. The edge simply means running AI near where data originates, whether on your phone, your laptop, or inside enterprise systems, rather than relying on a remote cloud. That shift creates whole new categories of applications that weren’t viable before.

The Fork in the Road: Open or Closed

The question with Small Language Models is not whether they work but who controls them. That’s critical because trust is the #1 topic in AI.

We have seen this movie too. In the 1990s, Microsoft fought to keep Windows closed. Sun Microsystems, co-founded by Scott McNealy, went the other way. Sun began open-sourcing Java in 2006, which led to OpenJDK. That decision standardized enterprise software and assured companies their investments would outlast any vendor. It became the backbone of, frankly, the internet. We talked about safety with McNealy in Reading Ambitiously back in January, and he said:

“Open-source code is far safer. If there’s a secret in proprietary code, the only people who know it are inside the company. If there’s a bug or a backdoor, someone within that company knows about it. And humans? Fundamentally, they can’t keep secrets.”

AI is heading down the same path. Closed models like GPT-5 or Claude Opus 4.1 sit behind APIs. They are powerful but come with dependency and cost risk. In contrast, open Small Language Models can be downloaded, fine-tuned, and run on your own infrastructure. That transparency makes it much easier to explain to customers and regulators what’s happening behind the scenes.

Even OpenAI now acknowledges this. In August, it released its first open-weight models since GPT-2: gpt-oss-120b and gpt-oss-20b. Open-weight means the model’s parameters are available for anyone to download and run, but unlike true open source, the training data and code are not fully public. The smaller version can run on commodity hardware with 16 GB of memory. Both are viable for enterprise workloads, on-prem, or at the edge.
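Why can a 20-billion-parameter model fit in 16 GB? A rough rule of thumb is that the memory needed for the weights is the parameter count times the bits stored per parameter, divided by eight to get bytes. The sketch below assumes roughly 4-bit weights, takes the parameter counts from the model names, and ignores the extra memory a model needs while running, so treat it as an estimate rather than a spec. `weight_memory_gb` and `fits` are hypothetical helpers.

```python
def weight_memory_gb(num_params: float, bits_per_param: float) -> float:
    """Approximate memory for model weights alone, in gigabytes (10^9 bytes)."""
    total_bytes = num_params * bits_per_param / 8
    return total_bytes / 1e9


def fits(num_params: float, bits_per_param: float, device_gb: float) -> bool:
    """True if the weights alone fit in the given memory budget."""
    return weight_memory_gb(num_params, bits_per_param) <= device_gb


if __name__ == "__main__":
    # Nominal sizes taken from the model names; 4-bit storage is an assumption.
    for name, params in [("gpt-oss-20b", 20e9), ("gpt-oss-120b", 120e9)]:
        at_4_bit = weight_memory_gb(params, 4)
        at_16_bit = weight_memory_gb(params, 16)
        verdict = "fits" if fits(params, 4, 16) else "does not fit"
        print(f"{name}: ~{at_4_bit:.0f} GB at 4-bit, ~{at_16_bit:.0f} GB at 16-bit; "
              f"{verdict} in 16 GB at 4-bit")
```

Under those assumptions, the 20B model needs about 10 GB for its weights and squeezes into a 16 GB machine, while the 120B model needs about 60 GB and belongs on a server.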

A 7B to 20B open model costs less than a closed large model. You can tune it to your proprietary data. And because you can audit and govern it, regulators and boards will treat it differently. That is the same logic that made Java the safe choice in the enterprise era.

OpenAI’s move signals that the edge belongs to open SLMs. The market will likely follow if the most successful closed-model company seeds open weights.

The Bottom Line

As an Apple fanboy, it pains me to say it: Apple Intelligence isn’t great yet. It feels like a toy. That is how disruptive technologies usually begin. As Chris Dixon of Andreessen Horowitz writes:

“The next big thing always starts out being dismissed as a ‘toy.’”

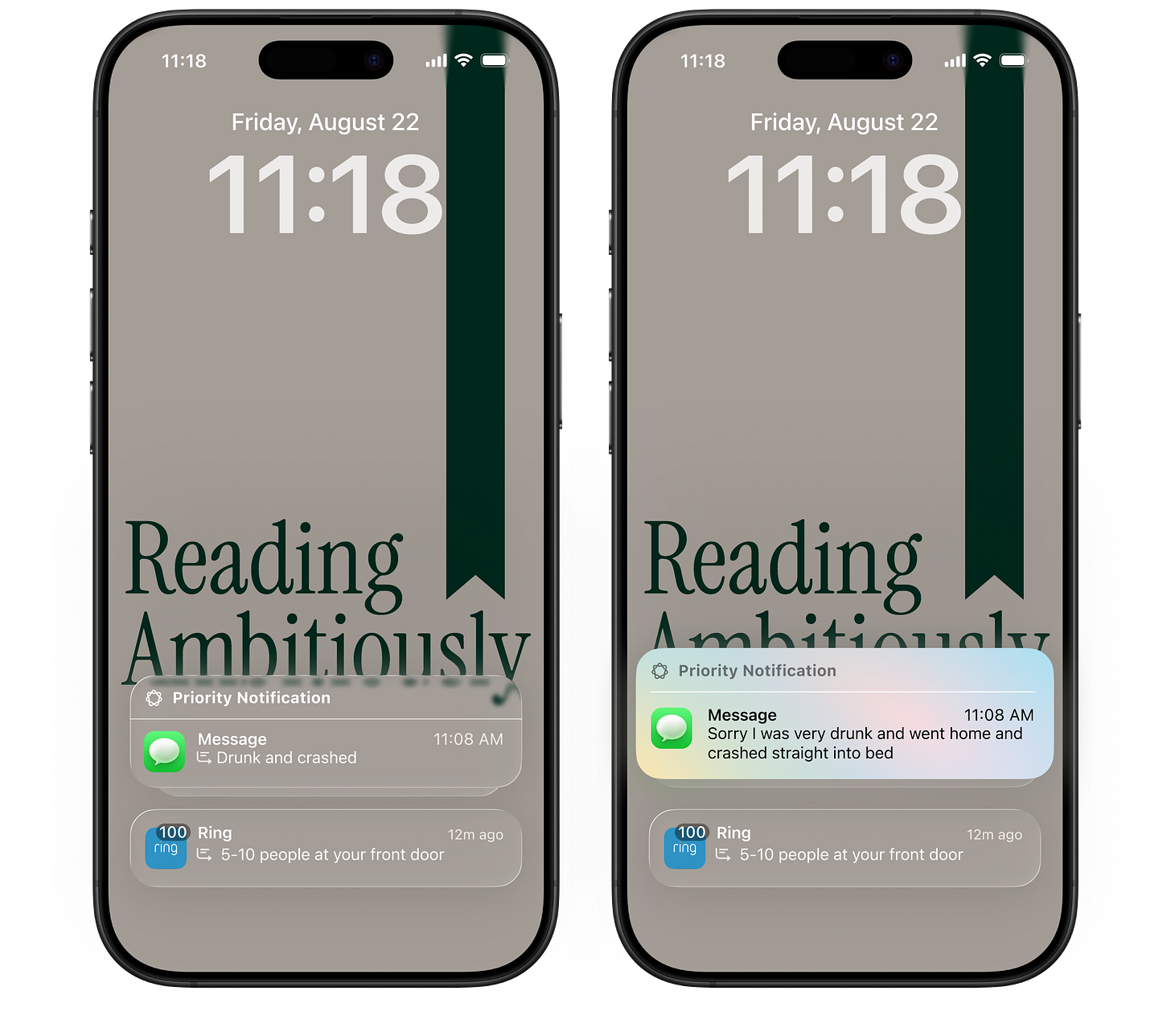

The telephone, the PC, and even Wikipedia all fit that pattern. They started small, they undershot what users needed (à la letting you know your friends are drunk and crashed), and then they compounded faster than anyone expected.

Karpathy says the “PC revolution” for AI has not started yet. Apple’s choice to anchor on a small language model on the device, backed by a larger model in the cloud, puts it in a good position for that moment. The product may feel limited now, but the design aligns with where the world is heading: cheaper, faster, safer, and private AI running as close to the user as possible.

Apple should stay committed to that bet. History is on their side. We have seen this movie before, and we are about to see it again.

Best of the rest:

🧩 Class Dismissed – From feared software baron to elementary school principal, Joe Liemandt’s improbable life reveals both the contradictions of modern ambition and the values we project onto power. A must-read from the team at Colossus. Gosh, do I love their profiles. – Colossus

🔌 AI’s Infrastructure Demands – Sands Capital argues that accelerating AI training and inference workloads are creating a multiyear “compute super-cycle,” with scarce advanced chip fabrication and packaging capacity giving select infrastructure providers durable pricing power and high barriers to entry. – Sands Capital

🔥 Hire people who give a shit. A simple formula for success – An oldie but a goodie: Alex Wang argues that obsession with mission and work, not credentials, is the only real predictor of meaningful impact. – Alex Wang on Substack

🤖 How to Start a Lean AI-Native Startup – Henry Shi lays out a framework for building startups that are efficient, AI-first, and capital-light, combining lean startup principles with deep AI integration, seed-strapping funding models, and real-world examples from his Lean AI Leaderboard. – Henry the 9th

Charts that caught my eye:

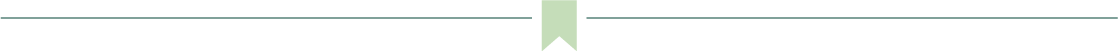

→ Why does it matter? It used to be called “T2D3,” short for “Triple, Triple, Double, Double, Double” - the five-year growth curve of a fast-growing SaaS company. But these AI “supernovas” are scaling even faster. The big question: is the revenue durable? Right now, a lot of AI dollars are chasing the “next best thing,” then moving on quickly as the pace of development accelerates. Think about how fast we went from GitHub Copilot as the go-to coding tool to Cursor.

Tweets that stopped my scroll:

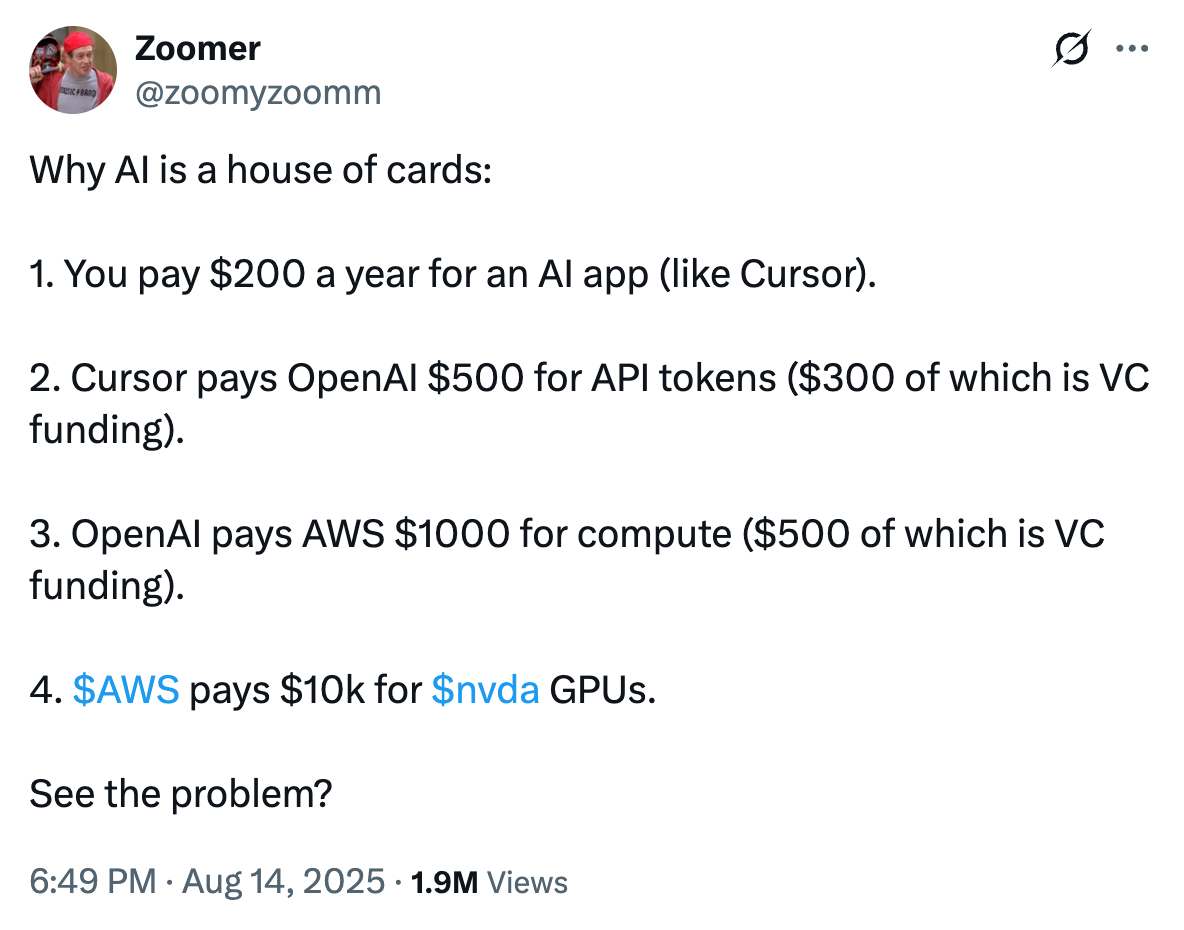

→ Why does it matter? If you’re a frequent Reading Ambitiously reader, you know I’m a huge AI bull. We’ve also discussed AI’s parallels to the dot-com era. It’s healthy to look at the other side of the argument. The biggest flaw I see in Zoomer’s tweet here is his assumption about how much people are going to pay. Sure, you pay $200/year for Cursor today, but most companies pay $200k+/year for a software engineer. No doubt, financial markets are exuberant and are pricing in a lot of the AI value creation right now.

→ Why does it matter? If you’ve been reading about the Model Context Protocol (MCP) and are curious what’s possible by setting up an MCP server, this is a great demonstration from Shopify! MCP is an open standard that lets AI systems securely connect to external tools and data sources, so they can act on your behalf in context-aware ways.

→ Why does it matter? As a huge fan of The Matrix, this one had me laughing out loud. All generated by AI. 🤯

Worth a watch or listen at 1x:

→ Why does it matter? Brian Armstrong is the Founder & CEO of Coinbase. Nobody better than him to hear about where Digital Assets are headed.

→ Why does it matter? Dylan Patel is perhaps the world’s foremost expert on semiconductors. He is the original source for most research on the market. Must listen if you want to understand where the GPU market is headed, and learn about Nvidia’s competitive moats.

→ Why does it matter? It’s a rare opportunity to hear from one of the best healthcare operators in the world. You will be hooked in the first five minutes of Mark Bertolini’s conversation with Patrick O’Shaughnessy.

Quotes & eyewash:

→ Why does it matter? Too funny. Scooby dooby do!

→ Why does it matter? The greatest things in life come to those who wait!

The mission:

The Wall Street Journal once used “Read Ambitiously” as a slogan, but I took it as a personal challenge. Our mission is to give you a point of view in a noisy, changing world. To unpack big ideas that sharpen your edge and show why they matter. To fit ambition-sized insight into your busy life and channel the zeitgeist into the stories and signals that fuel your next move. Above all, we aim to give you power, the kind that comes from having the words, insight, and legitimacy to lead with confidence. Together, we read to grow, keep learning, and refine our lens to spot the best opportunities. As Jamie Dimon says, “Great leaders are readers.”