Reading Ambitiously 5.1.26 - The next killer app is AI computer use

AI computer use sounds reckless. It may also be the next step in moving AI from answering questions to actually getting work done.

The big idea: The next killer app is AI computer use

Reading time: 8 minutes

The next killer application for AI may sound like a bad idea.

Give it access to your computer.

Access to the actual machine where knowledge work happens: your browser, file system, productivity tools like Excel and Word, inbox, calendar, and all the half-finished context that makes modern work feel modern.

That sounds reckless.

It may also be the most logical next step toward realizing AI’s full potential.

It’s called computer use. The AI uses a keyboard, mouse, browser, and operating system just like humans do.

If AI is going to automate knowledge work, it eventually has to get “hands on” with the knowledge worker’s primary tools.

The strange part is not that this is technically possible, although the first time you see AI driving around your computer, it’s mind-boggling.

The strange part is that it may make sense to let AI operate the machine where work already happens.

The factory floor of knowledge work

The Industrial Revolution did not eliminate work. It reorganized it.

Labor moved from farms to factories, then from factories to offices. The modern knowledge worker was not born in opposition to automation. In many ways, knowledge work was created by it.

Today, the factory floor of knowledge work is a screen.

The machinery is familiar: inboxes, spreadsheets, decks, agendas, tabs, calendar invites, PDFs.

This is where most work happens.

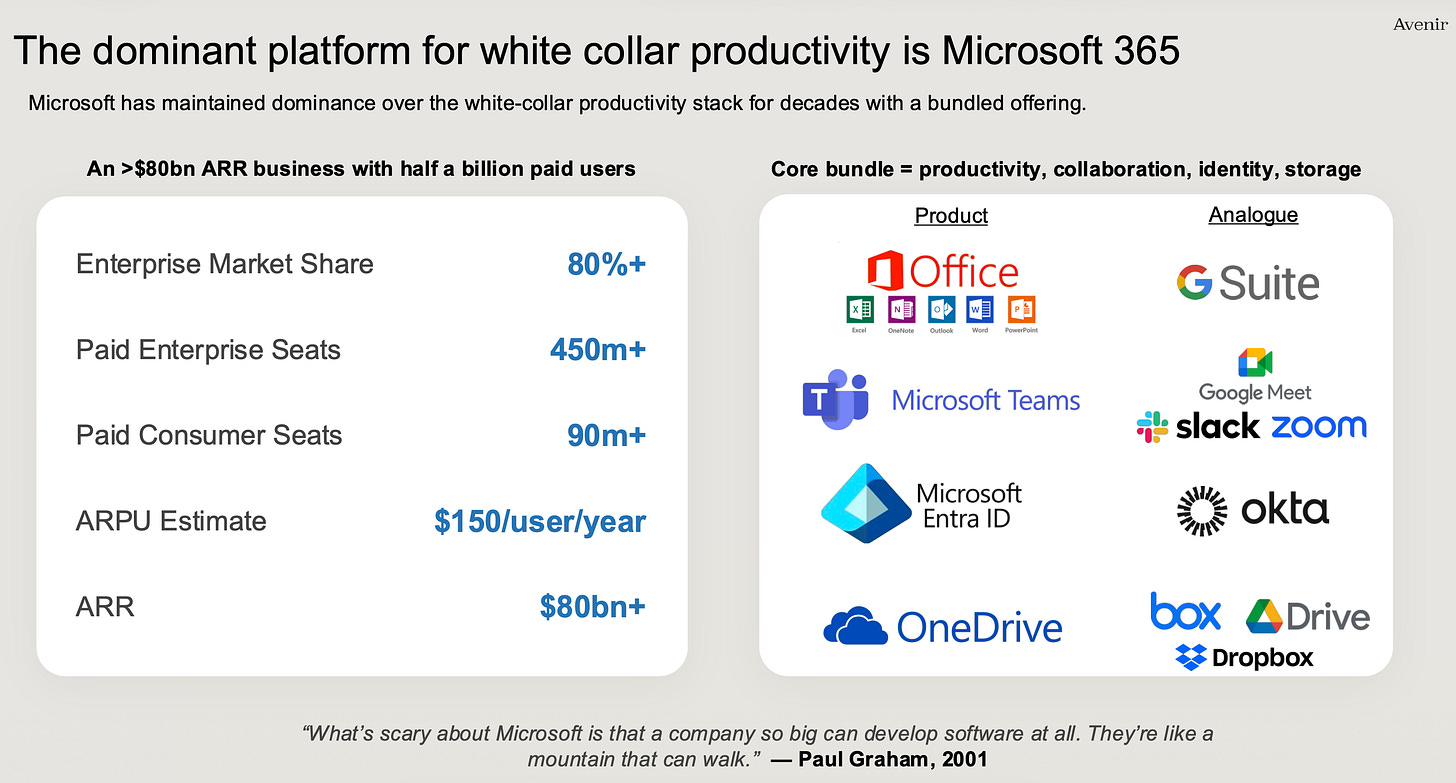

And the dominant provider of the tools for this work is Microsoft, anchored in Office and the broader Microsoft 365. Microsoft 365 has more than 80% enterprise market share, more than 500 million paid seats, and more than $80 billion in revenue.

Paul Graham once called Microsoft “a mountain that can walk.”

AI computer use is not circling a small category. It is circling this mountain.

And this makes a lot of sense. The AI is moving out of the chat window and into the environments where real work happens.

The approach has already proven itself in agentic coding, which is nearly perfect terrain for AI: codebases, terminals, tests, logs, and GitHub.

Knowledge work is messier. It lacks the cleaner, more structured environment of software development.

It has the computer.

The first wave of products is here

For decades, productivity software assumed humans pushed the buttons. Users opened the application, wrote the words, attached the file, updated the spreadsheet, and sent the email.

Computer use gives AI the ability to press the buttons.

Humans still provide the desired outcome, but the AI performs as many steps as possible.

Claude Cowork is a great example. It moves beyond the prompt window and starts working across your files, browser, folders, and applications to complete tasks autonomously.

Microsoft recently released its competitor: Copilot Cowork.

Other examples include Perplexity Computer, Factory’s Droid Computers, OpenClaw, and Hermes. Just yesterday, Manus (acquired by Meta) launched its competitor.

And, of course, OpenAI is pursuing this with Codex. In this demo, you can actually see the AI using a mouse.

Different products, same idea.

Move the AI into an “always on” position and into the place where work happens.

The harness is the product

A framework for building these types of AI computer-use products is coming into focus.

A few weeks ago, we wrote that the harness is the product. A 2x user and a 100x user can be using the same AI model. The difference is the harness.

The harness is the program that wraps the model and helps it do useful work. A good harness gives the model context, tools, and memory management. It decides what belongs in the context window and when. It decides what the model should handle and what should be routed to more precise software.
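To make the context-window point concrete, here is a minimal sketch of one way a harness might decide what the model sees: rank candidate snippets by relevance and greedily keep the best ones that fit a token budget. The `ContextItem` type, the relevance scores, and the token counts are hypothetical; real harnesses use their own retrieval and tokenization, and greedy packing is only one simple policy.

```python
from dataclasses import dataclass


@dataclass
class ContextItem:
    text: str
    relevance: float  # hypothetical score, e.g. from a retriever
    tokens: int  # hypothetical token count for this snippet


def pack_context(items: list[ContextItem], budget: int) -> list[ContextItem]:
    """Keep the most relevant items that fit within the token budget."""
    chosen: list[ContextItem] = []
    used = 0
    # Consider the most relevant items first; skip any that would overflow.
    for item in sorted(items, key=lambda i: i.relevance, reverse=True):
        if used + item.tokens <= budget:
            chosen.append(item)
            used += item.tokens
    return chosen


candidates = [
    ContextItem("Q3 budget spreadsheet summary", relevance=0.9, tokens=400),
    ContextItem("Unrelated lunch thread", relevance=0.1, tokens=300),
    ContextItem("Client email asking for revised numbers", relevance=0.8, tokens=250),
]
for item in pack_context(candidates, budget=700):
    print(item.text)
```

With a 700-token budget, the sketch keeps the spreadsheet summary and the client email and leaves the unrelated thread out of the model's view.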

With a good harness, the AI can make better decisions to achieve accurate, high-quality outcomes.

A simple example is arithmetic.

It makes no sense to ask the AI to do arithmetic when a deterministic calculator already exists. The AI should know when to call the calculator. It should know when to query the database. It should know when to use the browser, open the file, run the script, or ask the human for approval.
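A minimal sketch of that routing decision, assuming a toy harness: requests that parse as plain arithmetic go to a deterministic calculator, and everything else goes to the model. `calculate`, `route`, and `ask_model` are hypothetical names, and `ask_model` is a stand-in rather than a real model API. A production harness would more likely let the model choose among tools than rely on a parse check.

```python
import ast
import operator

# Deterministic calculator: the same input produces the same output every time.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
    ast.USub: operator.neg,
}


def calculate(expression: str) -> float:
    """Evaluate basic arithmetic by walking the syntax tree (no eval())."""
    def _eval(node: ast.AST) -> float:
        if isinstance(node, ast.Expression):
            return _eval(node.body)
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](_eval(node.left), _eval(node.right))
        if isinstance(node, ast.UnaryOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](_eval(node.operand))
        raise ValueError(f"not plain arithmetic: {expression!r}")

    return _eval(ast.parse(expression, mode="eval"))


def ask_model(request: str) -> str:
    # Hypothetical stand-in for a call to a language model.
    return f"[model handles: {request}]"


def route(request: str) -> str:
    """Send pure arithmetic to the calculator; send everything else to the model."""
    try:
        return str(calculate(request))
    except (ValueError, SyntaxError):
        return ask_model(request)


print(route("12 * 7 + 3"))
print(route("Summarize this email"))
```

The design choice mirrors the argument: the model never sees "12 * 7 + 3", because software that is always right already exists for that job.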

That is the difference between a model with access to tools and a system that knows how to work.

The model is useful for judgment: reading, synthesis, and classification.

Deterministic tools are useful for precision: queries, calculations, and workflows where the same input should produce the same output every time.

The worst agent systems confuse the two. They ask the model to do arithmetic. They force it to reason through tasks that should have been handled by software.

The best systems push intelligence up into skills and push execution down into deterministic tools.

This is how AI computer use becomes a lot more than AI clicking around on your screen.

It becomes a system that lives in the knowledge worker’s environment and can get work done.

Building a moat into the wrapper

If the model is not the whole product, the next question is where the IP lives.

It may live in the harness: tooling, memory, permissions, routing, evals, and integration.

It may live in the skills: reusable procedures that encode how work gets done.

It may live in the proprietary datasets: examples, corrections, approvals, exceptions, and workflows that teach the system what “right” looks like.

It may live in the integrations: the ability to touch the systems you need to drive outcomes.

It may live in the tacit knowledge that gets captured over time: your deep understanding of the domain, the judgment embedded in your workflows, the “secret sauce” of how you do what you do.

This is not traditional software IP. The crown jewels used to be the code.

This is not code.

It is institutional knowledge that augments the intelligence of the AI.

A skill is a reusable procedure. It captures the process, judgment, and context required to perform a task. The user supplies the specific request. The skill supplies the method.

Imagine a skill for investigating a company. It might tell the model to pull filings, build a timeline, compare management commentary against financial results, identify contradictions, summarize both sides of the argument, and cite the sources.

The same skill can be applied to different companies, questions, and datasets.
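A skill can be sketched as data: a fixed method plus a slot for the user's request. The `Skill` class and the `investigate-company` name below are hypothetical illustrations of the company-investigation example above, not any product's actual skill format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Skill:
    """A reusable procedure: the method is fixed, the request varies."""
    name: str
    steps: tuple[str, ...]

    def render(self, request: str) -> str:
        """Combine the skill's method with one specific user request."""
        numbered = "\n".join(
            f"{n}. {step}" for n, step in enumerate(self.steps, start=1)
        )
        return f"Skill: {self.name}\nRequest: {request}\nMethod:\n{numbered}"


investigate_company = Skill(
    name="investigate-company",
    steps=(
        "Pull the company's filings.",
        "Build a timeline of key events.",
        "Compare management commentary against financial results.",
        "Identify contradictions.",
        "Summarize both sides of the argument.",
        "Cite the sources.",
    ),
)

# The same method, applied to two different (hypothetical) requests.
print(investigate_company.render("How has Company A's margin story changed?"))
print(investigate_company.render("Is Company B's growth guidance credible?"))
```

Only the request line changes between calls; the method, which is where the institutional knowledge lives, is written once and reused.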

That is not prompt engineering. It is software design in a language the model understands.

If the AI can help perform the work, observe the pattern, and codify the process, the workflow itself becomes an asset.

Trust is essential

By now, you’ve probably formulated an opinion on whether you’d give AI access to your computer.

My hunch is that most readers are a “no.”

You could buy a separate computer and give the AI its own machine. That’s what I’m doing. The base Mac mini was recently unavailable in Apple’s online store, and TechCrunch reported that marked-up Mac minis were appearing on eBay amid demand for machines that can run on-device AI.

Giving AI access to your computer is not a small permission. It is like giving a junior employee your laptop, your badge, and your password, then telling them to log in and help you get things done.

That employee may be capable. They may also misunderstand the task, click the wrong button, expose sensitive data, or post the wrong thing in the wrong place with confidence.

In the human world, we manage that risk with roles, permissions, training, audit trails, and supervision. In security, ideas like Zero Trust start from a similar premise: do not assume trust just because someone or something is inside the system. Verify access. Limit permissions. Require the right approval at the right time.

It may be unglamorous, but AI will need this kind of trust machinery for both consumer and enterprise use.

And this is a big reason enterprise incumbents have a leg up. They have already built much of the machinery that makes software usable in real companies.

Computer use will raise the bar here.

The AI cannot just use the computer. It has to use the computer as someone, with a specific role, a specific set of permissions, and a specific boundary around what it can and cannot do.
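A minimal sketch of what "using the computer as someone" could look like inside a harness, assuming a deny-by-default policy: every action the agent attempts is checked against its role, and sensitive actions are routed to a human for approval. The role name and action names (`read_file`, `send_email`, and so on) are hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


@dataclass
class AgentRole:
    """The identity the AI acts as: a role with explicit boundaries."""
    name: str
    allowed: set[str] = field(default_factory=set)
    needs_approval: set[str] = field(default_factory=set)


def authorize(role: AgentRole, action: str) -> Decision:
    """Verify each action; anything not explicitly granted is denied."""
    # Approval requirements win if an action is listed in both sets.
    if action in role.needs_approval:
        return Decision.NEEDS_APPROVAL
    if action in role.allowed:
        return Decision.ALLOW
    return Decision.DENY


assistant = AgentRole(
    name="research-assistant",
    allowed={"read_file", "open_browser", "draft_email"},
    needs_approval={"send_email"},
)

for action in ("read_file", "send_email", "delete_file"):
    print(action, authorize(assistant, action).value)
```

Deny-by-default follows the Zero Trust premise described above: being inside the machine grants nothing on its own.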

Without that, it is not a product that companies can trust.

It is a very impressive prototype.

From productivity tools to supervising the work

The knowledge worker’s current tools give humans what they need to do the work.

Documents. Spreadsheets. Slides. Email. Calendars. Chat.

The new productivity layer may use all of these tools. It may not replace Excel. It may use Excel. It may not replace Outlook. It may use Outlook.

This is the strategic tension for Microsoft and Google. They may be mountains, but the terrain around them could change quickly.

The question is not whether AI gets added to productivity software. It will. The question is whether the knowledge worker’s primary tools remain the place where work happens, or become the surface on which AI operates.

For decades, the model was simple: learn the software, push the buttons, produce the work, share it.

The next model may be different: describe the outcome, watch the system work, approve what matters, share it.

From pushing the buttons yourself to supervising the work.

We may have to give the AI access to a computer to get there.

Stay ambitious.

Best of the rest:

🤖 OpenAI Misses Key Revenue, User Targets in High-Stakes Sprint Toward IPO — OpenAI’s growth story is colliding with the brutal economics of compute, as missed user and revenue targets raise harder questions about whether frontier AI can scale fast enough to justify its infrastructure arms race. — The Wall Street Journal

💰 Sources: Anthropic could raise a new $50B round at a valuation of $900B — Anthropic’s reported mega-round shows the AI capital race shifting from model capability to balance sheet capacity, with Claude’s coding momentum turning investor demand into a direct challenge to OpenAI’s scale advantage. — TechCrunch

🛡️ Our evaluation of OpenAI's GPT-5.5 cyber capabilities – AISI’s latest cyber eval shows GPT-5.5 pushing into frontier-risk territory, with one of the strongest performances they’ve measured and a rare end-to-end solve of a multi-step attack simulation. – AISI

⚠️ Cursor's warchest, xai's redemption – Ethan Ding argues Cursor’s rumored sale to xAI is less a victory lap than a warning shot for the AI application layer, where even breakout products can get squeezed when their model suppliers decide to own the margin pool. – mandates

Charts that caught my eye:

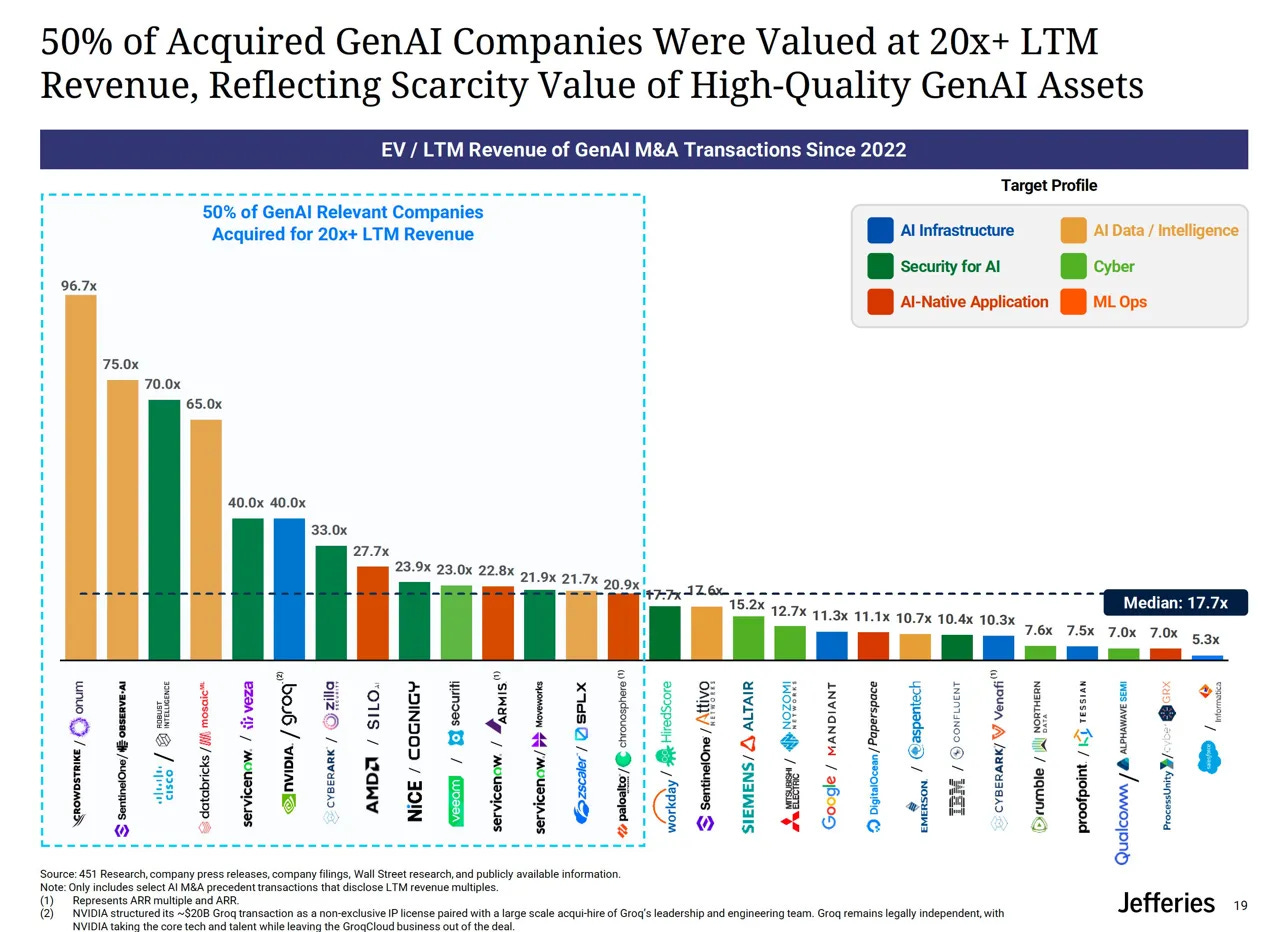

→ Why does it matter? Acquirers are paying scarcity prices for AI Native companies. At a 17.7x median and half the deals clearing 20x, buyers are betting that quality AI assets won’t get cheaper.

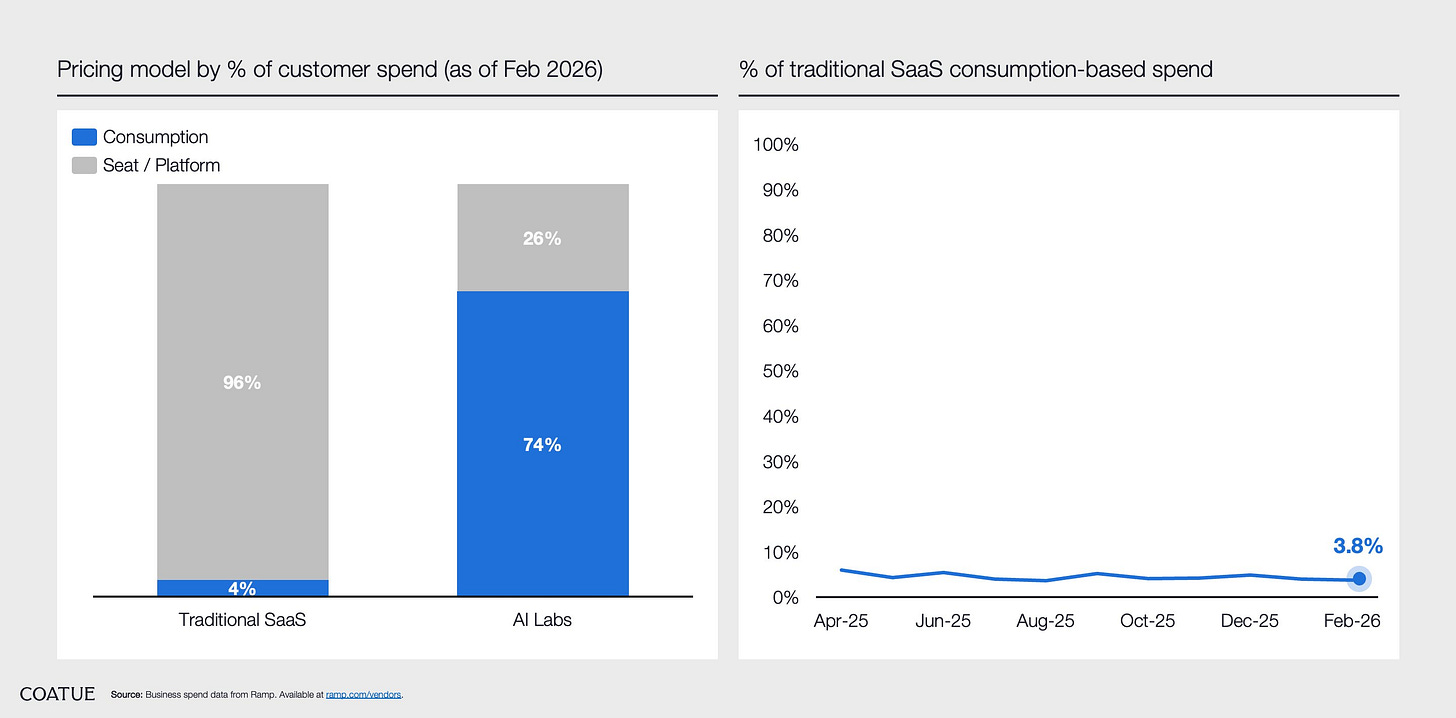

→ Why does it matter? AI labs built consumption pricing from day one. Traditional SaaS spent decades optimizing seat contracts, and that model is sticky: 3.8% consumption share after 11 months of AI pressure.

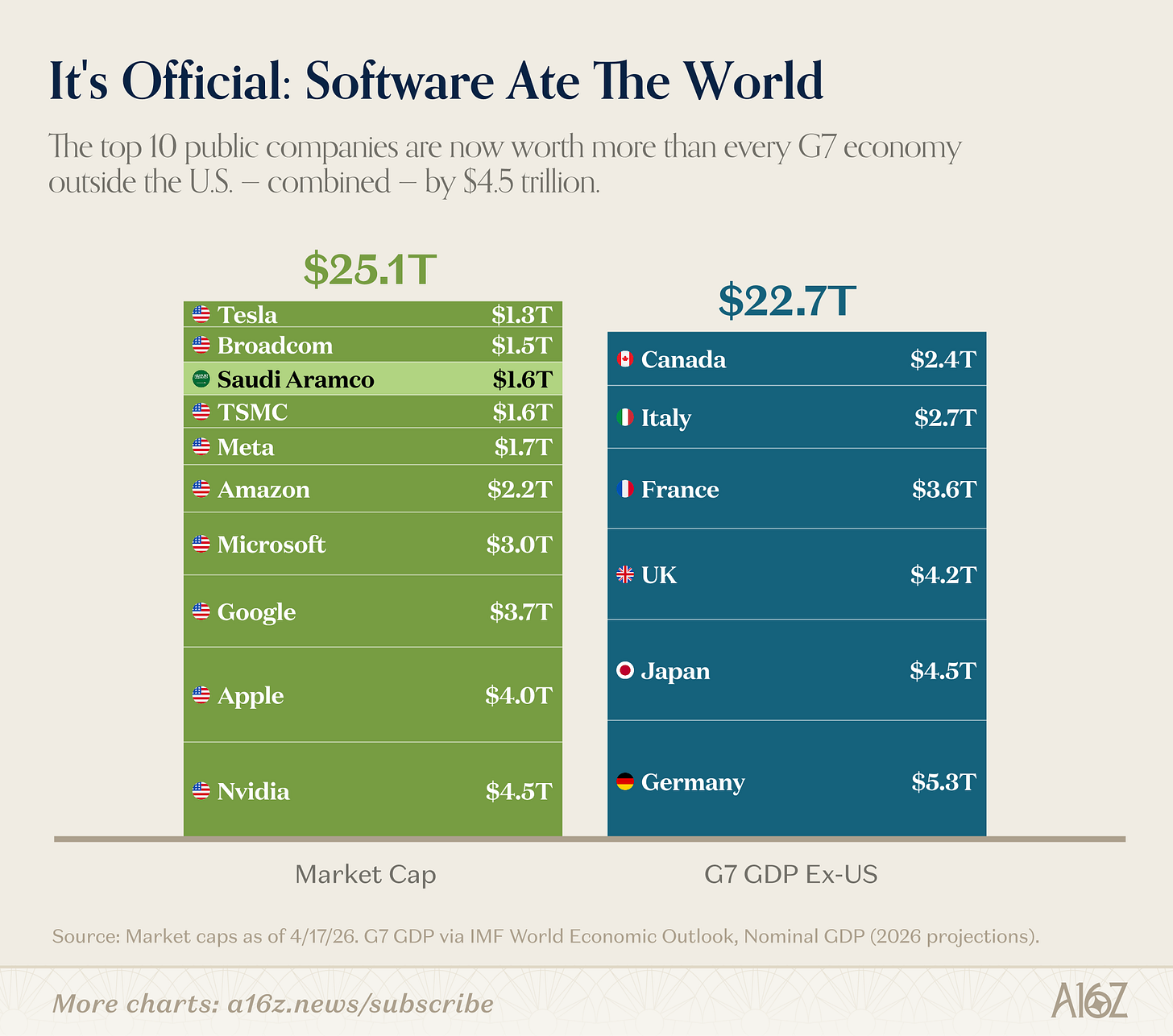

→ Why does it matter? Ten companies are now larger than the entire G7 national economies. Eight of those ten are American tech firms.

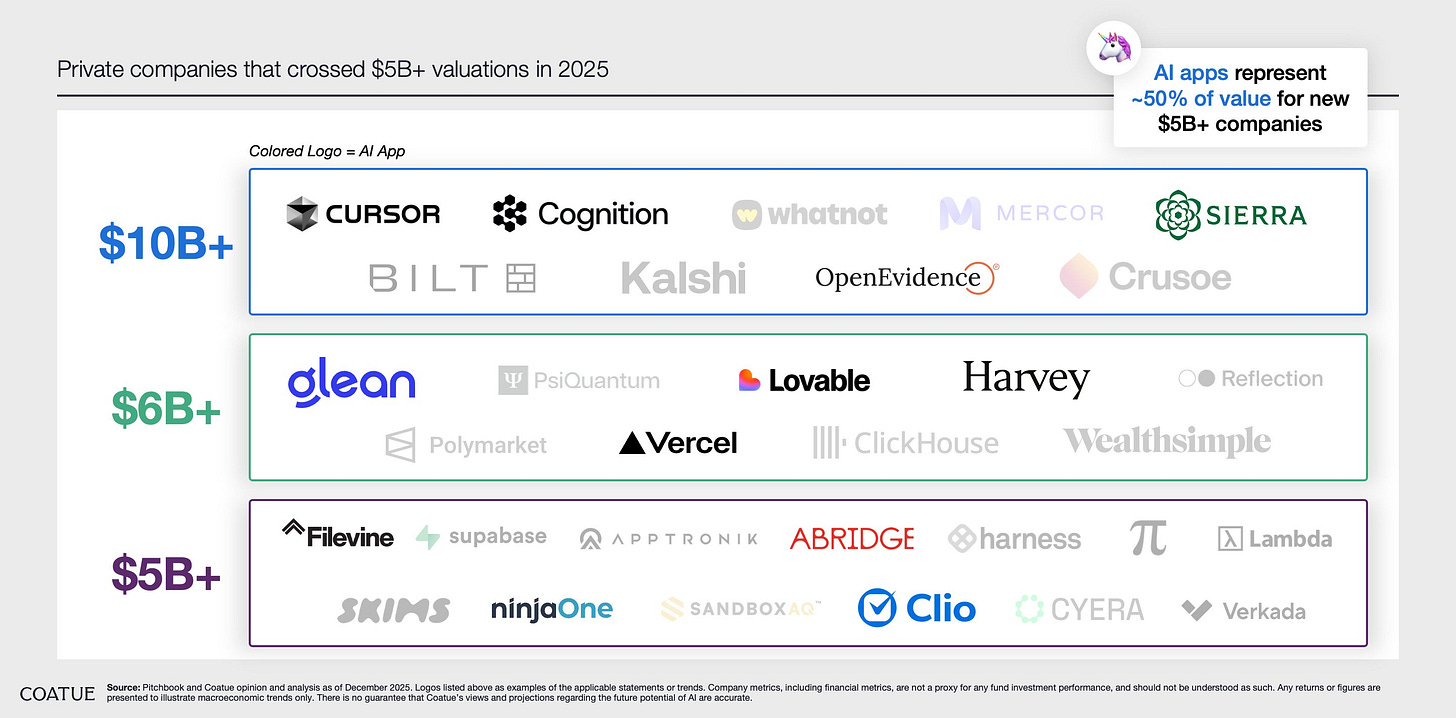

→ Why does it matter? Half the new $5B+ private companies in 2025 are AI apps, with the application layer (Cursor, Cognition, Harvey) clustering at the top. Capital is rewarding the layer closest to end users, not just the model labs underneath!

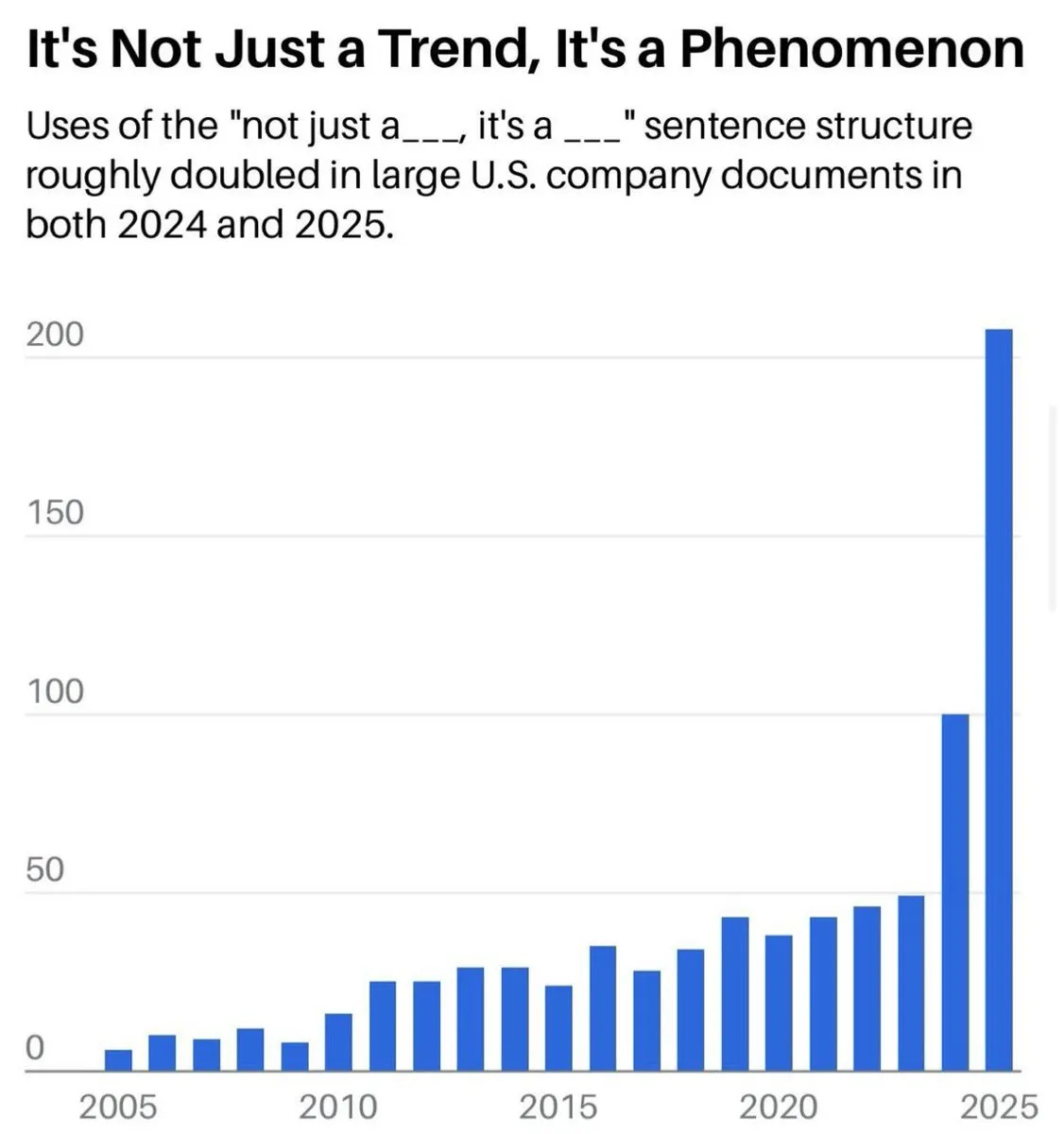

→ Why does it matter? The spike lines up almost exactly with ChatGPT’s public release. LLMs love dramatic rhetorical pivots and engagement-seeking styles. Your earnings calls may already sound like everyone else’s.

Tweets that stopped my scroll:

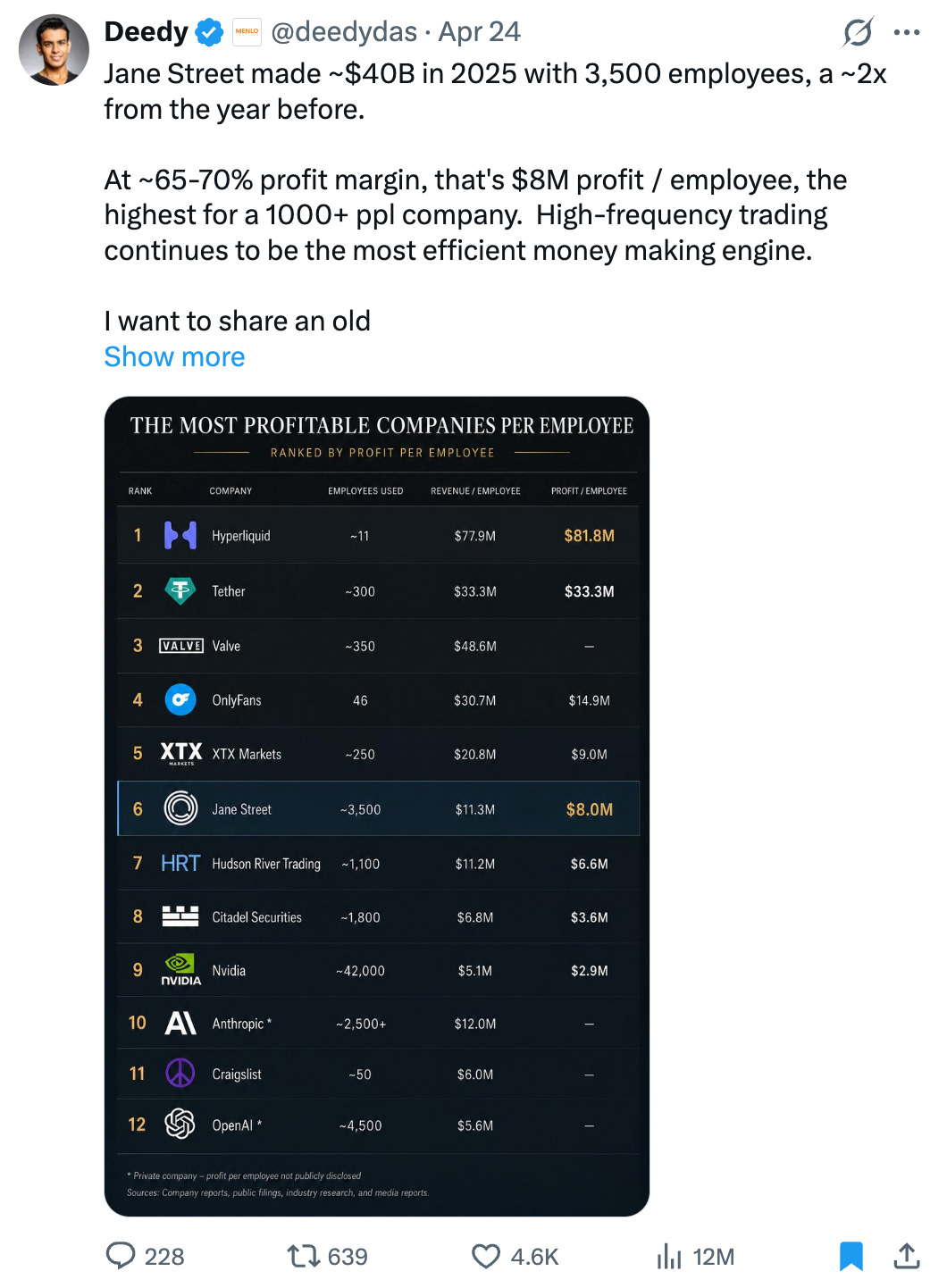

→ Why does it matter? Jane Street generates $8M in profit per employee, with 3,500 people. 🤯

→ Why does it matter? OpenAI building a phone with Luxshare (Apple’s own manufacturer) signals ambition well beyond ChatGPT and Codex. And they, of course, have Jony Ive!

→ Why does it matter? Telling people they slept well (even falsely) measurably improved their cognitive test scores. What you believe about your condition shapes your actual performance, not just your mood.

Worth a watch or listen at 1x:

→ Why does it matter? CEOs have a mandate from their boards to figure out AI. A pleasure to join Marley Kayden and Sam Vadas on Schwab’s Market on Close to discuss a wide range of topics, including Ridgeline’s AIOS for Asset & Wealth Management, AI computer use, the idea that the “it” in AI is the data set, and the SaaS apocalypse. Complete with Harry Potter references.

→ Why does it matter? Karpathy reframes LLMs as ghosts rather than animals: jagged, statistical, summoned. He also separates vibe coding from agentic engineering and argues you can outsource thinking but not understanding.

→ Why does it matter? Tudor Jones walks through the trader-versus-investor split, lessons from 1987 and the 1980 silver collapse, and his current read on the debt bubble and AI risk. This is a wide-ranging conversation with an industry legend. Must watch!

→ Why does it matter? Dwarkesh is on a hot streak (NYT profile this week), and this episode is a good example of why. Reiner Pope walks through how frontier models actually get trained and served, and a striking amount of it reduces to a handful of equations.

Quotes & eyewash:

→ Why does it matter? I pre-ordered David Epstein’s new book, Inside the Box, this week. Epstein’s previous bestseller, Range, made the case for generalists. His new book asks a different question: why do limits often produce better work? That feels relevant to AI right now. Look at DeepSeek. The company did not have the cleanest supply chain, the largest American-style lab budget, or unfettered access to Nvidia’s best chips. And yet, DeepSeek trained V3 on 2,048 H800 GPUs and claimed performance comparable to leading closed-source models at a fraction of the cost. The question is not whether DeepSeek is the best model in the world. It isn’t. The question is: why did a constrained team get this close? One answer: scarcity forced better architecture, better training efficiency, and a sharper focus on what mattered.

The mission:

The Wall Street Journal once used “Read Ambitiously” as a slogan, but I took it as a personal challenge. Our mission is to give you a point of view in a noisy, changing world. To unpack big ideas that sharpen your edge and show why they matter. To fit ambition-sized insight into your busy life and channel the zeitgeist into the stories and signals that fuel your next move. Above all, we aim to give you power, the kind that comes from having the words, insight, and legitimacy to lead with confidence. Together, we read to grow, keep learning, and refine our lens to spot the best opportunities. As Jamie Dimon says, “Great leaders are readers.”

Disclaimer: This content is for informational purposes only and does not constitute financial, investment, or legal advice. Readers should do their own research and consult with a qualified professional before making any decisions.